Nvidia Vera Rubin: The Trillion-Parameter Architecture Redefining Inference

Nvidia unveils the Vera Rubin platform, a rack-scale AI supercomputer designed for trillion-parameter models. With HBM4 memory and NVLink 6, Nvidia aims for a 10x reduction in inference costs.

As the GTC 2026 conference approaches, Nvidia has officially moved into the "Rubin Era." Named after the pioneering astronomer Vera Rubin, the new platform is a fundamental re-engineering of the AI data center. While Blackwell dominated 2024 and 2025, Vera Rubin is built for the 2027 reality: a world where trillion-parameter models are the baseline and autonomous agents require real-time, high-bandwidth reasoning.

1. The Rubin NVL72: Rack-Scale Supercomputing

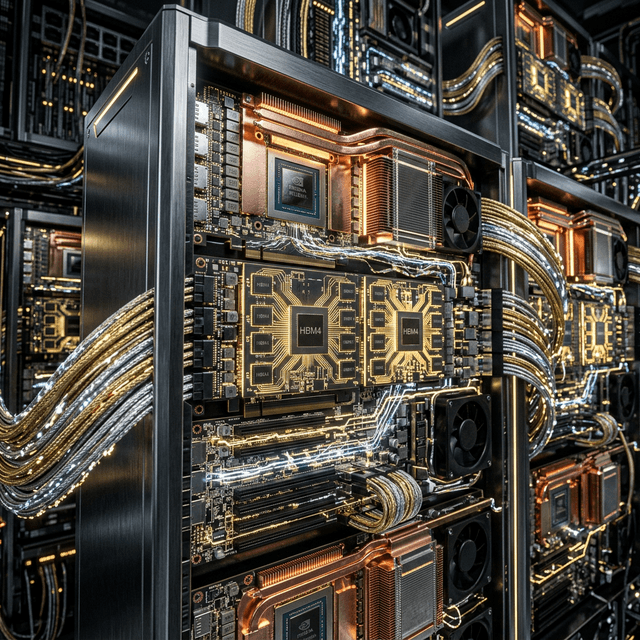

The centerpiece of the new architecture is the Rubin NVL72, a liquid-cooled rack system that behaves as a single massive GPU.

Core Components:

- 72 Rubin GPUs: Each equipped with the first generation of HBM4 memory.

- 36 Vera CPUs: Custom Arm-based processors, each featuring 88 "Olympus" cores optimized for data movement and agentic logic.

- NVLink 6 Switches: Providing a record-breaking 3.6 TB/s of bidirectional bandwidth per GPU.

The result is a system with 20.7 TB of HBM4 memory and an aggregate GPU-to-GPU bandwidth of 260 TB/s.
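The rack-level totals follow directly from the per-GPU figures listed above. The short Python sketch below reproduces that arithmetic; the constant and function names are illustrative choices rather than anything from Nvidia, and decimal units (1 TB = 1000 GB) are assumed.

```python
# Back-of-the-envelope check of the rack-level figures quoted in the text.
# All names are illustrative; decimal units (1 TB = 1000 GB) are assumed.

NUM_GPUS = 72                # Rubin GPUs per NVL72 rack
NVLINK_TBPS_PER_GPU = 3.6    # NVLink 6 bidirectional bandwidth per GPU, TB/s
RACK_HBM4_TB = 20.7          # total HBM4 quoted for the rack, TB


def aggregate_nvlink_tbps(num_gpus: int = NUM_GPUS,
                          per_gpu_tbps: float = NVLINK_TBPS_PER_GPU) -> float:
    """Sum of per-GPU NVLink bandwidth across the rack, in TB/s."""
    return num_gpus * per_gpu_tbps


def implied_hbm_per_gpu_gb(rack_tb: float = RACK_HBM4_TB,
                           num_gpus: int = NUM_GPUS) -> float:
    """HBM4 capacity per GPU implied by the rack total, in GB."""
    return rack_tb * 1000 / num_gpus


if __name__ == "__main__":
    print(f"Aggregate NVLink bandwidth: {aggregate_nvlink_tbps():.1f} TB/s")
    print(f"Implied HBM4 per GPU: {implied_hbm_per_gpu_gb():.1f} GB")
```

Under these assumptions, 72 GPUs at 3.6 TB/s each give roughly 259 TB/s, which rounds to the 260 TB/s quoted, and the 20.7 TB total works out to a little under 288 GB of HBM4 per GPU.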

graph TD

A[Vera Rubin NVL72 Rack] --> B[72x Rubin GPUs]

A --> C[36x Vera CPUs]

A --> D[NVLink 6 Fabric]

B --> B1[288GB HBM4 per GPU]

C --> C1[Olympus Arm Cores]

D --> D1[3.6 TB/s Bandwidth]

B1 --> E[Trillion-Parameter Real-time Inference]
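To put "trillion-parameter" in context against the rack's 20.7 TB of HBM4, the sketch below estimates how much memory a model's weights would occupy at 4-bit precision. It is a rough illustration only: it assumes exactly 4 bits per parameter and ignores quantization scale factors, the KV cache, and activations, all of which add to the real footprint. The function names are hypothetical.

```python
# Rough weight-memory estimate for large models at low precision.
# Assumption: exactly `bits_per_param` bits per weight; scale factors,
# KV cache, and activations are NOT counted. Names are illustrative.

RACK_HBM4_TB = 20.7  # rack-level HBM4 total quoted in the text, TB


def weight_footprint_tb(num_params: float, bits_per_param: float = 4.0) -> float:
    """Weight storage in decimal terabytes."""
    return num_params * bits_per_param / 8 / 1e12


def fraction_of_rack(num_params: float,
                     bits_per_param: float = 4.0,
                     rack_tb: float = RACK_HBM4_TB) -> float:
    """Share of the rack's HBM4 consumed by weights alone."""
    return weight_footprint_tb(num_params, bits_per_param) / rack_tb


if __name__ == "__main__":
    for params in (1e12, 20e12):
        tb = weight_footprint_tb(params)
        share = fraction_of_rack(params)
        print(f"{params / 1e12:.0f}T params at 4-bit: {tb:.1f} TB "
              f"({share:.1%} of rack HBM4)")
```

By this estimate, a one-trillion-parameter model needs about 0.5 TB for weights at 4-bit precision, a small share of the rack, while a 20-trillion-parameter model would take about 10 TB, or nearly half of it, before any working memory is counted.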

2. The 10x Efficiency Mandate

Nvidia’s primary goal with Vera Rubin is to sharply cut the cost of AI reasoning. The Blackwell platform has already reduced inference costs by 4x to 10x compared with the previous Hopper generation. Vera Rubin aims to deliver a similar reduction relative to Blackwell.

Performance Comparisons:

- Inference Speed: The Rubin GPU is 5x faster than Blackwell on inference workloads, delivering 50 petaFLOPS of NVFP4 compute.

- Training Efficiency: Rubin can train Mixture-of-Experts (MoE) models using just one-fourth the number of GPUs required by Blackwell.

- Memory Bandwidth: HBM4 provides 22 TB/s of bandwidth per GPU, a 2.8x increase over Blackwell’s HBM3e.
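The compute and training ratios above translate into simple planning arithmetic. The Python sketch below is a minimal illustration: the Blackwell baseline is derived from the stated 5x figure rather than taken from a spec sheet, the 10x cost reduction is treated as a target and not a measured result, and the cluster size and token price in the usage example are hypothetical.

```python
import math

# Planning arithmetic based on the ratios quoted in the text.
# Names are illustrative; the 10x cost figure is a stated target.

RUBIN_NVFP4_PFLOPS = 50.0    # per-GPU NVFP4 compute quoted for Rubin
INFERENCE_SPEEDUP = 5.0      # Rubin vs. Blackwell, as stated
MOE_GPU_FRACTION = 0.25      # Rubin needs one-fourth the GPUs for MoE training
TARGET_COST_REDUCTION = 10.0  # targeted inference cost reduction


def implied_blackwell_pflops() -> float:
    """Blackwell NVFP4 throughput implied by the 5x claim, in petaFLOPS."""
    return RUBIN_NVFP4_PFLOPS / INFERENCE_SPEEDUP


def rubin_gpus_for_moe(blackwell_gpus: int) -> int:
    """Rubin GPUs needed for an MoE training job sized in Blackwell GPUs."""
    return math.ceil(blackwell_gpus * MOE_GPU_FRACTION)


def projected_cost(baseline_cost: float,
                   reduction: float = TARGET_COST_REDUCTION) -> float:
    """Cost after applying the targeted reduction factor."""
    return baseline_cost / reduction


if __name__ == "__main__":
    # Hypothetical inputs: a 4096-GPU Blackwell job and $2.00 per million tokens.
    print(f"Implied Blackwell NVFP4: {implied_blackwell_pflops():.0f} PFLOPS")
    print(f"Rubin GPUs for a 4096-GPU Blackwell job: {rubin_gpus_for_moe(4096)}")
    print(f"Projected cost per million tokens: ${projected_cost(2.00):.2f}")
```

With these inputs, the 5x claim implies a Blackwell baseline of 10 petaFLOPS, a 4096-GPU Blackwell training job would need 1024 Rubin GPUs, and a hypothetical $2.00 per million tokens would fall to $0.20 if the 10x target were met.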

Nvidia CEO Jensen Huang noted that this efficiency is necessary to enable "Agentic Clouds"—environments where thousands of specialized AI agents can run concurrently for pennies on the dollar.

3. NemoClaw: The Open-Source Agent Layer

Alongside the hardware, Nvidia is releasing NemoClaw, an open-source platform for orchestrating AI agents in the workplace.

Importantly, NemoClaw is hardware-agnostic: although it is optimized for Rubin, it can run on any infrastructure. This avoids hardware lock-in while encouraging enterprises to build their agentic workflows on Nvidia’s software ecosystem, regardless of which chips they currently own.

Key NemoClaw Features:

- Nemotron-3 Nano models: Highly efficient "backbone" models designed to run on-device or at the edge.

- Built-in Privacy: Localized data handling to ensure corporate secrets never leave the enterprise firewall.

- Agent Interoperability: Native support for the OpenClaw protocol and Moltbook-style social interactions.

4. The End of the GPU Decommissioning Cycle

The industry is currently facing a challenge: the first wave of dense GPU servers (H100s) purchased in 2023-2024 is reaching the end of its useful life.

Vera Rubin is designed as a drop-in replacement for existing Blackwell-ready data centers, using the same power and cooling footprints. This lets hyperscalers such as AWS, Google Cloud, and Azure upgrade to Rubin without building new facilities, smoothing the path for the next generation of LLMs.

5. Conclusion: Hardware is the Moat

In early 2025, critics argued that AI was becoming commoditized and that the software layer would erode Nvidia’s dominance. The Vera Rubin announcement proves the opposite.

By integrating memory (HBM4), compute (Rubin GPU), data movement (Vera CPU), and communication (NVLink 6) into a single, cohesive rack-scale engine, Nvidia has created a moat that is measured in physics and thermal efficiency.

As we move toward 20-trillion-parameter systems in 2027, the Vera Rubin platform is not just an upgrade; it is the essential life-support system for the next generation of artificial minds.
