Google I/O's AI-First Run-Up Shows Developer Platforms Are Becoming Agent Platforms

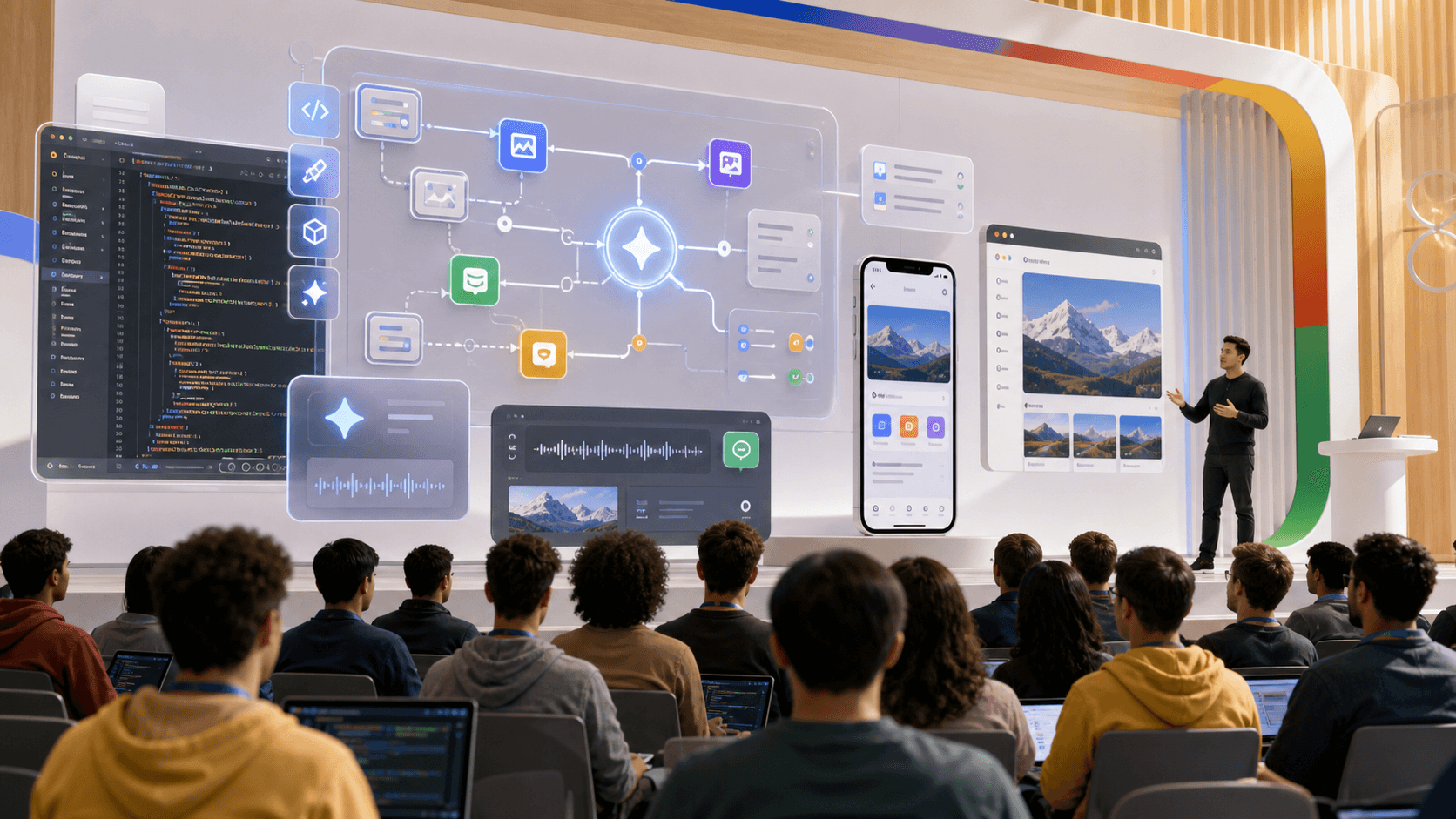

Google's Android Show and I/O previews point to a developer platform shift where Gemini-powered agents become product infrastructure.

Google I/O has always been a developer conference, but the run-up to the 2026 event makes the developer part feel different. The platform is no longer just Android, Chrome, Cloud, and APIs. It is increasingly an agentic layer that helps assemble work across them.

Google has confirmed I/O 2026 for May 19 and 20, while its Android Show preview and I/O materials point toward Gemini-powered, agentic features across Android and developer workflows. TechCrunch reported on May 12 that Google showed Gemini Intelligence features capable of handling multistep actions, while Google's own I/O materials emphasize AI-powered developer experiences and company-wide AI updates.

Sources: Google I/O 2026 save the date, Google on the I/O AI-powered puzzle, TechCrunch on Android Show AI features, Tom's Guide I/O preview, Android Authority schedule analysis.

The architecture in one picture

    graph TD
        A[Google I/O 2026] --> B[Gemini developer tools]
        A --> C[Android agentic features]
        A --> D[Chrome and Cloud integrations]
        B --> E[Natural-language app assembly]
        C --> F[Multistep user actions]
        D --> G[Platform distribution]
        E --> H[Agent platform shift]
        F --> H
        G --> H

| Developer platform era | Main interface | New risk |
|---|---|---|
| API era | Code calls platform services | Integration complexity |
| App-store era | Apps reach users through distribution | Policy and review bottlenecks |
| Copilot era | AI assists development | Code review debt |
| Agent era | AI acts across apps and context | Permission, trace, and recovery design |

The keynote is becoming an operating-system story

The old developer-platform story was about SDKs, APIs, devices, and distribution. Those still matter, but AI changes the center of gravity. If Gemini can reason across apps, generate widgets, summarize context, and automate multistep actions, the platform is no longer only where developers ship. It becomes part of how software behaves after it ships.

The operating pattern underneath the headline

The useful way to read this story is not as a single announcement. It is a pressure test for how AI moves from a demo into an institution. That shift sounds abstract until it lands inside an actual workflow. Then the question becomes less glamorous and much more important: who owns the system, who pays for it, who audits it, who can stop it, and who knows when it is wrong.

For Google's AI-first developer platform, the visible headline is only the first layer. The deeper layer is agent permissions and product assembly workflows. That is the dependency serious teams should track. AI is no longer just an application that employees open in a tab. It is becoming a way to reorganize labor, capital, infrastructure, software delivery, robotics, and public policy. When a technology reaches that point, the deployment surface becomes as important as the model.

That is why this moment is awkward for executives. Most organizations learned to buy software by asking whether the tool improved an existing task. AI forces a different question: does the organization itself need to change before the tool can deliver value? A developer platform strategist can pilot a model in a week, but turning that model into durable leverage requires budget rules, procurement discipline, risk ownership, data boundaries, review paths, and a vocabulary for deciding where automation belongs.

The hidden risk is shipping agentic features before developers can reason about their contracts. It is tempting to treat that as a cultural problem or a communications problem. It is more than that. It is an architecture problem. Systems that lack clear boundaries eventually create trust failures, even when the underlying model is capable. Employees distrust invisible monitoring. Communities distrust opaque data-center deals. Developers distrust AI tools that create review debt. Customers distrust agents that cannot explain what changed. Regulators distrust compliance paperwork that does not connect to product behavior.

Why May 2026 feels different

The AI market has passed the stage where every new capability feels magical. That does not mean the technology is less important. It means the audience has become harder to impress. Buyers have seen copilots. Workers have seen productivity experiments. Developers have seen agent demos. Regulators have seen policy pledges. Infrastructure planners have seen data-center demand forecasts. The bar has moved from possibility to proof.

Proof is harder than a launch video. It asks whether the system works after onboarding, after a policy exception, after a security review, after a missed deadline, after the model changes, after a new compliance rule, after a customer complains, and after the first incident. That is the difference between a technology trend and an operating model.

The companies that understand this will not necessarily move slowly. They will move deliberately. They will start with narrower workflows, clearer owners, better evidence, and cleaner rollback. They will treat AI as a capability that has to be placed, not a magic layer to smear across every process. They will be willing to say no to impressive demos that do not have an accountability surface.

The companies that miss it will keep confusing adoption with transformation. They will count seats, prompts, generated files, and model calls. Those numbers can be useful, but they do not prove much by themselves. The better measurements are less flashy: error rate after review, time saved after correction, percentage of workflows with named owners, reduction in queue backlog, quality of audit trails, employee trust, power-delivery certainty, and the ability to explain why an AI-assisted decision happened.
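A few of those measurements reduce to simple arithmetic once review outcomes are recorded. The sketch below is one possible way to compute three of them; the `ReviewedTask` schema, its field names, and the rule that a workflow counts as owned only when every task names an owner are all assumptions made for illustration, not anything Google or the cited sources describe.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ReviewedTask:
    """One AI-assisted task after human review (hypothetical schema)."""
    workflow: str
    owner: Optional[str]            # named owner of the workflow, if any
    baseline_minutes: float         # estimated time without AI assistance
    ai_minutes: float               # time spent producing the AI-assisted draft
    correction_minutes: float       # time the reviewer spent fixing the draft
    error_found_after_review: bool  # a defect surfaced after sign-off


def error_rate_after_review(tasks: List[ReviewedTask]) -> float:
    """Share of reviewed tasks where a defect still surfaced later."""
    if not tasks:
        return 0.0
    return sum(t.error_found_after_review for t in tasks) / len(tasks)


def net_minutes_saved(tasks: List[ReviewedTask]) -> float:
    """Time saved after subtracting correction effort, summed over tasks."""
    return sum(
        t.baseline_minutes - (t.ai_minutes + t.correction_minutes)
        for t in tasks
    )


def share_of_workflows_with_owner(tasks: List[ReviewedTask]) -> float:
    """Fraction of distinct workflows in which every task names an owner."""
    owned: Dict[str, bool] = {}
    for t in tasks:
        owned[t.workflow] = owned.get(t.workflow, True) and t.owner is not None
    if not owned:
        return 0.0
    return sum(owned.values()) / len(owned)
```

Note that `net_minutes_saved` can go negative: a draft that takes longer to correct than the task would have taken by hand counts against the total, which is the point of measuring after correction rather than before.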

The questions leaders should ask now

The practical questions are simple, but they are not easy.

- What is the first workflow where this actually changes behavior?

- Which human review step becomes more important rather than less important?

- Which dependency becomes more concentrated if adoption succeeds?

- What evidence would prove the system is working after the first month?

- What failure would make the organization pause expansion?

- Which team has the authority to say the system is not ready?

These questions matter because AI changes the boundary between tool and institution. A spreadsheet changed office work, but it did not usually act on behalf of the company. A traditional SaaS tool automated defined steps, but it did not usually reinterpret the task. AI systems can summarize, infer, recommend, generate, plan, and in some cases act. That range is useful precisely because it is dangerous to leave unmanaged.

What builders should copy

Builders should copy the discipline, not the hype. The lesson is to design AI systems around reviewable work. Make inputs visible. Make sources inspectable. Make confidence and uncertainty part of the interface. Preserve the trace. Let humans correct the system without fighting it. Keep permissions narrow until the system earns broader scope. Measure outcomes after review, not raw output before review.

For product teams, that means the boring features are the differentiators. Audit logs, access controls, version history, exportable evidence, permission boundaries, policy configuration, and cost attribution will decide which AI systems survive enterprise deployment. The model may open the door, but operations decide who stays in the building.

For leaders, the lesson is similar. AI strategy is not a deck about disruption. It is a portfolio of specific operating changes with named owners. Each one should state what task changes, what risk changes, what metric changes, and what human judgment remains essential. Without that specificity, the organization is not transforming. It is rehearsing a talking point.

Agentic features create new developer contracts

Developers need to know what an agent can see, what it can change, how permissions work, how actions are logged, and how users can recover from mistakes. A model-powered feature is not just a UI enhancement. It is a contract between the app, the platform, the user, and the AI layer.
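To make that contract concrete, here is a minimal sketch of what it could look like in code. It is not an Android or Gemini API: `AgentSession`, `LoggedAction`, `PermissionDenied`, and the scope names are hypothetical, chosen only to show the four parts of the contract (what the agent can read, what it can change, how actions are logged, and how a user undoes one) in a single place.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional, Set


class PermissionDenied(Exception):
    """Raised when the agent touches a scope it was not granted."""


@dataclass
class LoggedAction:
    name: str
    scope: str
    detail: str                 # human-readable description for the user
    undo: Callable[[], None]    # how to reverse this action
    undone: bool = False


@dataclass
class AgentSession:
    readable: Set[str]          # scopes the agent can see
    writable: Set[str]          # scopes the agent can change
    log: List[LoggedAction] = field(default_factory=list)

    def read(self, scope: str, store: Dict[str, Any]) -> Any:
        if scope not in self.readable:
            raise PermissionDenied(f"read not granted for scope {scope!r}")
        return store[scope]

    def act(self, name: str, scope: str, detail: str,
            do: Callable[[], None], undo: Callable[[], None]) -> None:
        # Check the grant before any side effect happens.
        if scope not in self.writable:
            raise PermissionDenied(f"write not granted for scope {scope!r}")
        do()
        # Log only actions that actually ran, together with their undo.
        self.log.append(LoggedAction(name, scope, detail, undo))

    def undo_last(self) -> Optional[LoggedAction]:
        """Reverse the most recent action that has not been undone yet."""
        for action in reversed(self.log):
            if not action.undone:
                action.undo()
                action.undone = True
                return action
        return None


if __name__ == "__main__":
    store = {"calendar": ["standup"], "contacts": ["ana"]}
    session = AgentSession(readable={"calendar", "contacts"},
                           writable={"calendar"})
    session.act("add_event", "calendar", "Add 'review' to calendar",
                do=lambda: store["calendar"].append("review"),
                undo=lambda: store["calendar"].remove("review"))
    session.undo_last()
    print(store["calendar"])
```

The design choice worth noticing is that an action cannot be recorded without an `undo` callable. Recovery is part of the action's definition rather than something added later.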

Vibe-coded widgets are a preview of product assembly

The phrase sounds casual, but the implication is serious. If users and developers can describe interface components and workflow behaviors in natural language, software production becomes more compositional. That can lower barriers, but it also increases the importance of review, testing, accessibility, and security.
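One way to picture that review step is a gate that checks a generated component before it is accepted. The sketch below assumes a generated widget arrives as a plain dictionary; the field names (`type`, `label`, `action`, `accessibility_label`) and the allow-lists are invented for illustration and do not reflect any published widget format.

```python
from typing import Any, Dict, List

# Hypothetical allow-lists: anything outside them needs human review.
ALLOWED_TYPES = {"button", "text", "image"}
ALLOWED_ACTIONS = {"none", "open_screen"}


def _has_text(value: Any) -> bool:
    """True only for a string with non-whitespace content."""
    return isinstance(value, str) and bool(value.strip())


def review_widget_spec(spec: Dict[str, Any]) -> List[str]:
    """Return the issues that should block automatic acceptance."""
    issues: List[str] = []

    widget_type = spec.get("type")
    if widget_type not in ALLOWED_TYPES:
        issues.append(f"unknown widget type: {widget_type!r}")

    # Security: a generated widget may only trigger pre-approved actions.
    action = spec.get("action", "none")
    if action not in ALLOWED_ACTIONS:
        issues.append(f"action not allowed without review: {action!r}")

    # Accessibility: buttons and images need a label for screen readers.
    if widget_type in {"button", "image"} and not _has_text(
        spec.get("accessibility_label")
    ):
        issues.append("missing accessibility_label")

    # Buttons and text widgets need visible text.
    if widget_type in {"button", "text"} and not _has_text(spec.get("label")):
        issues.append("missing visible label")

    return issues
```

An empty list means the spec passed these particular checks, not that it is safe. A gate like this narrows what reaches a human reviewer; it does not replace testing.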

Google's advantage is distribution, not only model quality

Google can place AI across Android, Chrome, Workspace, Cloud, Search, and developer tools. That distribution is powerful because agents become more useful when they live near user context and platform permissions. The challenge is coherence. A scattered set of AI features will not feel like a platform. A consistent permission and action model might.

What to watch next

The next signal to watch is not whether the announcement gets another news cycle. It is whether Google can turn the idea into a repeatable operating pattern. That means clear ownership, visible evidence, realistic economics, and a review layer that people actually use.

The market keeps rewarding the companies that tell the biggest AI stories, but the next phase will be less forgiving. Customers, employees, developers, regulators, and local communities are all learning to ask better questions. Does the system improve the work after review? Does it preserve enough evidence to inspect? Does it shift cost onto people who did not agree to pay? Does it create new concentration risk? Does it leave humans with better leverage or just more cleanup?

Those questions are healthy. They do not slow AI down in the long run. They make it survivable.

For builders, the assignment is to make the powerful thing legible. For executives, the assignment is to stop treating AI as a universal answer and start treating it as a set of specific operating changes. For policymakers, the assignment is to regulate the decision surface and the infrastructure dependency rather than chase every model headline. For workers and communities, the assignment is to demand clarity before the machinery becomes invisible.

AI is entering the phase where the surrounding system is the product. The winners will not be the ones with the most dramatic promise. They will be the ones that can show where intelligence enters the workflow, what it changes, who remains accountable, and why the result deserves trust.