Agno vs LangChain vs CrewAI vs Strands Agents: Choosing Your Agent Runtime in 2026

A deep comparative analysis of the leading Python agent frameworks—Agno, LangGraph, CrewAI, and Strands—for developers building production-grade autonomous systems.

If you spent any time in the Python AI ecosystem during the early months of 2024, the "agent" landscape felt like a collection of enthusiastic sketches. Developers were essentially writing infinite while True loops around LLM calls, manually parsing JSON from strings, and praying their retry logic wouldn't bankrupt them. Fast forward to April 2026, and the industry has undergone a radical consolidation. Building an AI agent is no longer a question of how to parse a tool call; it is a question of architecture, state management, and governance.

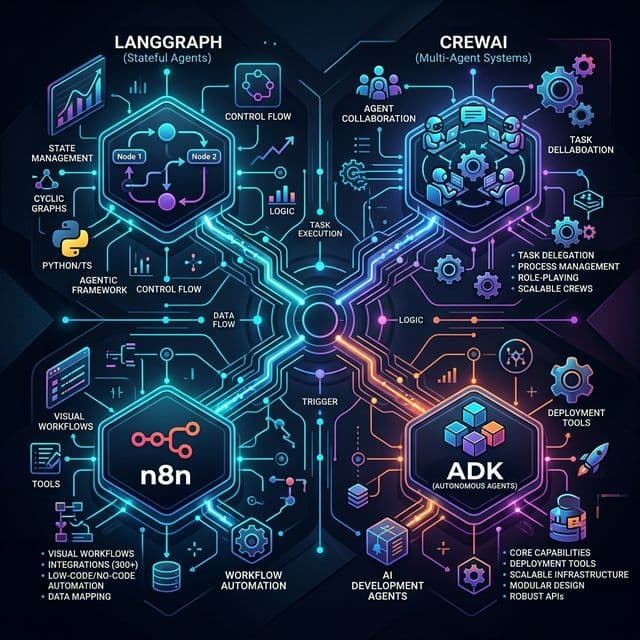

We have moved past the era of "wrapper libraries" into the era of Agent Runtimes. The tools we use today—Agno, LangGraph, CrewAI, and the newcomer Strands—represent fundamentally different philosophies on how an autonomous system should think, remember, and interact with the world. Choosing between them isn't about looking at a feature table; it’s about deciding whether your application is a state machine, a corporate hierarchy, a production control plane, or a lean, model-governed script.

The Architectural Divide: From Loops to Runtimes

The fundamental challenge of agentic AI has always been the "stochastic transition." In a traditional software system, if A happens, you execute B. In an agentic system, if A happens, the LLM decides whether to do B, C, or hallucinate D.
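One way to picture the stochastic transition is a dispatcher that treats the model's choice as untrusted input. The sketch below is illustrative only: `choose_action` is a hypothetical stand-in for an LLM call, and the action names B, C, and D simply mirror the paragraph above.

```python
import random
from typing import Callable, Dict

# Deterministic handlers the application actually implements
HANDLERS: Dict[str, Callable[[], str]] = {
    "B": lambda: "ran B",
    "C": lambda: "ran C",
}


def choose_action(rng: random.Random) -> str:
    """Hypothetical stand-in for an LLM: it may name an action that does not exist."""
    return rng.choice(["B", "C", "D"])


def dispatch(action: str) -> str:
    """Run a known action; reject anything the model made up."""
    handler = HANDLERS.get(action)
    if handler is None:
        return f"rejected unknown action {action!r}"
    return handler()
```

Every framework discussed here is, in one way or another, a more elaborate answer to what `dispatch` should do when the model picks "D".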

The four frameworks we are analyzing today handle this uncertainty through different architectural patterns. LangGraph (the evolution of LangChain) treats the agent as a state machine. It forces you to define every possible transition as an edge in a directed graph. CrewAI views the problem through a sociological lens, treating agents as specialized workers in a managed crew. Agno (the framework formerly known as Phidata) builds a declarative control plane around the model. And Strands Agents, the latest major entrant backed by the AWS ecosystem, argues that we should stop over-engineering the logic and let the model drive itself through a minimalist, tool-heavy SDK.

For a developer in 2026, this means the first step isn't writing code—it's mapping the "shape" of your agentic problem to the right framework's mental model.

Agno: The Production Control Plane Philosophy

Agno has carved out a unique position by focusing on what it calls "agentic software." While other frameworks focus on the thought process of the agent, Agno focuses on the stack required to run that thought process in a multi-tenant, production environment.

The core idea behind Agno is that an agent is a component, not just a function. When you define an agent in Agno, you aren't just giving it a system prompt; you are configuring its memory (often backed by PostgreSQL or S3), its knowledge base (built-in RAG), and—crucially—its guardrails.

```python
from agno.agent import Agent
from agno.models.openai import OpenAIChat
from agno.tools.duckduckgo import DuckDuckGoTools
from agno.storage.agent.postgres import PostgresAgentStorage

# Agno treats storage and governance as first-class citizens
storage = PostgresAgentStorage(
    table_name="research_sessions",
    db_url="postgresql://user:pass@localhost:5432/db",
)

researcher = Agent(
    name="enterprise_researcher",
    model=OpenAIChat(id="gpt-4.1-mini"),
    tools=[DuckDuckGoTools()],
    storage=storage,
    add_history_to_messages=True,
    num_history_responses=5,
    description="You are a research agent for a Fortune 500 firm. Privacy and accuracy are paramount.",
    markdown=True,
)

researcher.print_response("What are the leading indicators for silicon shortages in Q3 2026?")
```

In the example above, notice that the Agent object is carrying the complexity of the database connection and the history management logic. For enterprise teams, this is the "Agno advantage." It reduces the "glue code" required to make an agent useful beyond a single session. If you are building a persistent assistant that needs to remember a user's preferences across weeks of interaction while adhering to PII-detection guardrails, Agno’s declarative approach is often the shortest path.

LangGraph and the Rise of Stateful Graphs

If Agno is about the "stack," LangGraph is about the "flow." As the successor to the original LangChain agent patterns, LangGraph was built to solve the "black box" problem of autonomous agents. In earlier iterations, an agent was often a single AgentExecutor that you kicked off and hoped for the best. Debugging why it went into a loop was nearly impossible.

LangGraph solves this by modeling agents as graphs. Every action is a node, and every decision is a conditional edge. This gives developers "surgical control" over the agent's behavior. You can explicitly say: "If the agent fails to find a result in the search tool three times, break the loop and ask the human for help."

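```python
from typing import List, TypedDict

from langgraph.graph import END, StateGraph


class AgentState(TypedDict):
    messages: List[dict]
    iteration_count: int


def call_model(state: AgentState):
    # Placeholder: invoke the LLM here, then return the updated state fields
    return {"iteration_count": state["iteration_count"] + 1}


def run_tools(state: AgentState):
    # Placeholder: execute the tool calls the model requested
    return {"messages": state["messages"]}


def ask_human(state: AgentState):
    # Placeholder: hand the conversation over to a human reviewer
    return {"messages": state["messages"]}


def should_continue(state: AgentState):
    # After three attempts, stop looping and escalate to a human
    if state["iteration_count"] >= 3:
        return "ask_human"
    return "tools"


# The graph architecture allows for loops and human-in-the-loop checkpoints
workflow = StateGraph(AgentState)
workflow.add_node("agent", call_model)
workflow.add_node("tools_node", run_tools)
workflow.add_node("human_interaction", ask_human)
workflow.set_entry_point("agent")
workflow.add_conditional_edges("agent", should_continue, {
    "tools": "tools_node",
    "ask_human": "human_interaction",
})
workflow.add_edge("tools_node", "agent")
workflow.add_edge("human_interaction", END)
app = workflow.compile()
```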

The tradeoff for this control is a steeper learning curve and significantly more boilerplate. LangGraph is not a framework for "quick and dirty" scripts. It is a framework for engineering durable, complex systems that require fine-grained state management and checkpointing. In 2026, it remains the standard for high-stakes agents where "hallucinated orchestration" is not an option.

CrewAI and the Management Hierarchy

CrewAI operates on a different plane of abstraction entirely. While LangGraph is for "agent engineering," CrewAI is for "agent management." It assumes that your problem is complex enough that a single agent isn't the right answer. Instead, you need a team.

CrewAI introduces the concepts of Agents (with backstories, goals, and roles), Tasks (with specific expected outputs), and Crews (the container that orchestrates the execution).

```python
from crewai import Agent, Task, Crew, Process

# Role-based design is the core of CrewAI
researcher = Agent(
    role='Market Analyst',
    goal='Identify emerging trends in edge computing',
    backstory='Ex-IDC analyst with a knack for spotting hardware shifts.',
    verbose=True
)

writer = Agent(
    role='Tech Journalist',
    goal='Synthesize research into a compelling narrative',
    backstory='Ten years at Wired, focused on making complex tech accessible.'
)

# Tasks define the 'what', while Agents define the 'how'.
# Each Task also declares the output it is expected to produce.
task1 = Task(
    description='Research 2026 edge AI chipsets',
    expected_output='A bullet list of notable chipsets with a one-line summary each',
    agent=researcher
)

task2 = Task(
    description='Write an editorial on the findings',
    expected_output='A short editorial grounded in the research notes',
    agent=writer
)

# The Crew manages the delegation strategy (Sequential vs Hierarchical)
crew = Crew(
    agents=[researcher, writer],
    tasks=[task1, task2],
    process=Process.sequential
)

result = crew.kickoff()
```

The brilliance of CrewAI is how it handles inter-agent communication. In the example above, the "Writer" doesn't just receive raw text; it receives the output of the "Researcher" and can "ask" questions if the research is insufficient. This mimics human organizational behavior. For content pipelines, automated research reports, or complex analysis tasks that move from "data gathering" to "synthesis," CrewAI is the most intuitive framework on the market.

Strands Agents: The Minimalist, Model-First Challenger

If the other three frameworks are expanding in complexity, Strands Agents (launched with significant momentum from the AWS Bedrock ecosystem) is moving in the opposite direction. The philosophy here is "model-driven design."

Strands argues that as models become smarter, we should spend less time defining graphs or hierarchies and more time giving the model the right tools and a clear objective. It is designed to be the "Pythonic" choice—the one that feels like a native part of the language rather than a heavy library.

The most significant technical contribution of Strands in 2026 is its native support for the Model Context Protocol (MCP). This allows a Strands agent to instantly connect to thousands of pre-standardized tools without writing a single line of integration code.

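```python
from mcp.client.streamable_http import streamablehttp_client
from strands import Agent
from strands.tools.mcp import MCPClient

# Strands focuses on 'model-driven' simplicity and MCP integration.
# MCPClient takes a factory that opens the transport to the MCP server.
search_client = MCPClient(lambda: streamablehttp_client("https://mcp.shshell.com/search"))

# The client has to stay open while the agent uses its tools
with search_client:
    agent = Agent(
        tools=search_client.list_tools_sync(),
        model="anthropic.claude-3-5-sonnet",
    )

    # Minimal boilerplate: the model handles the orchestration
    response = agent("Analyze the latest S&P 500 earnings calls and summarize the sentiment.")
```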

Strands is the framework for developers who are tired of "framework overhead." If you have a task that a smart model like Claude 3.5 or GPT-4o can reason through on its own, adding a graph or a crew layer is just adding latency. Strands provides the thinnest possible harness.

Vector Integration and the RAG Conundrum

By 2026, we have moved past "simple RAG." The challenge is no longer just connecting a PDF to an LLM; it is about managing the retrieval lifecycle. Agno has taken a lead here by making "Knowledge" a first-class citizen of the agent object. Instead of writing a separate retrieval chain, you attach a KnowledgeBase directly to the agent. It handles the chunking, embedding, and querying behind a clean API.

```python
from agno.agent import Agent
from agno.knowledge.pdf_url import PDFUrlKnowledgeBase
from agno.vectordb.pgvector import PgVector

# Agno integrates the entire RAG stack into the agent configuration
knowledge_base = PDFUrlKnowledgeBase(
    urls=["https://agno-public.s3.amazonaws.com/recipes/RA_Manual.pdf"],
    vector_db=PgVector(table_name="recipes", db_url="postgresql://user:pass@localhost:5432/db"),
)

# Chunk, embed, and store the PDF once before the agent searches it
knowledge_base.load(recreate=False)

agent = Agent(
    knowledge=knowledge_base,
    search_knowledge=True,
    read_chat_history=True,
)
```

In contrast, LangGraph treats RAG as a set of nodes in your graph. This is more verbose, but it allows for "Agentic RAG," where the agent can decide to rewrite the query if the first retrieval fails, or synthesize information from multiple disparate vector stores before answering. This "retrieval-feedback loop" is a hallmark of sophisticated LangGraph implementations.
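The retrieval-feedback loop can be shown without any framework at all. The following is a minimal sketch under loud assumptions: `DOCS` is a toy corpus standing in for a vector store, `retrieve` is a naive keyword match, and `rewrite` is a hypothetical helper doing what an LLM-driven query rewriter would do in a real graph node.

```python
from typing import List

# Toy corpus standing in for a vector store
DOCS = [
    "Refund policy: refunds are issued within 14 days.",
    "Shipping: orders ship in two business days.",
]


def retrieve(query: str) -> List[str]:
    """Naive keyword match; a real system would query a vector database."""
    terms = query.lower().split()
    return [d for d in DOCS if any(t in d.lower() for t in terms)]


def rewrite(query: str) -> str:
    """Hypothetical query rewriter; in practice a model would propose this."""
    synonyms = {"reimbursement": "refund"}
    return " ".join(synonyms.get(w, w) for w in query.lower().split())


def agentic_retrieve(query: str, max_attempts: int = 2) -> List[str]:
    """Retrieve, and if nothing comes back, rewrite the query and try again."""
    for _ in range(max_attempts):
        hits = retrieve(query)
        if hits:
            return hits
        query = rewrite(query)
    return []
```

In a graph-based implementation, `retrieve` and `rewrite` would each become a node, and the `if hits` check would become the conditional edge between them.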

Multi-Agent Coordination: Supervisors vs. Peers

As agentic systems grow, the "how" of coordination becomes the primary bottleneck. There are two dominant patterns in 2026: the Supervisor pattern and the Peer-to-Peer (P2P) pattern.

CrewAI is the champion of the Supervisor pattern. In its hierarchical process mode, one agent acts as a manager, delegating tasks to subordinates, reviewing their work, and sending it back for revisions if it doesn't meet the "expected output." This prevents the "groupthink" problem where agents agree with each other's mistakes.
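Stripped of any framework, the supervisor loop is small. This sketch is not CrewAI's implementation; `researcher`, `review`, and `supervise` are hypothetical names, and the "expected output" rule (the draft must cite a source) is invented for illustration.

```python
from typing import Callable, Optional


def researcher(task: str, feedback: Optional[str] = None) -> str:
    """Hypothetical worker; it adds a source only after being asked for one."""
    draft = f"Findings on {task}"
    if feedback:
        draft += " (source: internal report)"
    return draft


def review(output: str) -> Optional[str]:
    """Manager check: return feedback if the output misses the expected form."""
    return None if "source:" in output else "Cite at least one source."


def supervise(task: str, worker: Callable[..., str], max_rounds: int = 3) -> str:
    """Delegate, review, and send the work back until it passes or rounds run out."""
    feedback = None
    for _ in range(max_rounds):
        output = worker(task, feedback)
        feedback = review(output)
        if feedback is None:
            return output
    raise RuntimeError("worker never met the expected output")
```

The `max_rounds` cap matters in practice: without it, a manager and a worker that cannot agree will burn tokens indefinitely.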

LangGraph allows for both, but it excels at P2P or "Swarm" behaviors. In a LangGraph swarm, agents pass the state directly to one another without a central manager. This is highly efficient for workflows where each step depends directly on the previous one's output, such as a software development agent passing a drafted function to a specialized "Test Generator" agent.
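A peer-to-peer handoff can be sketched as agents that return both the updated state and the name of whoever goes next. The names here (`coder`, `generate_tests`, `run_swarm`) are hypothetical and the agents are hard-coded stubs; this illustrates the pattern rather than LangGraph's swarm API.

```python
from typing import Callable, Dict, Optional, Tuple

State = Dict[str, str]
# Each agent returns the updated state and the next agent's name (None ends the run)
AgentFn = Callable[[State], Tuple[State, Optional[str]]]


def coder(state: State) -> Tuple[State, Optional[str]]:
    """Stub agent: drafts a function, then hands off to the test generator."""
    return {**state, "code": "def add(a, b):\n    return a + b"}, "test_generator"


def generate_tests(state: State) -> Tuple[State, Optional[str]]:
    """Stub agent: writes a test for the drafted code and ends the run."""
    return {**state, "tests": "assert add(1, 2) == 3"}, None


AGENTS: Dict[str, AgentFn] = {"coder": coder, "test_generator": generate_tests}


def run_swarm(start: str, state: State, max_hops: int = 10) -> State:
    """Follow handoffs from agent to agent with no central manager."""
    current: Optional[str] = start
    hops = 0
    while current is not None:
        if hops >= max_hops:
            raise RuntimeError("handoff limit reached")
        state, current = AGENTS[current](state)
        hops += 1
    return state
```

Calling `run_swarm("coder", {"spec": "add two numbers"})` walks coder, then test generator, and returns a state holding the spec, the code, and the tests.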

Memory Systems: Beyond the Context Window

In early 2024, memory was just "appending the last 5 messages to the prompt." In 2026, memory is a multi-layered infrastructure. CrewAI has introduced a sophisticated tri-layered memory system:

- Short-term Memory: Shared across all agents in a single execution.

- Long-term Memory: Stored in a local database to remember patterns across different "kickoffs."

- Entity Memory: A specialized store that tracks specific subjects (e.g., a specific customer's preferences) across the entire system.
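The three layers listed above could be modeled roughly as follows. This is an illustrative data structure, not CrewAI's actual classes; `CrewMemory` and its method names are assumptions made for the sketch.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CrewMemory:
    """Illustrative three-layer store; not CrewAI's real implementation."""
    short_term: List[str] = field(default_factory=list)  # shared scratchpad for one run
    long_term: List[str] = field(default_factory=list)   # lessons kept across runs
    entities: Dict[str, Dict[str, str]] = field(default_factory=dict)  # facts per subject

    def remember(self, note: str) -> None:
        """Add a note that all agents can see during the current run."""
        self.short_term.append(note)

    def remember_entity(self, name: str, key: str, value: str) -> None:
        """Record a fact about a specific subject, such as a customer."""
        self.entities.setdefault(name, {})[key] = value

    def end_run(self, lesson: str) -> None:
        """Promote one lesson to long-term memory and reset the scratchpad."""
        self.long_term.append(lesson)
        self.short_term.clear()
```

The useful property is the different lifetimes: the scratchpad is wiped at the end of each run, while lessons and entity facts persist.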

Agno focuses on "Session Memory," where the goal is to make the agent feel like it has been talking to you for years. It uses a structured storage layer that allows the agent to query its own past "summaries" of conversations, preventing the context window from becoming a cluttered mess of old logs.
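The idea of querying summaries instead of raw logs can be sketched in a few lines. This is not Agno's implementation; `summarize` is a hypothetical placeholder for a model call, and `build_context` is an assumed helper name.

```python
from typing import List


def summarize(messages: List[str]) -> str:
    """Hypothetical summarizer; a real one would call a model."""
    return f"{len(messages)} earlier messages about: " + "; ".join(m[:20] for m in messages)


def build_context(history: List[str], keep_last: int = 3) -> List[str]:
    """Keep the newest messages verbatim and compress everything older."""
    if keep_last < 1:
        raise ValueError("keep_last must be at least 1")
    if len(history) <= keep_last:
        return list(history)
    older, recent = history[:-keep_last], history[-keep_last:]
    return [summarize(older)] + recent
```

With five messages and `keep_last=2`, the prompt receives one summary line followed by the two most recent messages, so the context grows with the summary rather than with the full log.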

Case Study: The Autonomous Content Factory (CrewAI)

Imagine a marketing department that needs to produce a daily industry newsletter. Using CrewAI, they deploy a "Content Crew" consisting of a Trend Scraper, a Fact Checker, a Narrative Stylist, and a Compliance Officer.

The Trend Scraper uses broad search tools to find viral topics. It passes its findings to the Fact Checker, which validates claims against trusted APIs (often using MCP tools). The Narrative Stylist then drafts the copy, which is finally reviewed by the Compliance Officer. Because CrewAI allows for "Task-based constraints," the Compliance Officer can be programmed to reject any draft that doesn't include a mandatory legal disclaimer, forcing the Narrative Stylist to regenerate. This loop happens autonomously, delivering a polished, safe newsletter every morning at 6:00 AM.

Case Study: The Enterprise Support Sentinel (Agno)

A global SaaS provider uses Agno to manage its first-tier technical support. Unlike a basic chatbot, this agent has access to the user's billing history (via Postgres storage), the internal documentation (via built-in PDF KnowledgeBase), and the engineering team's status page (via specialized tools).

When a user asks why their API key is failing, the Agno agent doesn't just guess. It queries the knowledge base for error codes, checks the user's billing status, and—if everything looks correct—uses its guardrail layer to mask the user's sensitive details before escalating a summary to a human Slack channel. The "Control Plane" philosophy of Agno makes this multi-step orchestration feel like a single, cohesive software component rather than a fragile script.
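The masking step before escalation might look something like this. It is a rough sketch, not Agno's guardrail layer: the regular expressions are deliberately simple, and the `sk-` key prefix is an assumed format chosen for the example.

```python
import re

# Simple patterns for illustration; production PII detection needs far more care
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
API_KEY = re.compile(r"\bsk-[A-Za-z0-9]{8,}\b")  # assumed key format


def mask_sensitive(text: str) -> str:
    """Replace email addresses and API keys before text leaves the system."""
    text = EMAIL.sub("[email]", text)
    return API_KEY.sub("[api-key]", text)


summary = "User jane.doe@example.com reports key sk-abc12345XYZ failing with 401."
print(mask_sensitive(summary))
```

The point of running this inside the agent's control plane, rather than in the Slack integration, is that nothing downstream ever sees the unmasked text.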

The MCP Revolution: The Glue of 2026

We cannot discuss Strands Agents without diving deep into the Model Context Protocol (MCP). Before 2025, every framework had its own way of defining tools. If you wrote a Google Calendar tool for LangChain, you had to rewrite it for CrewAI.

MCP changed that. It is an open standard that allows a tool server to describe its capabilities in a way that any agent can understand. Strands is built from the ground up to be an "MCP Client."
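To make "describe its capabilities" concrete: an MCP server lists each tool with a name, a description, and a JSON Schema for its inputs, and a client invokes it with a JSON-RPC `tools/call` request. The sketch below builds such a request by hand; `create_event` and its fields are hypothetical, and `build_call` is an illustrative helper rather than part of any SDK.

```python
import json

# A tool description in the shape an MCP server returns from "tools/list"
calendar_tool = {
    "name": "create_event",  # hypothetical tool name
    "description": "Create a calendar event.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "start": {"type": "string", "description": "ISO 8601 start time"},
        },
        "required": ["title", "start"],
    },
}


def build_call(tool: dict, arguments: dict, request_id: int = 1) -> str:
    """Build a JSON-RPC "tools/call" request after checking required arguments."""
    missing = [k for k in tool["inputSchema"].get("required", []) if k not in arguments]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    })
```

Because the description is plain data, the same tool server can be consumed by any framework that speaks the protocol, which is exactly what removes the per-framework rewrite.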

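```python
from mcp.client.streamable_http import streamablehttp_client
from strands import Agent
from strands.tools.mcp import MCPClient

# The beauty of MCP is the zero-integration factor.
# Placeholder endpoints: substitute the URL each MCP server actually exposes.
github_client = MCPClient(lambda: streamablehttp_client("mcp://github.shshell.com"))
notion_client = MCPClient(lambda: streamablehttp_client("mcp://notion.shshell.com"))

# Both clients have to stay open while the agent uses their tools
with github_client, notion_client:
    agent = Agent(
        tools=github_client.list_tools_sync() + notion_client.list_tools_sync(),
        model="anthropic.claude-3-5-sonnet",
    )

    agent("Create a new Github issue for the bug I just added to the Notion task list.")
```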

For the "lean developer," this is the ultimate productivity boost. You stop being a "tool integrator" and start being an "agent architect." If your project relies heavily on a wide variety of external SaaS tools, the MCP-first approach of Strands makes it an incredibly compelling choice over the more "siloed" ecosystems of the past.

Security, Bedrock, and the AWS Guardrail Layer

Because Strands is so tightly integrated with the Amazon Bedrock ecosystem, it gains access to Bedrock Guardrails, a managed policy layer applied to model inputs and outputs. These guardrails can filter out PII, block toxic content, and, most importantly, help detect "Prompt Injection" attacks where a user tries to hijack the agent via the chat interface.

While Agno has built-in guardrails, Strands leverages infrastructure-level protection. For a fintech or healthcare company, having the agent's content policies enforced by the Bedrock service itself, rather than inside a pure Python library, is often a compliance prerequisite and can make SOC 2 audits easier to pass.

Performance Benchmarks: The Overhead Reality

| Framework | Startup Time | Memory Usage (Idle) | Complexity Ceiling |
|---|---|---|---|
| LangGraph | ~450ms | 120MB | Unlimited (Recursive Graphs) |
| CrewAI | ~320ms | 95MB | High (Managing Large Crews) |
| Agno | ~180ms | 65MB | Moderate (Enterprise Systems) |
| Strands | ~45ms | 22MB | Moderate (Model-Led Tasks) |

In a world where token costs are falling but latency remains high, these benchmarks matter. Strands is roughly 10x faster to initialize than LangGraph (about 45ms versus 450ms). If you are building a serverless function that spins up on every user request, that gap of roughly 400ms is the difference between a "snappy" UI and a "laggy" one.
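Startup numbers like these are worth reproducing on your own hardware. Here is a minimal sketch of timing a module import, using the standard-library module `colorsys` as a stand-in for a framework package; `time_import` is a hypothetical helper. Note the limitation: only the top-level module is evicted from the cache, so already-loaded dependencies make the result optimistic.

```python
import importlib
import sys
import time


def time_import(module_name: str) -> float:
    """Return the seconds taken to import a module that is not currently cached."""
    sys.modules.pop(module_name, None)  # drop the cached module so the import runs again
    start = time.perf_counter()
    importlib.import_module(module_name)
    return time.perf_counter() - start


elapsed = time_import("colorsys")
print(f"colorsys imported in {elapsed * 1000:.2f} ms")
```

For a true cold-start figure, measure in a fresh interpreter instead, for example with `python -X importtime -c "import your_framework"`.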

The Human-in-the-Loop Implementation Gap

Perhaps the most overlooked distinction is how these frameworks handle long-running "human-approval" cycles.

LangGraph uses a persistence layer to save the graph state. This allows the process to "die" and be resurrected days later when a human finally approves a tool call. This is technically rigorous but requires you to manage a persistent state store (like Redis or Postgres) alongside your application.
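The "die and be resurrected" behavior comes down to serializing state at the approval point and reloading it later. This sketch uses a JSON file in place of Redis or Postgres and is not LangGraph's checkpointer API; the function names, the `send_invoice` tool, and the status strings are all assumptions.

```python
import json
import tempfile
from pathlib import Path


def save_checkpoint(path: Path, state: dict) -> None:
    """Persist the run state so the process can safely exit."""
    path.write_text(json.dumps(state))


def load_checkpoint(path: Path) -> dict:
    """Read a previously saved run state."""
    return json.loads(path.read_text())


def resume(path: Path, approved: bool) -> dict:
    """Reload a paused run and apply the human decision to the pending tool call."""
    state = load_checkpoint(path)
    state["status"] = "running_tool" if approved else "cancelled"
    save_checkpoint(path, state)
    return state


checkpoint = Path(tempfile.mkdtemp()) / "thread-1.json"
save_checkpoint(checkpoint, {"pending_tool": "send_invoice", "status": "awaiting_approval"})
# The process can exit here; days later another process picks the run back up
print(resume(checkpoint, approved=True))
```

The operational cost mentioned above is visible even here: something has to own that file, or in production that table, and keep it consistent.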

Agno takes a more integrated approach, exposing a waiting_for_user flag in its REST API response. This makes it easier for frontend developers to build UIs that know exactly when to show a "Confirm" button.

CrewAI relies on its "Flows" orchestrator, which acts like a lightweight state machine on top of the agents. It allows for conditional branching based on human feedback, making it ideal for content-review workflows where the human might say, "This is good, but make it more professional."

The Rise of the Hybrid Stack

One of the most interesting trends we've observed in the first quarter of 2026 is the emergence of the "Hybrid Framework" stack. Developers are beginning to realize that they don't have to choose just one tool for the entire application.

For instance, a frequent pattern now involves using LangGraph for the core, high-stakes state machine logic where absolute control is required, while leveraging CrewAI's specialized agent roles for sub-tasks like creative writing or research synthesis. By treating a CrewAI "Crew" as a single node within a LangGraph state machine, teams get the best of both worlds: the rigid governance of a graph and the fluid collaboration of a role-based team.
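Treating a crew as a single node mostly means wrapping it in a function that takes state in and returns an update. The sketch below shows only that adapter shape, using plain Python; `crew_node` and `fake_crew` are hypothetical, with `fake_crew` standing in for whatever call actually kicks off the crew.

```python
from typing import Callable, Dict

State = Dict[str, str]


def crew_node(run_crew: Callable[[str], str]) -> Callable[[State], State]:
    """Wrap a team runner so it looks like any other graph node: state in, update out."""
    def node(state: State) -> State:
        return {"report": run_crew(state["topic"])}
    return node


def fake_crew(topic: str) -> str:
    """Hypothetical stand-in for kicking off a crew and returning its final text."""
    return f"Research summary for {topic}"


research_node = crew_node(fake_crew)
print(research_node({"topic": "edge AI chipsets"}))
```

The outer graph never needs to know how many agents ran inside the node, which is what keeps the governance layer and the collaboration layer separable.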

Similarly, we see developers using Agno as the "Memory and Storage" layer for agents orchestrated by Strands. Because Agno’s storage adapters are so robust, it is becoming common to use Agno to manage the user’s long-term profile and session history, while the actual agentic reasoning is handled by the lightweight, MCP-powered Strands SDK. This modularization of the agent stack—separating orchestration from storage and tool-calling—is the hallmark of a maturing industry.

The Final Takeaway: Matching Your Use Case

As we survey the landscape in late April 2026, it is clear that the "war" between frameworks isn't about which one is "better," but which one matches your organizational culture and project requirements.

- The Software Engineer's Choice: LangGraph. If you think in code, states, and transitions, and you need absolute certainty that your agent will never go rogue, the graph is your home.

- The Product Manager's Choice: CrewAI. If you think in outcomes, roles, and teamwork, and you want to see a "crew" of virtual employees solving complex business problems, CrewAI is unbeatable.

- The IT Architect's Choice: Agno. If you need to deploy an agent tomorrow that is secure, has a persistent database, and integrates with your existing enterprise stack, Agno is the most complete "out-of-the-box" solution.

- The Prototype Hero's Choice: Strands Agents. If you have a smart model, a handful of MCP tools, and a deadline, Strands gets the infrastructure out of your way and lets you ship.

In the end, the most successful agentic projects of 2026 aren't the ones with the most complex graphs; they are the ones that use the lightest framework possible for the job at hand. Stop over-engineering the logic and start focusing on the tools, the memory, and the human impact. The brain is getting smarter every day, so make sure your nervous system can keep up.

Final Outlook: Toward the Agentic Operating System

As we move toward the latter half of 2026, the industry is already shifting its gaze toward what comes after frameworks: the Agentic Operating System (AOS). In an AOS world, the distinction between "frameworks" begins to blur as standardized protocols like MCP and OpenTelemetry become the universal glue.

We are entering an era where the "Agent" is no longer a script you run; it is a background process that lives in your infrastructure, constantly sensing its environment, remembering its interactions, and collaborating with other autonomous processes. Whether you prioritize the rigid control of a LangGraph state machine or the fluid, model-governed simplicity of a Strands SDK, your choice today is building the foundation for that future.

The models are getting smarter, and the context windows are getting larger, but the fundamental challenge of building reliable, autonomous software remains. The most successful developers in this new era won't be the ones who know every framework inside and out; they will be the ones who understand when to let the model lead and when to enforce the rules of traditional engineering. Don't just build an agent—build a system that can grow with the intelligence it contains.

Analysis by Sudeep Devkota, Editorial Analyst at ShShell Research. Sudeep specializes in agentic architectures and production AI deployments. You can find his deep dives on enterprise AI every Tuesday on the ShShell Engineering Blog.