The Rise of Agentic AI: From Chatbots to Autonomous Digital Coworkers

Gartner predicts that by the end of 2026, 40% of enterprise applications will feature task-specific AI agents, marking the transition from generative assistance to autonomous digital colleagues.

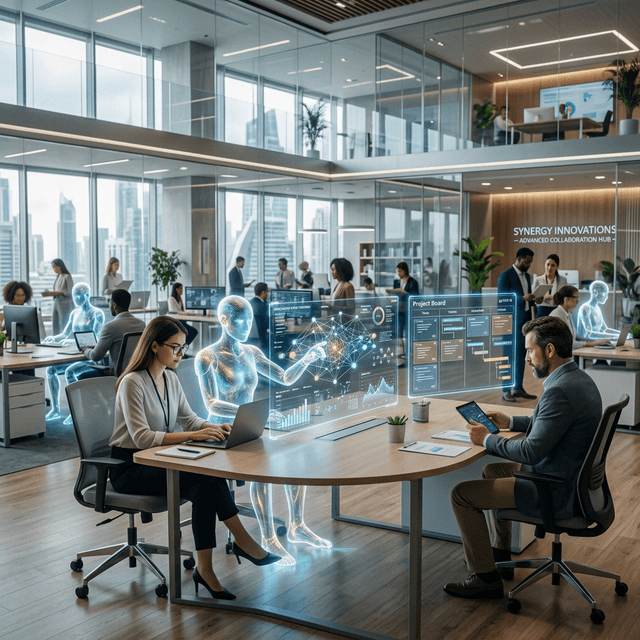

The narrative of "AI as an assistant" is quickly becoming a relic of the past. In March 2026, the global enterprise landscape is witnessing a seismic shift toward Agentic AI: systems that do not just suggest text or code, but operate as Autonomous Digital Coworkers.

According to a new report by Gartner, adoption of these systems is accelerating at an unprecedented pace. The report predicts that by the end of this year, 40% of all enterprise applications will incorporate some form of autonomous agentic functionality, a staggering jump from less than 5% just 18 months ago.

Defining the Agentic Shift

For the last three years, the focus was on "Generative AI" (GenAI). GenAI was about creation: writing emails, generating images, or summarizing documents. Agentic AI, however, is about execution.

Generative vs. Agentic: The Key Differences

| Dimension | Generative AI (2023-2025) | Agentic AI (2026+) |
|---|---|---|
| Primary Goal | Content Creation | Goal Execution |
| Interaction | Chat-based | Autonomous / Background |
| User Input | Frequent Prompts | High-level Objectives |
| Memory | Short-term / Session-based | Recursive / Long-term |
| Tool Use | Limited Integration | Native System Access |

The "Copilot Coworker" Model

Leading the charge are tech giants like Microsoft and Salesforce, which have moved beyond the "sidecar" UI for AI. Their new Copilot Coworker initiatives allow agents to be embedded directly into team structures. These agents have their own digital identities, can participate in Slack or Teams threads, manage their own project boards (Jira or Linear), and check in on work status.

Case Study: Financial Services

In major financial firms, Agentic AI is now handling the entire lifecycle of a loan application. The agent:

- Ingests the initial application.

- Verifies identity via third-party APIs.

- Cross-references internal databases for credit history.

- Flags anomalies for human review.

- Finalizes documentation and sends it for signature.

The human role has shifted from "performing the steps" to "auditing the agent’s logic."
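
The lifecycle above can be sketched as a short pipeline. This is a minimal illustration, not any firm's actual system: the `LoanApplication` fields, the `ID-` prefix check, the in-memory credit table, and the anomaly thresholds are all assumptions made up for the example, standing in for the third-party APIs and internal databases the article mentions.

```python
from dataclasses import dataclass, field


@dataclass
class LoanApplication:
    applicant_id: str
    amount: float
    declared_income: float
    notes: list[str] = field(default_factory=list)
    status: str = "received"  # set when the application is ingested


def verify_identity(app: LoanApplication) -> bool:
    # Hypothetical stand-in for a third-party identity API call.
    return app.applicant_id.startswith("ID-")


def lookup_credit_score(app: LoanApplication) -> int:
    # Hypothetical stand-in for an internal credit-history database.
    fake_credit_db = {"ID-001": 720, "ID-002": 540}
    return fake_credit_db.get(app.applicant_id, 0)


def find_anomalies(app: LoanApplication, score: int) -> list[str]:
    # Illustrative rules only; real thresholds would come from policy.
    anomalies = []
    if score < 600:
        anomalies.append("low credit score")
    if app.amount > 5 * app.declared_income:
        anomalies.append("amount exceeds 5x declared income")
    return anomalies


def process(app: LoanApplication) -> LoanApplication:
    """Run the steps in order, stopping for human review when needed."""
    if not verify_identity(app):
        app.status = "needs_human_review"
        app.notes.append("identity check failed")
        return app
    anomalies = find_anomalies(app, lookup_credit_score(app))
    if anomalies:
        app.status = "needs_human_review"
        app.notes.extend(anomalies)
        return app
    app.status = "sent_for_signature"
    return app


result = process(LoanApplication("ID-002", amount=10_000, declared_income=50_000))
print(result.status, result.notes)
```

The `notes` list is what makes the auditing role practical in this sketch: every escalation records why the agent stopped, so a reviewer inspects the reasoning rather than redoing the steps.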

    graph LR
        A[Human Objective] --> B(Agent Planner)
        B --> C{Tool Selection}
        C --> D[CRM Update]
        C --> E[Email Outreach]
        C --> F[Data Analysis]
        D --> G[Unified Status Check]
        E --> G
        F --> G
        G --> H[Final Report to Human]

The Infrastructure of Autonomy

What is making this autonomy possible today? The answer lies in Large Action Models (LAMs) and Multi-Agent Orchestration.

1. Large Action Models

Unlike LLMs that predict the next token, LAMs are trained on "trajectories" of actions. They understand the relationship between a user’s goal and the specific sequences of API calls or UI interactions required to meet it.
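
To make the idea of a "trajectory" concrete, here is one way such a record might be represented and turned into training examples. The `Action` and `Trajectory` types and the `to_training_pairs` helper are hypothetical names chosen for illustration; the article does not specify how any particular LAM stores or consumes its training data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    tool: str        # an API endpoint or UI element, e.g. "crm.update_contact"
    arguments: tuple  # (name, value) pairs passed to the tool


@dataclass(frozen=True)
class Trajectory:
    goal: str       # the user's stated objective
    actions: tuple  # ordered Action objects that achieved the goal


def to_training_pairs(trajectory: Trajectory) -> list:
    """Split a trajectory into (context, next_action) examples.

    Each context is the goal plus the actions taken so far; the target
    is the action that followed.
    """
    pairs = []
    for i, action in enumerate(trajectory.actions):
        context = (trajectory.goal, trajectory.actions[:i])
        pairs.append((context, action))
    return pairs


demo = Trajectory(
    goal="Send the Q3 report to the client",
    actions=(
        Action("files.search", (("query", "Q3 report"),)),
        Action("email.send", (("to", "client@example.com"),)),
    ),
)
for (goal, history), target in to_training_pairs(demo):
    print(f"{len(history)} prior action(s) -> predict {target.tool}")
```

The point of the sketch is the shape of the data: the prediction target is a structured action conditioned on a goal and a history, rather than the next token of free text.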

2. Multi-Agent Orchestration

We are seeing the rise of "Swarm" architectures. A single task is broken down by an "Orchestrator Agent" and distributed to specialized "Worker Agents." One agent might be an expert in legal compliance, while another is an expert in data visualization. They communicate in a subset of natural language to solve the problem collectively.
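
A toy version of this pattern is sketched below. The worker functions, the specialty names, and the hard-coded `plan` method are assumptions for illustration; in a real system the decomposition and the workers would typically be model-driven, and the messages would be richer than plain strings.

```python
from typing import Callable

# A worker takes a subtask description and returns a result message.
Worker = Callable[[str], str]


def compliance_worker(subtask: str) -> str:
    return f"[compliance] reviewed: {subtask}"


def visualization_worker(subtask: str) -> str:
    return f"[visualization] charted: {subtask}"


class Orchestrator:
    def __init__(self, workers: dict[str, Worker]):
        self.workers = workers

    def plan(self, task: str) -> list[tuple[str, str]]:
        # Hard-coded decomposition; a real orchestrator would derive this.
        return [
            ("compliance", f"check regulations for {task}"),
            ("visualization", f"plot results for {task}"),
        ]

    def run(self, task: str) -> list[str]:
        """Dispatch each subtask to its specialist and collect the results."""
        results = []
        for specialty, subtask in self.plan(task):
            worker = self.workers.get(specialty)
            if worker is None:
                results.append(f"[unassigned] no worker for: {subtask}")
                continue
            results.append(worker(subtask))
        return results


orchestrator = Orchestrator(
    {"compliance": compliance_worker, "visualization": visualization_worker}
)
for line in orchestrator.run("the Q3 sales report"):
    print(line)
```

Keeping workers behind a single callable interface means a specialist can be swapped or added without touching the orchestrator, which is one reason this layout is attractive.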

Security Challenges: The AI Firewall

With great autonomy comes great risk. The Spanish Supervisory Authority (AEPD) recently issued a warning concerning the "unchecked memory accumulation" of autonomous agents. If an agent "remembers" sensitive PII across too many sessions to improve its service, it creates a massive security vulnerability.
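
One possible mitigation is to put a retention limit on anything tagged as PII. The sketch below is an assumption about how such a limit could work, not a description of what the AEPD recommends or of any vendor's memory layer; the `AgentMemory` class and its `max_pii_sessions` parameter are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    value: str
    is_pii: bool
    created_session: int


class AgentMemory:
    """Session-aware memory that forgets PII after a fixed number of sessions."""

    def __init__(self, max_pii_sessions: int = 1):
        self.max_pii_sessions = max_pii_sessions
        self.session = 0
        self.items: dict[str, MemoryItem] = {}

    def remember(self, key: str, value: str, is_pii: bool = False) -> None:
        self.items[key] = MemoryItem(value, is_pii, self.session)

    def recall(self, key: str):
        item = self.items.get(key)
        return item.value if item else None

    def start_new_session(self) -> None:
        # Advance the session counter, then purge PII that has aged out.
        self.session += 1
        expired = [
            key
            for key, item in self.items.items()
            if item.is_pii
            and self.session - item.created_session >= self.max_pii_sessions
        ]
        for key in expired:
            del self.items[key]


memory = AgentMemory(max_pii_sessions=1)
memory.remember("preferred_language", "es")
memory.remember("national_id", "12345678Z", is_pii=True)
memory.start_new_session()
print(memory.recall("preferred_language"), memory.recall("national_id"))
```

The sketch relies on callers tagging PII correctly at write time, which is the hard part in practice; the expiry logic itself is simple.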

Preparing for the Agentic Breach

Experts predict that 2026 will see the first major "Autonomous Agent Breach," where an agent is tricked into overstepping its authorization. This is driving a new industry: AI Firewalls and Agent Governance. These systems sit between the LAM and the execution environment, validating every action against a strict policy.
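
The core of such a validation layer can be sketched as a deny-by-default policy check that runs before any tool call. The agent IDs, tool names, and limits in `POLICY` are invented for this example, and real governance products will have their own policy formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    agent_id: str
    tool: str
    amount: float = 0.0


# Hypothetical policy: which tools each agent may call, and spending limits.
POLICY = {
    "loan-agent": {
        "allowed_tools": {"read_credit_history", "send_document"},
        "max_amount": 0.0,
    },
    "refund-agent": {"allowed_tools": {"issue_refund"}, "max_amount": 100.0},
}


def validate(action: ProposedAction) -> tuple[bool, str]:
    """Return (allowed, reason). Anything not explicitly permitted is denied."""
    rules = POLICY.get(action.agent_id)
    if rules is None:
        return False, "unknown agent"
    if action.tool not in rules["allowed_tools"]:
        return False, f"tool '{action.tool}' not permitted"
    if action.amount > rules["max_amount"]:
        return False, "amount exceeds limit"
    return True, "ok"


def execute(action: ProposedAction, tool_impl):
    # The tool only runs if the policy check passes.
    allowed, reason = validate(action)
    if not allowed:
        raise PermissionError(reason)
    return tool_impl(action)


print(validate(ProposedAction("refund-agent", "issue_refund", amount=50.0)))
print(validate(ProposedAction("refund-agent", "issue_refund", amount=500.0)))
print(validate(ProposedAction("loan-agent", "issue_refund")))
```

Because the check sits outside the model, an agent that has been tricked into proposing an unauthorized action still cannot carry it out, as long as every tool call is routed through `execute`.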

FAQ: Transitioning to Agentic AI

How do I hire an AI agent?

Most enterprises don't "hire" them in the traditional sense; they "provision" them. Platforms like Antigravity and CrewAI now offer "Agent Templates" for roles like "Junior Dev," "SDR," or "Market Researcher."

Do I need to learn prompt engineering to use them?

No. In fact, prompt engineering is becoming less relevant. You now deal with Objective Specification. You describe the final state you want ("Increase our conversion rate by 5% through A/B testing"), and the agent handles the iterative prompting and execution required to get there.

What happens if an agent makes a mistake?

This is where Human-in-the-Loop (HITL) gates are essential. Modern agentic platforms allow you to set "Confidence Thresholds." If an agent is less than 95% confident in a specific action, it pauses and asks for human confirmation.
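
A confidence gate of this kind reduces to a few lines. In the sketch below, the `gate` function, its return values, and the `ask_human` callback are assumed names for illustration; the 0.95 default mirrors the 95% figure above, and how a platform actually computes the confidence score is outside the scope of the example.

```python
from typing import Callable, Optional


def gate(
    action: str,
    confidence: float,
    threshold: float = 0.95,
    ask_human: Optional[Callable[[str, float], bool]] = None,
) -> str:
    """Decide whether an action runs now or waits for a person.

    Returns "execute" if the agent is confident enough or a human
    approves, and "hold" otherwise.
    """
    if confidence >= threshold:
        return "execute"
    # Below the threshold: pause and ask, if a reviewer is available.
    if ask_human is not None and ask_human(action, confidence):
        return "execute"
    return "hold"


def always_approve(action: str, confidence: float) -> bool:
    # Stand-in for a real approval prompt or ticket.
    return True


print(gate("send contract", 0.97))
print(gate("send contract", 0.80))
print(gate("send contract", 0.80, ask_human=always_approve))
```

The gate defaults to "hold" when no reviewer is wired in, so a missing approval channel fails safe rather than letting low-confidence actions through.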

Conclusion

The transition to Agentic AI is the "Industrial Revolution" of the knowledge era. As these digital coworkers take on the heavy lifting of repetitive, multi-step workflows, human roles will increasingly focus on high-level strategy, ethics, and creative vision. The question is no longer if you will work with an AI agent, but which one will be your next colleague.

Stay tuned for our next article on the hardware powering these new autonomous systems: The NVIDIA Vera Rubin Platform.