The $1.25 Trillion Colossus: Inside SpaceX-xAI's Plan to Put Data Centers in Orbit

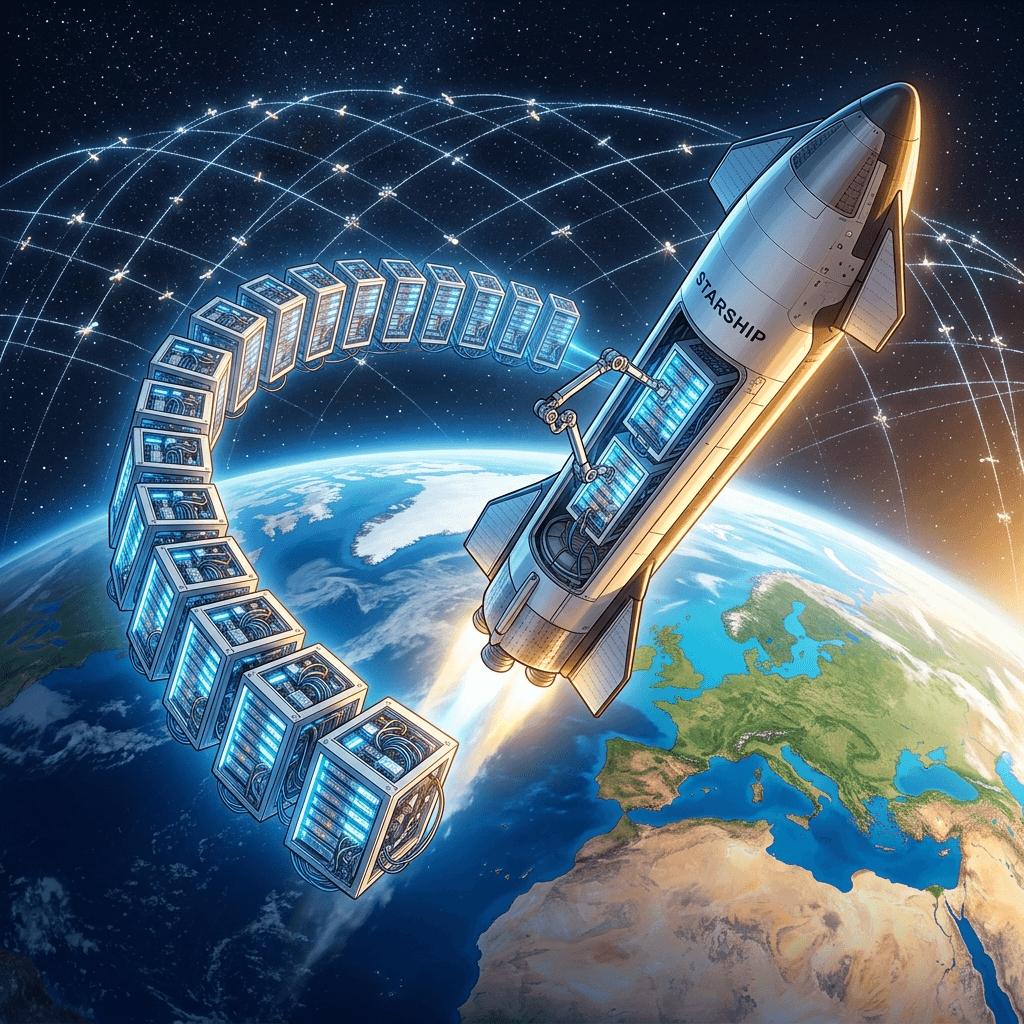

SpaceX acquired xAI for $250 billion, forming a $1.25 trillion vertically integrated AI-space empire. Now they want to put data centers in space. Deep analysis of the merger and its implications.

The Deal That Bent Reality

When the announcement crossed the wire on February 2, 2026—SpaceX acquiring xAI in a $250 billion all-stock transaction—the financial world's reaction split cleanly into two camps. The first camp applied traditional valuation frameworks, concluded that paying a quarter of a trillion dollars for a company whose primary asset was a large language model called Grok was absurd, and moved on. The second camp looked at who was selling, who was buying, and what the combined entity would be capable of, and arrived at a different conclusion: this was the most strategically coherent megadeal in the history of technology.

Both camps were right. The deal was financially absurd by any conventional metric. It was also, in the context of Elon Musk's long-stated ambition to build a vertically integrated civilization-scale technology platform, completely inevitable. SpaceX and xAI were already sharing office space in Austin. They already shared a founder, a board member, and a chief of staff. The acquisition formalized a relationship that had been operationally merged for months.

The combined entity, now valued at approximately $1.25 trillion, controls the world's largest rocket launch network, the world's largest satellite internet constellation, one of the world's most advanced AI model families, and—in its most audacious strategic bet—the infrastructure to build data centers in outer space.

Why Space Data Centers Are Not Science Fiction

The idea of orbiting compute infrastructure sounds like it belongs in a 2035 strategy deck, not a 2026 operational plan. But the SpaceX-xAI merger creates a unique convergence of capabilities that makes space-based computing technically plausible, if not yet economically proven.

Start with the problem that space data centers would solve. Terrestrial data centers face three escalating constraints. Land: the construction of hyperscale data centers requires hundreds of acres of flat terrain with access to fiber optic networks and electrical substations, creating intense competition for suitable sites in Northern Virginia, the American Southwest, and Nordic countries. Power: a single hyperscale data center consumes between 100 and 300 megawatts of electricity—equivalent to a small city—and the aggregate power demand of global AI compute is now competing with residential and industrial consumption for grid capacity. Cooling: GPU-dense AI training clusters generate extraordinary heat loads, requiring either massive chillers (consuming additional power) or liquid cooling infrastructure (requiring water resources that are scarce in the desert environments where cheap solar power is available).

Space eliminates two of these three constraints. In low Earth orbit, the vacuum of space provides passive cooling through radiative heat dissipation: no water, no chillers, and no additional power consumption for thermal management. And land area is, by definition, unlimited. The remaining constraint, power, is addressed by solar panels, which collect significantly more energy above the atmosphere, where sunlight is not attenuated by clouds, dust, or atmospheric absorption.

The missing piece has always been launch economics. Putting hardware in orbit costs money—historically, between $10,000 and $50,000 per kilogram to low Earth orbit. At those prices, shipping a single server rack (roughly 1,000 kilograms) to space would cost between $10 million and $50 million, making space compute roughly a thousand times more expensive than terrestrial alternatives.

SpaceX's Starship changes this equation. With a target cost of approximately $100 per kilogram to LEO at full reusability and high launch cadence, Starship reduces the cost of orbiting a server rack to roughly $100,000. That is still more expensive than a terrestrial rack deployment, but it is within the same order of magnitude—and it eliminates the land and cooling costs that make terrestrial data center construction increasingly prohibitive.
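The launch arithmetic in the two paragraphs above can be checked in a few lines. This is a minimal sketch that uses only the figures quoted in the text (a 1,000 kg rack, $10,000 to $50,000 per kilogram historically, and a $100 per kilogram Starship target); the function name and the flat per-kilogram pricing model are illustrative assumptions, not SpaceX's actual pricing.

```python
RACK_MASS_KG = 1_000  # approximate rack mass quoted in the text


def launch_cost_usd(mass_kg: float, usd_per_kg: float) -> float:
    """Cost to lift a payload to LEO, assuming a flat per-kilogram price."""
    return mass_kg * usd_per_kg


# Per-kilogram prices taken from the article; everything else is arithmetic.
historical_low = launch_cost_usd(RACK_MASS_KG, 10_000)
historical_high = launch_cost_usd(RACK_MASS_KG, 50_000)
starship_target = launch_cost_usd(RACK_MASS_KG, 100)

print(f"Historical: ${historical_low:,.0f} to ${historical_high:,.0f}")
print(f"Starship target: ${starship_target:,.0f}")
print(
    "Reduction factor: "
    f"{historical_low / starship_target:.0f}x to "
    f"{historical_high / starship_target:.0f}x"
)
```

At the target price, the launch bill for one rack falls by a factor of 100 to 500 relative to the historical range.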

graph TD

A[SpaceX-xAI Merger] --> B[Launch Infrastructure]

A --> C[Satellite Network]

A --> D[AI Model Family]

B --> E[Starship: $100/kg to LEO]

C --> F[Starlink: Global Connectivity]

D --> G[Grok: Inference Engine]

E --> H[Orbital Data Centers]

F --> H

G --> H

H --> I[Passive Cooling: Zero Power]

H --> J[Solar Power: 40% Higher Efficiency]

H --> K[Unlimited Space: No Land Constraints]

I --> L[Competitive Compute Cost]

J --> L

K --> L

L --> M[AI Training at Scale]

L --> N[Global Low-Latency Inference]

Grok as the Nervous System

While the space data center ambition captures imagination, the more immediate impact of the merger is the integration of xAI's Grok model across SpaceX's existing operational infrastructure. Grok is being deployed in three operational domains that leverage SpaceX's unique data advantages.

In telemetry analysis, Grok processes real-time sensor data from Falcon 9 and Starship launch vehicles. Each rocket generates terabytes of telemetry during flight—vibration sensors, temperature probes, pressure transducers, GPS coordinates, inertial measurement units—flowing into ground stations at millisecond intervals. Previously, anomaly detection relied on threshold-based alerts and post-flight statistical analysis. Grok's integration enables predictive anomaly detection: the model identifies patterns in sensor data that presage mechanical failures before they manifest as threshold violations, enabling pre-emptive course corrections or controlled abort sequences.
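The difference between a threshold alert and an early-warning signal can be illustrated with a toy example. The sketch below is not Grok's method, which the source does not describe; it swaps in a simple rate-of-change check as a stand-in for "predictive" detection. The sensor values, the limit of 120, the 5-sample window, and the allowed rise of 0.25 per step are all hypothetical.

```python
from typing import Optional, Sequence


def first_threshold_alert(readings: Sequence[float], limit: float) -> Optional[int]:
    """Index of the first reading that exceeds a fixed limit, or None."""
    for i, value in enumerate(readings):
        if value > limit:
            return i
    return None


def first_trend_alert(
    readings: Sequence[float], window: int, max_rise_per_step: float
) -> Optional[int]:
    """Index of the first reading whose average rise over `window` steps is too steep."""
    for i in range(window, len(readings)):
        rise = (readings[i] - readings[i - window]) / window
        if rise > max_rise_per_step:
            return i
    return None


# Hypothetical sensor trace: steady at 100, then drifting upward by 0.5 per sample.
readings = [100.0] * 20 + [100.0 + 0.5 * k for k in range(1, 61)]

threshold_index = first_threshold_alert(readings, limit=120.0)
trend_index = first_trend_alert(readings, window=5, max_rise_per_step=0.25)
print(f"Threshold alert at sample {threshold_index}, trend alert at sample {trend_index}")
```

On this trace the trend check fires dozens of samples before the fixed limit is crossed, which is the kind of lead time the paragraph above attributes to predictive detection.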

In Starlink network optimization, Grok manages the constellation's increasingly complex mesh routing. Starlink now operates over 7,000 satellites in low Earth orbit, each maintaining laser interconnects with neighboring satellites and ground-to-satellite radio links with millions of user terminals. Optimizing bandwidth allocation, managing satellite handoffs as terminals move between coverage areas, and coordinating collision avoidance maneuvers together pose an optimization problem of extraordinary dimensionality. Grok's ability to process multimodal inputs (orbital mechanics data, atmospheric conditions, network traffic patterns, and user density maps) enables real-time optimization that deterministic algorithms cannot match.

In customer operations, Grok has replaced the previous chatbot infrastructure for Starlink customer support, reducing average resolution time by approximately forty percent according to internal metrics shared during the merger announcement. This application, while less technically glamorous than telemetry analysis, generates immediate revenue impact by reducing the headcount required for the customer service organization that supports Starlink's estimated forty million subscribers.

The Vertical Integration Thesis

The strategic logic of the SpaceX-xAI merger becomes clearest when viewed through the lens of vertical integration. Most technology companies operate at one or two layers of the infrastructure stack. Google builds models and cloud services but buys its hardware from third parties and leases data center space. Microsoft builds software and cloud services but relies on partners for networking infrastructure. Amazon builds cloud services and logistics networks but sources its computing hardware from manufacturers.

SpaceX-xAI operates at every layer simultaneously:

| Stack Layer | SpaceX-xAI Asset | Competitor Equivalent |
|---|---|---|
| Hardware manufacturing | Raptor engines, satellite hardware | N/A (no AI company builds its own rockets) |
| Launch infrastructure | Falcon 9, Starship | No competitor has launch capability |
| Communications network | Starlink constellation | AWS Ground Station, Azure Orbital (narrow) |
| Data center infrastructure | Existing ground facilities + orbital plans | AWS, Azure, GCP |
| AI model development | Grok model family | GPT, Claude, Gemini |
| End-user applications | X platform integration, Starlink support | ChatGPT, Claude.ai, Google Search |
| Recurring revenue | Starlink subscriptions (40M+ subscribers) | Cloud/SaaS subscriptions |

No other entity on Earth controls this breadth of the technology stack. The closest historical analog is AT&T during the Bell System era—a company that manufactured telephones (Western Electric), operated the research lab that invented the transistor (Bell Labs), owned the continental communications network, and served every telephone customer in America. The Bell System was eventually broken up by antitrust regulators who judged its vertical integration to be anticompetitive. Whether SpaceX-xAI faces similar regulatory scrutiny remains an open question that antitrust scholars are already debating.

The Tesla-Optimus Connection

The merger announcement explicitly referenced integration with Tesla's operations, particularly the Optimus humanoid robot program. Grok is being adapted as the cognitive backbone for Optimus robots deployed in Tesla manufacturing facilities, providing the natural language understanding, spatial reasoning, and task planning that factory-floor robots require.

This creates a scenario in which a single AI model family—Grok—operates across rockets (SpaceX telemetry), satellites (Starlink optimization), social media (X platform), automobiles (Tesla FSD), humanoid robots (Optimus), and potentially orbital data centers. The data flywheel this creates is staggering. Each operational domain generates unique training data that improves the model's performance across all domains. A failure mode detected in Starship telemetry that resembles a pump cavitation pattern observed in Tesla manufacturing feeds back into the model's general understanding of fluid dynamics, improving anomaly detection everywhere simultaneously.

The competitive implications for standalone AI companies are sobering. An AI lab that trains models on text and code competes with Grok on that narrow dimension. But Grok learns simultaneously from rocket launches, satellite networks, manufacturing processes, autonomous driving, humanoid locomotion, and social media interactions. The data diversity is a structural advantage that no amount of funding can replicate—because the data comes from physical systems that only SpaceX-xAI operates.

The Financial Architecture

The all-stock nature of the acquisition reveals important details about the financial engineering behind the deal. No cash changed hands. SpaceX issued new shares valued at $250 billion to xAI's existing shareholders, primarily Elon Musk himself, who controlled the majority of both entities. The circular nature of this transaction (Musk selling shares in a company he controls to another company he controls, in exchange for shares in the acquiring company) drew immediate scrutiny from securities regulators and corporate governance advocates.

The SEC is reportedly reviewing the transaction for potential self-dealing concerns, particularly regarding the valuation methodology used to arrive at xAI's $250 billion price tag. Independent valuations of xAI prior to the acquisition ranged from $45 billion (based on revenue multiples) to $80 billion (based on comparable AI startup valuations). The $250 billion figure appears to reflect a "strategic premium" that some analysts describe as Musk's personal assessment of xAI's future value within the integrated SpaceX ecosystem.

The planned IPO of the combined entity adds another layer of financial complexity. Investment banks have valued the potential IPO at between $1.5 trillion and $2 trillion, which would make it the largest public offering in history—surpassing Saudi Aramco's 2019 IPO. The revenue profile is compelling: Starlink alone generates recurring revenue from over forty million subscribers, providing the kind of predictable cash flow that public market investors prize. The AI division's contribution is harder to value but provides the growth narrative that commands premium multiples.

What the Rest of the Industry Sees

For every other company building AI infrastructure, the SpaceX-xAI merger represents an existential strategic challenge. The hyperscale cloud providers—AWS, Azure, and GCP—have spent decades building terrestrial data center empires. Their combined capital expenditures on data center construction now exceed $150 billion annually. The possibility that a competitor could bypass terrestrial infrastructure entirely, building compute capacity in orbit at potentially lower marginal cost per watt, threatens the fundamental economics of the cloud computing industry.

The more immediate competitive concern is access to launch capability. If SpaceX prioritizes its own orbital data center deployments over commercial launch contracts—or simply charges competitors higher rates for launch services—the company gains an infrastructure advantage that cannot be offset by engineering innovation or capital investment. You cannot build a rocket fast enough to catch up with a company that already launches more payload to orbit than every other launch provider combined.

This concern has already prompted competitor activity. Amazon's Project Kuiper satellite constellation, Blue Origin's New Glenn launch vehicle, and Rocket Lab's Neutron program are all partly motivated by the need to establish independent launch and space-based computing capabilities that do not depend on SpaceX infrastructure. The race to build space-based compute is no longer speculative—it is an active competitive battlefront with billions of dollars in capital allocation at stake.

The Governance Question Nobody Is Answering

A $1.25 trillion entity that controls global internet access (Starlink), possesses advanced AI capabilities (Grok), operates the world's most capable launch infrastructure (Starship), and plans to deploy computing infrastructure beyond the reach of any national jurisdiction raises governance questions that existing regulatory frameworks are not equipped to answer.

Who has jurisdiction over a data center in low Earth orbit? If compute operations are conducted above international waters, which country's data protection regulations apply? If Grok processes the personal data of Starlink subscribers while running on orbital hardware, does GDPR apply? Does CCPA? Does any law?

These questions are not hypothetical. They will require answers before the first orbital server rack is powered on. The legal scholars, regulators, and policy advocates who will shape those answers are only now beginning to grapple with the implications of a company that operates simultaneously in aerospace, telecommunications, artificial intelligence, and automotive manufacturing—and may soon operate in a domain where terrestrial law does not clearly reach.

The Technical Challenges Nobody Talks About

The orbital data center vision, for all its strategic elegance, faces engineering challenges that the merger announcement conspicuously underemphasized. Radiation hardening is perhaps the most significant. Computing hardware in low Earth orbit is exposed to ionizing radiation levels that would be catastrophic for consumer-grade GPUs. Cosmic rays and solar particle events cause single-event upsets—random bit flips in memory that corrupt calculations, crash processes, and in worst cases permanently damage transistors. The International Space Station experiences measurable radiation-induced computing errors daily despite shielding that adds significant mass and cost.

AI training workloads are particularly sensitive to bit flips because they involve extended numerical computations where a single corrupted gradient can propagate errors across an entire model. Radiation-hardened processors exist—they power satellites, Mars rovers, and military systems—but they are typically two to three generations behind consumer hardware in performance and cost ten to a hundred times more per unit of compute. Running frontier AI training on radiation-hardened hardware would negate much of the economic advantage that orbital deployment is supposed to provide.
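To see why a single flipped bit matters so much, consider what it does to one 32-bit floating-point value. The sketch below is an illustration only: the gradient value of 0.5 and the helper name `flip_bit` are assumptions, and real radiation upsets can hit any bit of any memory cell. It uses Python's standard `struct` module to reinterpret the float as raw bits.

```python
import struct


def flip_bit(value: float, bit: int) -> float:
    """Return `value` with one bit of its IEEE 754 float32 encoding inverted."""
    (raw,) = struct.unpack("<I", struct.pack("<f", value))
    (flipped,) = struct.unpack("<f", struct.pack("<I", raw ^ (1 << bit)))
    return flipped


gradient = 0.5  # hypothetical gradient value

low_bit = flip_bit(gradient, 0)    # least significant mantissa bit
high_bit = flip_bit(gradient, 30)  # most significant exponent bit

print(f"original:       {gradient}")
print(f"bit 0 flipped:  {low_bit}")
print(f"bit 30 flipped: {high_bit:.3e}")
```

A flip in the low mantissa bit changes the value by less than one part in a million, while a flip in the top exponent bit turns 0.5 into roughly 1.7e38. An optimizer step that consumes a value like that can wreck the weights it touches, which is why training is more exposed than workloads that tolerate occasional small errors.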

SpaceX engineers have proposed an alternative approach: massive redundancy combined with error-correcting codes. Rather than hardening individual processors, the orbital data center would deploy commodity hardware in triplicate, running each computation on three independent processing units and voting on the result. This approach is computationally wasteful—it triples the hardware requirement for any given workload—but it may be cheaper than radiation hardening if Starship launch costs reach the projected $100-per-kilogram target.
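The triplicate-and-vote scheme described above is commonly called triple modular redundancy. A minimal sketch follows, assuming integer results and a simulated fault injected by XOR; the function names and the fault model are hypothetical and are not drawn from any SpaceX design.

```python
from collections import Counter
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def majority_vote(results: Sequence[T]) -> T:
    """Return the value reported by a strict majority of replicas, else raise."""
    value, count = Counter(results).most_common(1)[0]
    if count * 2 <= len(results):
        raise RuntimeError("no majority: replicas disagree")
    return value


def run_redundant(compute: Callable[[int], int], x: int, faults: Sequence[int]) -> int:
    """Run `compute` once per simulated unit; faults[i] is XORed into unit i's output."""
    outputs = [compute(x) ^ fault for fault in faults]
    return majority_vote(outputs)


def square(n: int) -> int:
    return n * n


# Unit 1 suffers a simulated bit flip (bit 5); units 0 and 2 are healthy.
print(run_redundant(square, 12, faults=[0, 1 << 5, 0]))  # prints 144
```

The vote masks any single faulty unit, but it cannot recover if two units fail in the same window, and comparing floating-point results this way only works when all three replicas are bit-for-bit deterministic.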

Thermal management, too, is more complex than the "passive cooling in space" narrative suggests. Radiative cooling in vacuum is effective but slow. A GPU cluster generating kilowatts of heat needs large radiator surfaces to dissipate that energy into space, and the radiators themselves add mass, complexity, and potential failure points. The sunny side of an orbital platform can reach temperatures exceeding 120°C while the shady side drops below -150°C, creating thermal cycling stresses that accelerate hardware degradation. Managing these gradients across a data center-scale installation is an engineering problem without precedent.
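The radiator sizing problem can be estimated with the Stefan-Boltzmann law, which gives the power radiated by a surface as emissivity times the Stefan-Boltzmann constant times area times the fourth power of temperature. The sketch below is a rough lower bound under stated assumptions: a hypothetical 1 MW heat load, a radiator surface at 300 K with emissivity 0.9, radiation from one side only, and no heat absorbed from sunlight or from Earth. None of these figures come from the source.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w: float, temp_k: float, emissivity: float = 0.9) -> float:
    """Ideal radiator area needed to reject `heat_w` watts at surface temperature `temp_k`.

    Ignores absorbed sunlight and Earth infrared, so real radiators must be larger.
    """
    return heat_w / (emissivity * STEFAN_BOLTZMANN * temp_k ** 4)


# Hypothetical 1 MW cluster with radiators held at 300 K.
area = radiator_area_m2(heat_w=1e6, temp_k=300.0)
print(f"Radiator area: {area:,.0f} m^2")
```

Under these assumptions a single megawatt needs on the order of 2,400 square meters of radiator. Because the area scales with the inverse fourth power of temperature, running the radiators hotter shrinks them quickly, but the chips being cooled set a ceiling on how hot the loop can run.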

Maintenance is the third unsolved challenge. Terrestrial data centers employ technicians who can swap failed drives, replace faulty network cards, and upgrade hardware on an ongoing basis. An orbital data center has no such luxury. Every hardware failure that cannot be resolved remotely requires either robotic repair (using systems that do not yet exist at the required reliability level) or a Starship visit to physically swap components—a process that, even at reduced launch costs, would be orders of magnitude more expensive than walking a technician to a server rack.

The Latency Problem and the Physics of Distance

For AI inference—serving user queries in real time—orbital data centers face an even more fundamental constraint: the speed of light. A data center in low Earth orbit at 550 kilometers altitude adds approximately 3.7 milliseconds of round-trip latency for the ground-to-satellite-to-ground communication path. This is in addition to any processing latency and does not account for the inter-satellite laser links that may be required to route data between the nearest Starlink satellite and the orbital data center's actual location.
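The 3.7 millisecond figure follows directly from the speed of light, and it is a best case. The sketch below reproduces it for a satellite directly overhead and then shows how the path lengthens when the satellite sits lower in the sky. The 25-degree elevation angle is a hypothetical example, the mean Earth radius of 6,371 km is a standard value, and the calculation ignores processing, queuing, and inter-satellite hops.

```python
import math

SPEED_OF_LIGHT_KM_S = 299_792.458
EARTH_RADIUS_KM = 6_371.0  # mean Earth radius


def slant_range_km(altitude_km: float, elevation_deg: float) -> float:
    """Distance from a ground terminal to a satellite seen at a given elevation angle."""
    el = math.radians(elevation_deg)
    orbit_radius = EARTH_RADIUS_KM + altitude_km
    return (
        math.sqrt(orbit_radius ** 2 - (EARTH_RADIUS_KM * math.cos(el)) ** 2)
        - EARTH_RADIUS_KM * math.sin(el)
    )


def up_down_latency_ms(altitude_km: float, elevation_deg: float = 90.0) -> float:
    """Light travel time for ground -> satellite -> ground, in milliseconds."""
    return 2 * slant_range_km(altitude_km, elevation_deg) / SPEED_OF_LIGHT_KM_S * 1_000


print(f"Overhead (90 deg): {up_down_latency_ms(550):.2f} ms")
print(f"Low pass (25 deg): {up_down_latency_ms(550, 25):.2f} ms")
```

Directly overhead the result is about 3.67 ms, matching the figure above. At a 25-degree elevation the path roughly doubles, so the propagation delay alone lands near 7.5 ms.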

For many AI inference tasks, 4-10 milliseconds of additional latency is commercially acceptable. Chatbot conversations, document summarization, and code generation are not latency-sensitive enough for users to notice a small delay. But for applications that require sub-millisecond response times—high-frequency trading systems, real-time autonomous vehicle decision-making, interactive gaming AI—orbital compute is simply too far away.

This constrains the orbital data center's role to specific workload types: batch training (where latency is irrelevant), bulk inference (where throughput matters more than per-request latency), and disaster recovery (where orbital placement provides geographic independence from terrestrial natural disasters and geopolitical disruptions). The vision of orbital compute as a general-purpose replacement for terrestrial cloud infrastructure is limited by physics in ways that no amount of engineering can overcome.

National Security and the Weaponization Question

A company that controls global internet access, advanced AI capabilities, autonomous rocket launch infrastructure, and orbital computing platforms presents national security considerations that transcend commercial competition. The U.S. Department of Defense is simultaneously one of SpaceX's largest customers (through launch contracts and Starlink military deployments) and one of its most concerned overseers.

The concern is not that SpaceX-xAI would deliberately weaponize its infrastructure—it is that the infrastructure is inherently dual-use. Starlink satellites can provide internet connectivity to humanitarian operations and military command-and-control systems with equal ease. Grok's telemetry analysis capabilities, developed for rocket launches, could be adapted for missile tracking or autonomous weapons targeting. Orbital data centers, positioned beyond the reach of terrestrial law enforcement, could process intelligence data for any customer willing to pay.

The Committee on Foreign Investment in the United States (CFIUS) has reportedly initiated an informal review of the SpaceX-xAI merger's national security implications, though no formal investigation has been announced. The review is complicated by the fact that Elon Musk holds security clearances related to SpaceX's classified government contracts—clearances that create both access to sensitive information and obligations to protect it.

International reactions have been equally wary. The European Union's Commissioner for Internal Market has publicly questioned whether a single private entity should control critical infrastructure spanning communications, artificial intelligence, and space access. China's Ministry of Industry and Information Technology issued a statement describing the merger as "a monopolistic consolidation of strategic technologies," and China is accelerating its own domestic alternatives across all three domains.

The $1.25 trillion colossus continues assembling itself, one Starship launch at a time. The rest of the technology industry watches from the ground, wondering whether the future of computing is above them—literally.