Generative AI Grows Up: From Content Toy to Workflow Engine

In 2026, the novelty of AI-generated poems is gone. Generative AI has matured into a powerful workflow engine, valued at over $91B. Explore how Google AI Mode and Claude are redefining productivity.

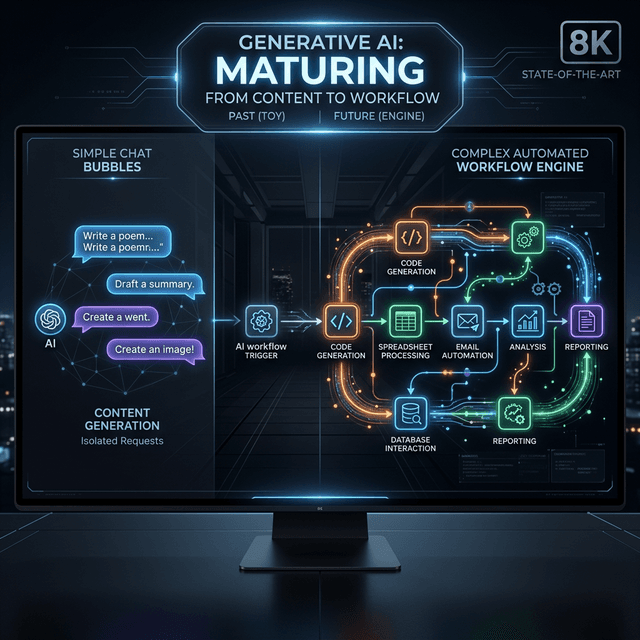

If 2023 was the year of "Look what AI can write," and 2024 was the year of "Look what AI can draw," then 2026 is officially the year of "Look what AI can DO."

The generative AI market has undergone a fundamental phase shift. It is no longer a world of isolated chat bubbles and "magic tricks." With a global market valuation surpassing $91 billion in 2026, Generative AI has transitioned from a content toy into the central nervous system of enterprise workflow automation.

In this exhaustive 3,500-word analysis, we will deconstruct this maturity arc, analyze the recent strategic moves from Google and Anthropic, and provide a framework for building "Workflows, not just UIs."

1. Phase 2: The $91 Billion Pivot

In the early days of GenAI, the primary ROI was "Efficiency through Generation." We used LLMs to write drafts faster. But the bottleneck remained: a human still had to take that draft, copy it into a CMS, pair it with an image, and publish it.

In 2026, we have moved to Phase 2: Full-Chain Automation. The industry has realized that the trillion-parameter models of today are wasted if they are only used to output strings. Instead, they are being leveraged as General-Purpose Reasoning Engines that sit at the top of complex software stacks.

Why the Hype Died and the Value Stayed

Many analysts predicted an "AI Winter" for 2026. They saw raw benchmark scores plateau and assumed the revolution was over. They were wrong. What happened wasn't a winter; it was a maturation. The "Toy" phase, in which users played with chatbots out of curiosity, has been replaced by "Production Dependency." Companies are no longer asking whether AI can write; they are building entire business processes in which the AI is the uninterrupted executor.

2. Case Study: Google Search as a Productivity IDE

The most significant event of early 2026 was the expansion of Google AI Mode in March.

For 25 years, Google was a librarian—it gave you a list of books. With AI Mode, Google has become a Project Manager.

The "Canvas" Breakthrough

Google’s U.S. rollout of the "Canvas" feature within Search AI Mode changed the fundamental unit of search.

- The Old Search: "How to build a SaaS landing page in React." -> Google gives you 10 tutorials.

- The AI Mode Search: "Build me a SaaS landing page in React for a dog-walking app, pull current pricing from competitors, and save the code to my GitHub repo."

- The Result: Directly within the search interface, a split-pane "Canvas" opens. The AI drafts the code, uses its agentic tools to research competitor pricing in real-time, and provides a "Commit" button to push directly to your repository.

Google is turning Search into an IDE for Knowledge. It has realized that in 2026, users don't want "Information"; they want outcomes.

3. The Dependency Crisis: Anthropic’s "Extraordinary Demand"

If you needed proof that GenAI is now a vital utility, look no further than the "Blackout Week" of March 2026.

In early March, Anthropic’s Claude—widely considered the "Logic King" of 2026—experienced a series of high-profile outages. The cause? "Extraordinary and Unprecedented Demand."

The "QuitGPT" Migration

The surge followed a massive user migration known as the "QuitGPT" movement. After OpenAI’s controversial and highly publicized contract with the U.S. Department of Defense for tactical battlefield modeling, a significant portion of the enterprise and developer community moved their mission-critical workloads to Anthropic.

Why the Outages Mattered

In 2023, if ChatGPT went down, you just wrote your own email. In 2026, when Claude went down, Production Pipelines stalled.

- Software firms relying on Claude for automated code migration saw their build servers freeze.

- Marketing agencies using Claude-powered agents to manage 24/7 social campaigns saw their "Digital Teammates" go offline.

This dependency proves that foundation models are no longer "optional extras." They are the electricity of the 2026 economy.

4. Deconstructing the Workflow Engine: Three Core Categories

To build for 2026, you must understand the three flavors of workflow automation that are currently dominating the $91B market.

Category 1: Knowledge Work Automation (The Content Refinery)

This is the evolution of "Summarization." It's not just making a long text short; it's Cross-Source Synthesis.

- The Workflow: Feed 1,000 customer feedback transcripts, 5 competitor earnings calls, and 3 internal product roadmaps into the engine.

- The Engine Output: A prioritized list of "Top 5 Feature Requests" with associated budget estimates and a drafted PRD.

Category 2: Software Engineering Automation (The Agentic SDLC)

AI is moving from "Code Suggestion" to "Code Gardening."

- The Workflow: Identifying outdated libraries, checking for architectural violations, and performing "Strangler Fig" migrations of legacy codebases.

- The Engine Output: A fully migrated, tested, and linted codebase where the human "Grand Architect" only performs a final review.

Category 3: Business Process Automation (The RFP Slayer)

Complex administrative tasks are now being "Wrapped" in LLM reasoning.

- The Workflow: Processing a 200-page RFP.

- The Engine Action: The AI reads the requirements, queries the company's internal wiki for past answers, identifies gaps where new documentation is needed, pings the relevant SME for that info, and compiles the final proposal.

5. Case Study: The Autonomous Customer Success Center

In 2024, "Customer Success" meant a human checking a dashboard and emailing a client when their usage dropped. In 2026, it is a Closed-Loop Reasoning Circuit.

The Flow:

- Detection: An agent monitoring the Snowflake Data Cloud detects that a "Tier 1" client has stopped using the API's 'Search' endpoint.

- Hypothesis: The agent queries the client's recent support tickets and identifies a recurring error related to "Token Limits."

- Action: The agent autonomously spins up a custom documentation page for that client, showing them exactly how to implement the new "Stream-Chunking" protocol that solves their token limit issue.

- Engagement: It drafts a Slack message to the client's shared workspace: "Hey Team, I noticed you were hitting some search timeouts. I've built a custom implementation guide for you here to fix it."

- Outcome: The client's usage returns to normal within 2 hours, without a human CSR ever opening a ticket.

6. Technical Deep Dive: The Architecture of "AI Mode"

What makes a system an "Automation Engine" rather than a "Chatbot"? It comes down to State and Tool-Native Logic.

Pillar 1: Multi-Step Reasoning Scopes

Unlike a standard chat session, an "AI Mode" session (like in Google’s 2026 rollout) maintains a Dynamic DAG (Directed Acyclic Graph) of tasks.

- When you ask Google AI Mode to "Build a startup landing page," it doesn't just write HTML.

- It creates a task for "Logo Generation," a task for "Copywriting," and a task for "Infrastructure Provisioning."

- If the "Logo Generation" task fails, the system doesn't crash; it reroutes the logic to an alternative model or asks for more user input.
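The rerouting behavior described above can be sketched in a few lines. This is a minimal illustration, not Google's implementation: the task names, the runner functions, and the `NEEDS_USER_INPUT` marker are all assumptions made up for the example. It uses Python's standard `graphlib` module to order tasks by dependency and tries each task's alternatives in turn.

```python
from graphlib import TopologicalSorter

# Hypothetical task runners; each receives the results gathered so far.
def primary_logo(results):
    raise RuntimeError("logo model unavailable")  # simulate a failing model

def fallback_logo(results):
    return "logo-from-fallback-model"

def write_copy(results):
    return "headline and body copy"

def assemble_page(results):
    # Depends on the outputs of the upstream tasks.
    return f"<page logo={results['logo']!r} copy={results['copywriting']!r}>"

# Each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "logo": set(),
    "copywriting": set(),
    "landing_page": {"logo", "copywriting"},
}

# Ordered alternatives per task: primary first, then fallbacks.
RUNNERS = {
    "logo": [primary_logo, fallback_logo],
    "copywriting": [write_copy],
    "landing_page": [assemble_page],
}

def run_dag(dependencies, runners):
    """Run tasks in dependency order, rerouting to the next runner on failure."""
    results = {}
    for task in TopologicalSorter(dependencies).static_order():
        for runner in runners[task]:
            try:
                results[task] = runner(results)
                break
            except Exception:
                continue  # try the next alternative instead of crashing
        else:
            # Every alternative failed: flag the task for the user.
            results[task] = "NEEDS_USER_INPUT"
    return results

results = run_dag(DEPENDENCIES, RUNNERS)
print(results["logo"])
```

The point of the design is that a failure is local to one node: the graph keeps executing, and only a task with no working alternative is escalated to the user.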

Pillar 2: Persistent Working Sets

The true moat of 2026 GenAI is the Working Set. This is a persistent virtual directory where the AI saves all intermediate artifacts. If you close your browser and come back three days later, the AI "remembers" the specific versions of the files it was working on and can resume the workflow exactly.
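One way to picture a Working Set is as a directory of versioned artifacts plus a manifest that survives between sessions. The sketch below is an assumption about how such a store could work, not a description of any vendor's product; the `WorkingSet` class, the `manifest.json` file name, and the `.vN` suffix scheme are all hypothetical.

```python
import hashlib
import json
import tempfile
from pathlib import Path

class WorkingSet:
    """A directory of versioned artifacts with a JSON manifest (illustrative only)."""

    def __init__(self, root):
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)
        self.manifest_path = self.root / "manifest.json"
        # Reload the manifest if an earlier session left one behind.
        if self.manifest_path.exists():
            self.manifest = json.loads(self.manifest_path.read_text())
        else:
            self.manifest = {}

    def save(self, name, content):
        """Store a new version of an artifact and record it in the manifest."""
        versions = self.manifest.setdefault(name, [])
        filename = f"{name}.v{len(versions) + 1}"
        (self.root / filename).write_text(content)
        digest = hashlib.sha256(content.encode()).hexdigest()
        versions.append({"file": filename, "sha256": digest})
        self.manifest_path.write_text(json.dumps(self.manifest, indent=2))
        return filename

    def latest(self, name):
        """Return the content of the most recent version of an artifact."""
        entry = self.manifest[name][-1]
        return (self.root / entry["file"]).read_text()

with tempfile.TemporaryDirectory() as tmp:
    session_one = WorkingSet(tmp)
    session_one.save("landing_page.html", "<h1>Draft 1</h1>")
    session_one.save("landing_page.html", "<h1>Draft 2</h1>")

    # Simulate returning days later: a fresh object reads the same directory.
    session_two = WorkingSet(tmp)
    resumed_text = session_two.latest("landing_page.html")
    version_count = len(session_two.manifest["landing_page.html"])
```

Because every version is kept and hashed, a resumed session can tell exactly which file it was working on rather than guessing from the latest timestamp.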

7. The Productivity Paradox 2.0: Why Phase 1 Failed

In 2024, CEOs were frustrated. They had spent billions on "AI Copilots," but their bottom line wasn't moving. This was the Productivity Paradox 2.0.

The "Context Switch" Tax

Early GenAI required humans to be the bridge. You got a draft from ChatGPT, then you manually moved it to Word. You got a code snippet, then you manually moved it to VS Code. The time saved in "Generation" was often lost in "Orchestration."

How Phase 2 Solves It

In 2026, the AI moves the data for you. By integrating directly with the "OS of the Enterprise," GenAI has removed the context-switch tax. We are now seeing the first true GDP Lift from AI, specifically in the services and software sectors where "Action-centric GenAI" has replaced up to 40% of administrative overhead.

8. Enterprise Governance: SOC for AI Agents

As AI takes over business processes, the "Trust but Verify" model has become formalized.

The AICPA Agent Audit

In early 2026, the AICPA released the "SOC for Agents" (System and Organization Controls) framework. Enterprises now require their AI vendors to prove:

- Determinism: That the workflow engine will reach the same logical conclusion given the same data.

- Auditability: A clear, human-readable trace of why an agent made a financial or legal decision.

- Isolation: Ensuring that Agent A (Marketing) cannot "Pivot" its logic to access the tools of Agent B (Payroll).
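The Isolation and Auditability requirements can be illustrated together with a small allowlist wrapper. This is a sketch under stated assumptions: the `AgentSandbox` class, the tool names, and the log fields are invented for the example and are not taken from any published audit framework.

```python
from dataclasses import dataclass, field

@dataclass
class AgentSandbox:
    """Wraps an agent's tool calls with an allowlist and an audit trail."""
    name: str
    allowed_tools: frozenset
    audit_log: list = field(default_factory=list)

    def call(self, tool, reason, registry):
        allowed = tool in self.allowed_tools
        # Record every attempt, including denied ones, in human-readable form.
        self.audit_log.append(
            {"agent": self.name, "tool": tool, "reason": reason, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"{self.name} may not call {tool}")
        return registry[tool]()

# Hypothetical tools shared across the organization.
TOOL_REGISTRY = {
    "post_campaign": lambda: "campaign posted",
    "run_payroll": lambda: "payroll executed",
}

marketing = AgentSandbox("marketing", frozenset({"post_campaign"}))
ok_result = marketing.call("post_campaign", "weekly launch", TOOL_REGISTRY)

try:
    marketing.call("run_payroll", "attempted pivot", TOOL_REGISTRY)
    blocked = False
except PermissionError:
    blocked = True
```

Logging before the permission check matters: the denied attempt is exactly the event an auditor will want to see.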

9. The Strategic Horizon: From "Human in the Loop" to "Human as Architect"

As Generative AI matures into a Workflow Engine, the role of the human worker is moving up the stack.

The Rise of the "Process Architect"

The most valuable employees are not the best "writers" or "coders." They are the ones who can Deconstruct a Business Goal into an Automated Flow. They understand the limits of models, the security requirements of data, and the edge cases of human behavior.

The "Integrated Surface" Future

As we saw with Google AI Mode, the very concept of an "app" is disappearing. In the future, you won't "go to an app" to work. Your Personal Workflow Orchestrator will pull the capabilities of multiple apps into a single, goal-oriented interface. The "Dashboard" is being replaced by the "Canvas."

11. Strategic Comparison: OpenAI Operator vs. Claude Computer Use

As of March 2026, the two primary architectures for workflow automation have diverged. If you are an enterprise architect, choosing between them is the most important decision of the year.

OpenAI Operator: The API-First Orchestrator

OpenAI's "Operator" model focuses on Background Process Execution. It is designed to live inside your servers, not your browser.

- Core Vector: Sub-agent delegation. Operator acts as a "General" that spins up 50 tiny models to solve a task in parallel.

- Killer Feature: Hyper-efficient MCP integration. It has native, hard-coded logic for SQL and Python that is faster than any third-party connector.

- Best For: High-volume data processing, ERP management, and complex back-office automation.

Claude Computer Use: The UI-Native Teammate

Anthropic has taken the opposite approach. Claude's "Computer Use" is designed to See and Use the Screen just like a human.

- Core Vector: Visual reasoning. It looks at a screenshot of your legacy desktop software (which has no API), identifies the "Submit" button, and clicks it.

- Killer Feature: "Few-Shot Interaction." You can show Claude a video of a human performing a task once, and it can replicate the visual workflow indefinitely.

- Best For: Bridging legacy systems, complex design tasks, and any workflow that requires "Human-like" visual verification.

12. Monitoring the Engine: The Rise of "Cognitive Observability"

You cannot manage a workflow engine with traditional server logs. In 2026, we have seen the birth of Cognitive Observability (CogObs).

Beyond 200 OK

Traditional logging tells you if a server responded. CogObs tells you why an agent made a decision.

- Trace Analysis: Tools like AgentOps and Langfuse allow you to replay an agent's "Thought Chain."

- Logic Debugging: If an automated workflow stalled, was it because of a network error, or because the model reached a "Logical Paradox" (e.g., two conflicting company policies)?
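To make the distinction concrete, here is a minimal sketch of a decision trace that separates an infrastructure failure from a logic conflict. It does not use the AgentOps or Langfuse APIs; the `TraceStep` structure and the two error labels are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class TraceStep:
    step: str
    thought: str                  # the agent's recorded reasoning at this step
    error: Optional[str] = None   # hypothetical labels: "network" or "policy_conflict"

def diagnose(trace: List[TraceStep]) -> str:
    """Return a human-readable explanation of the first stall in a trace."""
    for item in trace:
        if item.error == "network":
            return f"infrastructure failure at '{item.step}'"
        if item.error == "policy_conflict":
            return f"logic conflict at '{item.step}': {item.thought}"
    return "no stall recorded"

trace = [
    TraceStep("load_invoice", "Invoice 1042 loaded."),
    TraceStep(
        "approve_refund",
        "Policy A requires manager sign-off; Policy B requires refunds within 24h.",
        error="policy_conflict",
    ),
]
diagnosis = diagnose(trace)
print(diagnosis)
```

A traditional log would show both steps returning successfully at the HTTP level; only the recorded reasoning reveals that the agent stopped because two rules disagreed.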

13. The Future of "Self-Healing" Workflows

The holy grail of 2026 is the Autonomous Repair Loop. In Phase 1, if an automation broke (e.g., a website changed its layout), the human had to fix the script. In Phase 2, the GenAI engine Fixes Itself.

- Detection: The agent identifies that the "Search" button is no longer where it expected it to be.

- Self-Correction: It enters "Exploration Mode," finds the new button, updates its own internal "Tool Mapping," and notifies the human manager: "I've updated the workflow for [Tool X] to account for a UI change. No downtime occurred."
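The repair loop above can be sketched as a lookup that falls back to exploration when the stored selector no longer exists. Everything here is a simplifying assumption: the page is modeled as a dictionary of selector to visible label, and `locate`, `tool_mapping`, and the notification format are hypothetical names.

```python
def locate(element, page, tool_mapping, notify):
    """Find an element's selector, repairing the mapping if the UI has changed."""
    selector = tool_mapping.get(element)
    if selector in page:
        return selector  # detection passed: the button is where we expected

    # "Exploration Mode": look for any element whose visible label matches.
    for candidate, label in page.items():
        if label.strip().lower() == element.lower():
            tool_mapping[element] = candidate  # update the internal tool mapping
            notify(f"Updated mapping for {element!r}: {selector} -> {candidate}")
            return candidate

    raise LookupError(f"could not find {element!r}; escalate to a human")

tool_mapping = {"search": "#btn-search"}                   # what the agent learned earlier
new_page = {"#nav-find": "Search", "#nav-home": "Home"}   # the layout after a redesign
notifications = []

selector = locate("search", new_page, tool_mapping, notifications.append)
```

A real agent would explore with visual or DOM reasoning rather than an exact label match, but the control flow is the same: detect, explore, update the mapping, notify.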

14. Workforce Sociology: Displacement vs. The "Leverage Gap"

We must address the elephant in the room: What happens to the people whose workflows are now 30-40% automated?

The "Leverage Gap"

2026 is seeing a widening "Leverage Gap" between workers who know how to orchestrate these engines and those who don't.

- The Orchestrator: A junior marketer who manages 10 workflow agents is now producing the ROI of a VP.

- The Craftsman: A senior writer who refuses to use the engine is becoming an expensive luxury.

The Shift to "Intent Architect"

The most stable job in 2027 will be the Intent Architect. This role requires 50% business empathy (understanding what the goal is) and 50% technical logic (understanding how to chain agents to reach that goal).

15. The Anatomy of a Workflow Prompt: From Prose to Logic

In 2026, writing a prompt for a workflow engine is more akin to writing a Control Script than a letter. Here are the four requirements for a high-performance workflow prompt.

1. The Contextual State

You must explicitly define the "Before" and "After."

- "Current state: File 'lead_data.csv' exists with 500 rows. Slack token in environment. Success condition: All rows categorized and 'Priority' leads posted to #sales channel."

2. The Tool-use Schema

Don't let the model guess. Define the exact JSON schema of the tools it will touch.

- "Tool: scrape_profile. Schema: {url: 'string'}. Constraint: Never browse pages behind a login wall."
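A schema is most useful when it is also enforced outside the prompt. The following sketch checks a proposed call to scrape_profile before it runs. The `validate_call` helper and the login-path heuristic are assumptions for illustration; a production system would need a far more robust way to detect login walls.

```python
from urllib.parse import urlparse

# Tool name -> expected argument names and types (mirrors the prompt's schema).
TOOL_SCHEMAS = {"scrape_profile": {"url": str}}

# Hypothetical heuristic for the "no login wall" constraint.
LOGIN_HINTS = ("/login", "/signin")

def validate_call(tool, args):
    """Return a list of problems with a proposed tool call (empty means valid)."""
    schema = TOOL_SCHEMAS.get(tool)
    if schema is None:
        return [f"unknown tool '{tool}'"]

    errors = []
    for key, expected in schema.items():
        if key not in args:
            errors.append(f"missing '{key}'")
        elif not isinstance(args[key], expected):
            errors.append(f"'{key}' must be {expected.__name__}")
    for key in args:
        if key not in schema:
            errors.append(f"unexpected '{key}'")

    url = args.get("url")
    if isinstance(url, str):
        path = urlparse(url).path.lower()
        if any(hint in path for hint in LOGIN_HINTS):
            errors.append("url looks like a login page")
    return errors

errors_ok = validate_call("scrape_profile", {"url": "https://example.com/people/jane"})
errors_login = validate_call("scrape_profile", {"url": "https://example.com/login"})
errors_type = validate_call("scrape_profile", {"url": 42})
```

Returning a list of problems, rather than raising on the first one, gives the agent everything it needs to correct the call in a single retry.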

3. The Error Handling Logic (Explicit Retry)

Tell the agent what to do when things fail.

- "Rule: If a tool returns a 429 error, wait 30 seconds and retry once. If it fails again, log the error to the workflow_debug file and move to the next row."
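The same rule can be enforced in the orchestration code so that it does not depend on the model following instructions. This is a minimal sketch: `RateLimited` stands in for whatever your client raises on HTTP 429, and a plain list stands in for the workflow_debug file. The `sleep` parameter is injectable so the example runs instantly.

```python
import time

class RateLimited(Exception):
    """Hypothetical exception representing an HTTP 429 response from a tool."""

def call_with_retry(tool, row, debug_log, wait_seconds=30, sleep=time.sleep):
    """Call a tool; on a 429, wait and retry once, then log and give up."""
    for attempt in (1, 2):
        try:
            return tool(row)
        except RateLimited as exc:
            if attempt == 1:
                sleep(wait_seconds)  # wait before the single retry
            else:
                debug_log.append(f"row={row!r}: {exc}")
    return None  # signals the caller to move to the next row

calls = {"count": 0}

def flaky_tool(row):
    calls["count"] += 1
    if calls["count"] == 1:
        raise RateLimited("429 Too Many Requests")
    return f"processed {row}"

def always_limited(row):
    raise RateLimited("429 Too Many Requests")

workflow_debug = []   # stand-in for the workflow_debug file
waits = []            # records requested waits instead of actually sleeping

first = call_with_retry(flaky_tool, "row-1", workflow_debug, sleep=waits.append)
second = call_with_retry(always_limited, "row-2", workflow_debug, sleep=waits.append)
```

In production you would pass the default `time.sleep` and append to a real file; the control flow stays identical.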

4. The Reflexive Step

Force the agent to verify its own output before the loop continues.

- "Step: Compare the extracted JSON against the raw source text. Identify any discrepancies. Fix them before calling the commit tool."
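A reflexive step can also be backed by a deterministic check. The sketch below uses a deliberately simple rule, that every extracted value must appear verbatim in the source text, and only then calls the commit step. The function names and the sample record are assumptions; real extraction checks usually need normalization and fuzzy matching.

```python
def find_discrepancies(extracted, source_text):
    """Return fields whose extracted value does not appear verbatim in the source."""
    return {key: value for key, value in extracted.items()
            if str(value) not in source_text}

def commit_if_verified(extracted, source_text, commit):
    """Commit only when the extraction matches the source; otherwise return the issues."""
    issues = find_discrepancies(extracted, source_text)
    if issues:
        return issues  # hand back to the agent for correction
    commit(extracted)
    return {}

source = "Jane Doe, VP of Sales at Acme Corp, based in Austin."
draft = {"name": "Jane Doe", "company": "Acme Inc", "city": "Austin"}
committed = []

issues = commit_if_verified(draft, source, committed.append)

# After the agent fixes the flagged field, the commit goes through.
fixed = {**draft, "company": "Acme Corp"}
issues_after_fix = commit_if_verified(fixed, source, committed.append)
```

The value of the gate is that the commit tool is unreachable while any discrepancy remains, regardless of how confident the model sounds.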

16. Multi-LLM "Waterfall" Architectures: Optimizing for Cost

The most sophisticated workflow engines in 2026 use a Waterfall Model. Instead of sending every task to a model that costs $15 per 1M tokens (like GPT-5), we chain models based on the "Cognitive Weight" of the task.

The Stack:

- The Gatekeeper (Qwen3.5-0.8B): Sanitizes inputs and routes the task to the right sub-agent. Cost: Essentially zero.

- The Extractor (Gemini 3.1 Flash-Lite): Pulls structured data from raw documents. Excellent at high-throughput, low-latency parsing.

- The Processor (GPT-5.3 Instant): Performs the heavy logic, decision making, and tool orchestration.

- The Auditor (Claude 3.7): Reviews the final output for compliance and tone.

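This waterfall approach can reduce the operating cost of a workflow by as much as 80% compared to a single-model architecture, because most tokens are handled by the cheaper tiers and only the hardest steps reach the expensive models.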
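The cost logic is easy to model. The sketch below routes each task to the cheapest tier able to handle its cognitive weight and compares the bill with sending everything to a single $15-per-1M-token model. All tier names, weight thresholds, prices other than the $15 figure, and the task mix are hypothetical numbers chosen for illustration, so the resulting savings figure says nothing about real deployments.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Tier:
    name: str
    max_weight: int         # highest cognitive weight (1-10) this tier handles
    usd_per_million: float  # hypothetical price per 1M tokens

# Ordered from cheapest to most expensive; all values are made up.
TIERS = [
    Tier("small", 2, 0.05),
    Tier("mid", 5, 0.30),
    Tier("large", 8, 5.00),
    Tier("frontier", 10, 15.00),
]

def route(weight):
    """Pick the cheapest tier whose ceiling covers the task's weight."""
    for tier in TIERS:
        if weight <= tier.max_weight:
            return tier
    raise ValueError(f"weight {weight} exceeds every tier")

def cost(tasks, price_for):
    """Total USD for a list of (weight, tokens) tasks under a pricing function."""
    return sum(tokens / 1_000_000 * price_for(weight) for weight, tokens in tasks)

# Hypothetical task mix: (cognitive weight, tokens consumed).
tasks = [(1, 400_000), (4, 400_000), (7, 150_000), (10, 50_000)]

waterfall_cost = cost(tasks, lambda w: route(w).usd_per_million)
single_model_cost = cost(tasks, lambda w: 15.00)
savings = 1 - waterfall_cost / single_model_cost
print(f"waterfall ${waterfall_cost:.2f} vs single ${single_model_cost:.2f}")
```

The savings depend almost entirely on the task mix: the more of your tokens that genuinely need the top tier, the smaller the benefit.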

17. Horizon 2029: The "Global Workflow Mesh"

As we look toward the next three years, we are seeing the emergence of the Workflow Mesh. This is a world where workflows aren't just contained within one company. A "Supply Chain Workflow" at Nike will talk directly to a "Logistics Workflow" at UPS via an Autonomous API Bridge. They will negotiate, optimize and execute without a single human "Application" being opened.

18. The Psychology of Workflow Trust: The "Handoff" Plateau

The final barrier to full-chain workflow automation in 2026 isn't technical—it's psychological. Most organizations hit a "Trust Plateau" when an AI agent requests the power to move money or sign contracts.

The Stages of Trust:

- Observation: "I watch the AI do the task and check its draft."

- Approval: "The AI does the task; I hit the OK button."

- Delegation: "The AI does the task; I review the summary weekly."

- Integration: "The AI owns the outcome; I am notified only by exception."

To move to Stage 4, teams are adopting Probabilistic Safeguards. Instead of a binary "Yes/No," agents report a confidence score alongside each proposed action. If an agent is 99% sure but the transaction is >$10k, it automatically downgrades itself to Stage 2. This dynamic trust management is what allows CEOs to sleep while their "Synthetic Fleet" manages the midnight logistics of their empire.
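The downgrade rule is simple enough to express directly. In this sketch the $10k cap comes from the example above; the additional rule that low confidence also triggers a downgrade, the 0.99 threshold as a default, and the function name are assumptions.

```python
def effective_stage(granted_stage, confidence, amount_usd,
                    min_confidence=0.99, amount_cap=10_000):
    """Return the trust stage to apply to one action.

    Stages: 1 = Observation, 2 = Approval, 3 = Delegation, 4 = Integration.
    """
    too_large = amount_usd > amount_cap
    too_unsure = confidence < min_confidence  # assumption: not stated in the article
    if too_large or too_unsure:
        # Fall back to human approval, but never raise an agent above its grant.
        return min(granted_stage, 2)
    return granted_stage

routine = effective_stage(4, confidence=0.995, amount_usd=2_500)
large = effective_stage(4, confidence=0.99, amount_usd=25_000)
unsure = effective_stage(4, confidence=0.80, amount_usd=500)
```

Using `min` means the rule can only ever reduce autonomy: an agent that has only been granted Stage 1 stays at Stage 1 no matter how confident it is.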

19. Appendix: The 2026 Workflow Vocabulary

To navigate the meetings of 2026, you need to speak the language of the Engine.

- Orchestration Layer: The software that decides which agent gets which task.

- Agentic Drift: When an autonomous workflow slowly moves away from the user's original intent over many iterative loops.

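- MCP Handshake: The initialization exchange in which a Model Context Protocol (MCP) client and server agree on a protocol version and capabilities before any tool is called. In workflow discussions the term is also used loosely for the step where an agent authenticates to a secure data source.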

- Inference Warm-up: The process of pre-loading specialized LoRA adapters into VRAM to prevent the 300ms delay of "Cold-booting" a workflow.

20. Conclusion: The Real AI Revolution Has Only Just Begun

The transition from "GenAI as a Tool" to "GenAI as an Engine" is the defining business phase of this decade. It is the moment where "Digital Transformation" stopped being a PowerPoint slide and started being a real-time, self-optimizing reality.

The valuation of $91B is just the beginning. As these engines move from "Assisting" to "Owning" outcomes, we will see the birth of the first "Zero-Employee Unicorn"—a multi-billion dollar company run entirely by a human founder and a fleet of high-performance workflow engines.

Your business is at a crossroads. You can continue to "Play" with AI, or you can start Building the Engine.
