The QTS Water Fight Shows AI Data Centers Need Public Infrastructure Accounting

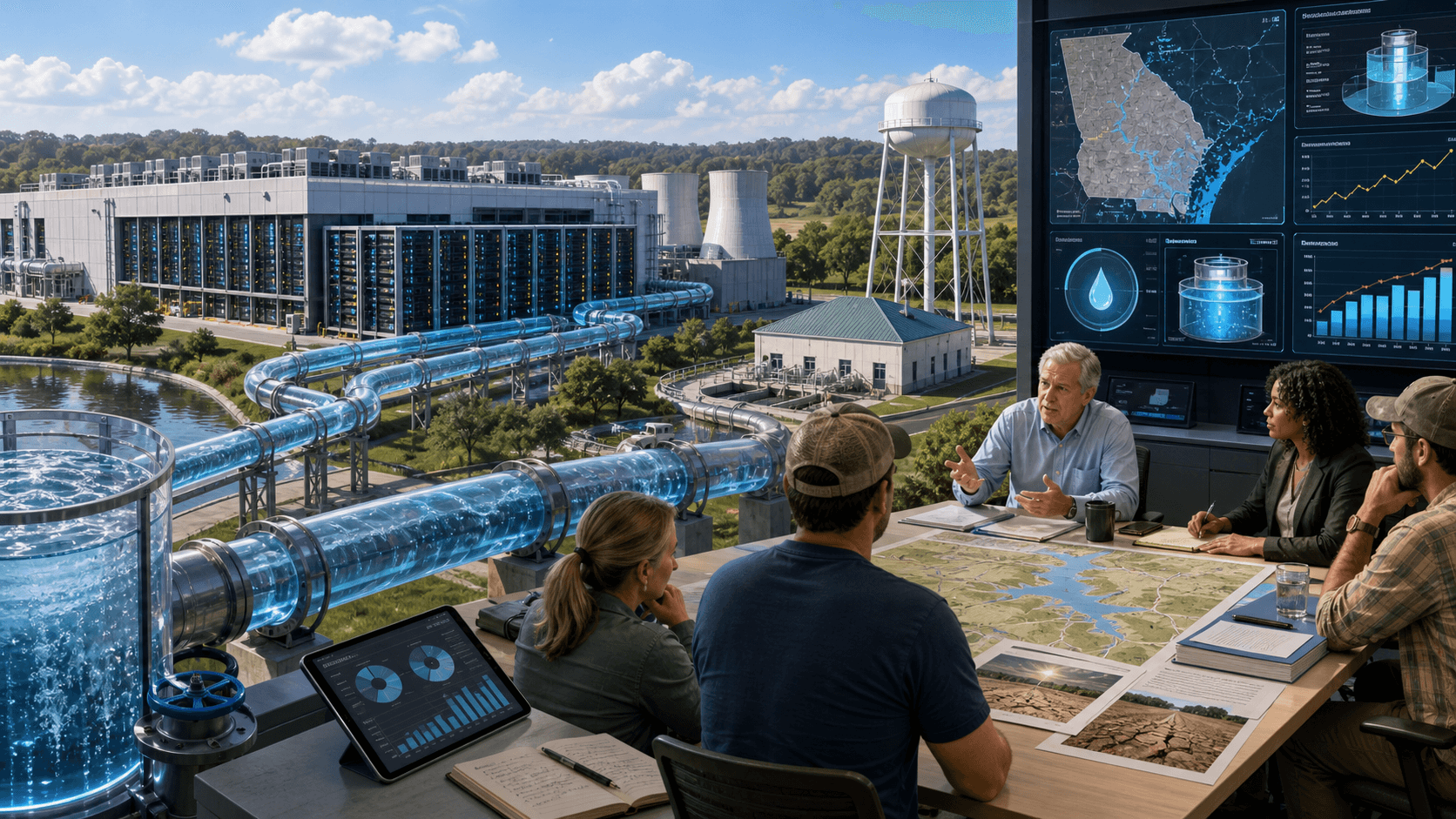

A Georgia data-center water dispute shows why AI infrastructure must make local utility impacts visible before trust collapses.

Court scrutiny of Sam Altman's outside stakes shows why frontier AI governance now has to account for capital networks.

Colorado lawmakers killed a data-center regulation push, leaving the AI power boom to collide with local energy and water concerns.

OpenAI's Chris Lehane is framing AI as an intelligence utility, raising harder questions for government and business operating models.

EU officials are exploring AI Act simplification as companies warn that complex rules could slow adoption without improving trust.

Anthropic donated Petri, its open-source alignment testing tool, signaling that simulated model audits are becoming shared AI safety infrastructure.

A Chinese court said companies cannot fire workers solely because AI can replace them, creating an early legal signal for AI labor disputes.

China's new campaign against disorder in AI apps highlights filing, security review, training data, and labeling as control points.

EU outreach to Anthropic over Mythos turns frontier AI safety into a live cybersecurity and banking resilience question.

CAISI is reportedly expanding pre-deployment testing with Google DeepMind, Microsoft, and xAI, making frontier model release governance harder to ignore.

U.S. officials are reportedly escalating claims that Chinese AI firms used distillation to replicate American frontier model capability.

NIST’s AI RMF critical infrastructure concept note gives utilities and operators an early map for trustworthy AI risk management.

The Pentagon’s classified AI agreements show how frontier models are moving from demos into defense networks with unresolved governance stakes.