Slopsquatting: How AI Hallucinations Became the Most Dangerous Weapon in Software Supply Chain Attacks

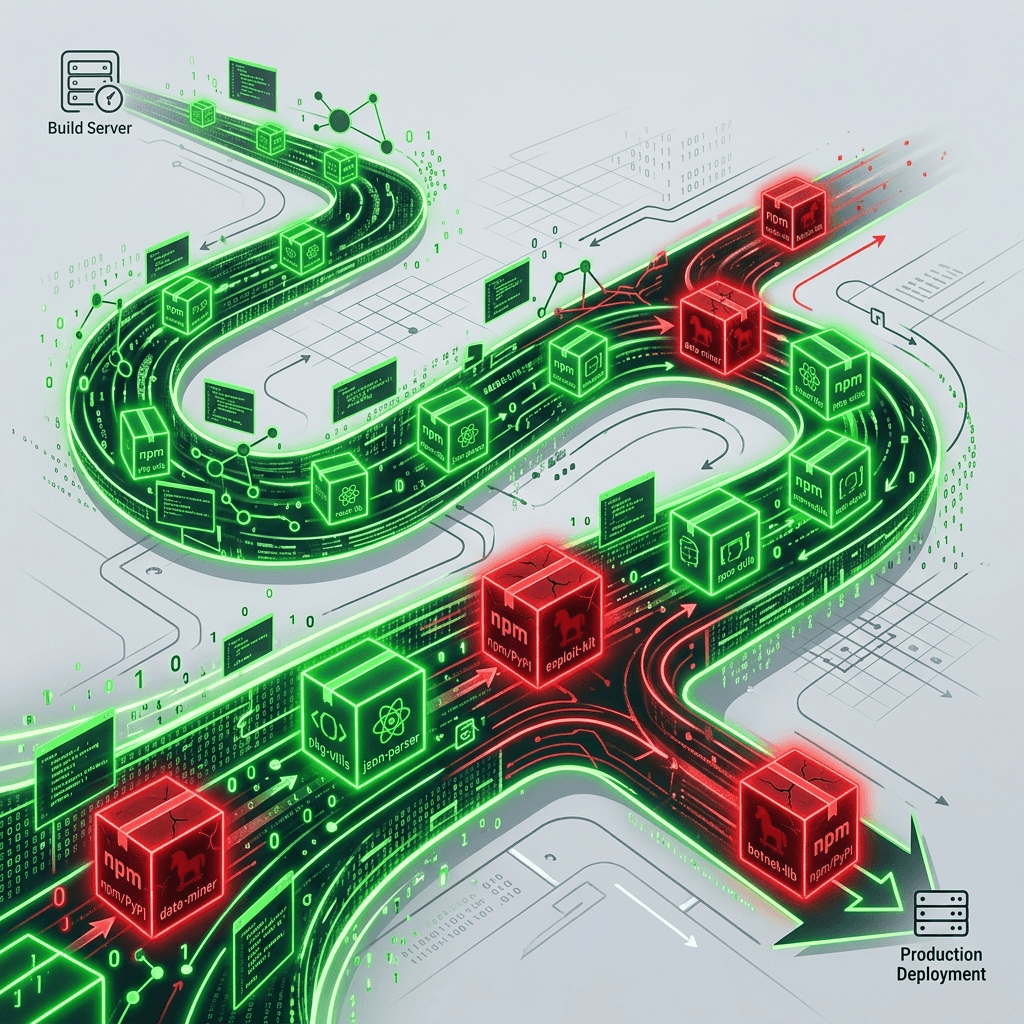

Slopsquatting exploits AI coding assistants that hallucinate fake package names. Attackers register those phantom packages on npm and PyPI, injecting malware into CI/CD pipelines worldwide.

The Package That Never Existed

On March 14, 2026, a security researcher at Aikido Security noticed something unusual in the npm registry. A package called react-codeshift—a plausible conflation of jscodeshift and react-codemod, two popular JavaScript transformation tools—had accumulated 1,847 downloads in its first week of existence. The package contained a single function that appeared to transform JSX syntax. It also contained a post-install script that silently exfiltrated every environment variable on the host machine to a C2 server operating out of a VPS in Moldova.

The package was not a typosquat. Nobody had misspelled anything. Instead, developers had been directed to install react-codeshift by their AI coding assistants—Claude, GPT-4o, Gemini Code Assist—which had hallucinated the package name with absolute confidence. The models had fabricated a dependency that sounded correct, looked correct, and would have been correct had it actually existed. Attackers had anticipated this hallucination, registered the phantom name preemptively, and loaded it with malware.

Welcome to the era of slopsquatting.

The Anatomy of a Slopsquatting Attack

Typosquatting has been a known threat in software security for over a decade. An attacker registers requets (missing an 's') on PyPI, hoping that a developer will mistype requests and install the malicious package instead. The defense is straightforward: spell carefully, review your dependency list, and use lockfiles so that the resolved dependency set cannot change without an explicit, reviewable update.

Slopsquatting operates on an entirely different attack surface. It does not require the victim to make an error. It requires the victim to trust their AI assistant—which, in 2026, is the default behavior of approximately 65% of professional developers according to Stack Overflow's annual survey.

The attack chain unfolds in four stages. First, an AI coding assistant hallucinates a package name during a natural language interaction. A developer asks, "What's the best npm package for validating JSON schemas with custom error messages?" The model responds with secure-json-validator—a name that sounds authoritative, specific, and entirely fabricated. Second, the attacker monitors popular AI model outputs for frequently hallucinated package names. This monitoring can be automated: run thousands of coding prompts through multiple models, collect the unique package names that appear in responses but don't exist in any public registry, and rank them by frequency. Third, the attacker registers the highest-frequency hallucinated names on npm, PyPI, RubyGems, or whatever registry the target ecosystem uses. The malicious packages contain functional-looking code to pass casual inspection, plus obfuscated post-install scripts that execute immediately during dependency resolution. Fourth, the developer installs the recommended package. The post-install script runs before any human or static analysis tool has a chance to review the code. Credentials are stolen. Backdoors are established. The attack is complete.

    sequenceDiagram
        participant Dev as Developer
        participant AI as AI Coding Assistant
        participant Reg as Package Registry (npm/PyPI)
        participant Atk as Attacker
        participant C2 as Command & Control Server
        Dev->>AI: "Best package for X?"
        AI->>Dev: "Use phantom-pkg-name"
        Note over AI: Hallucinated (doesn't exist)
        Atk->>Reg: Registers "phantom-pkg-name"
        Note over Atk: Pre-loaded with malware
        Dev->>Reg: npm install phantom-pkg-name
        Reg->>Dev: Downloads malicious package
        Note over Dev: Post-install script executes
        Dev->>C2: Env vars, API keys exfiltrated
        Note over C2: Attacker gains access

Why AI Hallucinations Are Predictable Targets

The most concerning aspect of slopsquatting is not that AI models hallucinate; every language model does. It's that the hallucinations are not random. Research published by the software security firm Socket in February 2026 demonstrated that when multiple leading AI models are presented with the same coding prompt, they frequently hallucinate the same non-existent package names. The models share similar training data distributions, similar architectures, and similar biases toward generating plausible-sounding compound names. Packages prefixed with secure-, packages suffixed with -utils, and compound names that fuse two real package names into one fictitious hybrid appear with statistical regularity.
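The three naming patterns described above are simple enough to test for mechanically. The following is a minimal sketch of such a check, not a detector anyone is known to run: the function names, the tiny `KNOWN_PACKAGES` sample, and the substring-based hybrid rule are all assumptions made for illustration, and a real tool would compare against the live registry index.

```python
import re

# Hypothetical sample of names that really exist; a real check would use
# the registry's full package index instead.
KNOWN_PACKAGES = {"jscodeshift", "react-codemod", "lodash", "flask", "flask-security"}


def tokens(name: str) -> list[str]:
    """Split a package name into lowercase tokens on '-', '_' and '.'."""
    return [t for t in re.split(r"[-_.]", name.lower()) if t]


def hallucination_signals(name: str, known: set[str] = KNOWN_PACKAGES) -> list[str]:
    """Return which of the naming patterns from the text `name` matches."""
    name = name.lower()
    if name in known:
        return []  # the package exists, so it is not a phantom name
    signals = []
    if name.startswith("secure-"):
        signals.append("secure- prefix")
    if name.endswith("-utils"):
        signals.append("-utils suffix")
    # Hybrid rule (an assumption): every token appears inside some real
    # package name, but no single real package supplies all of them.
    toks = tokens(name)
    donors = [{pkg for pkg in known if t in pkg} for t in toks]
    if (
        len(toks) >= 2
        and all(len(t) >= 3 for t in toks)
        and all(donors)
        and not set.intersection(*donors)
    ):
        signals.append("hybrid of real package names")
    return signals
```

With this sample, `hallucination_signals("react-codeshift")` reports the hybrid pattern because "react" comes from react-codemod and "codeshift" from jscodeshift. A match is only a reason to look closer; plenty of legitimate packages end in -utils.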

This predictability transforms hallucinations from an annoyance into an exploitable vulnerability. An attacker does not need to guess which fake packages developers will attempt to install. They can systematically generate the hallucination corpus by running a few thousand prompts through publicly available models, collecting the phantom names, and registering the ones most likely to be hallucinated again by other users with different prompts.
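Registry operators and security teams can run the same measurement defensively, to learn which phantom names deserve a reservation or a watch rule. The sketch below assumes the package names have already been extracted from each model response and that `registry` is a set of names known to exist; both inputs, and the function name, are hypothetical.

```python
from collections import Counter


def rank_phantom_names(
    suggested: list[list[str]], registry: set[str]
) -> list[tuple[str, int]]:
    """Count, per name, how many responses suggested a package that is
    missing from the registry, most frequent first."""
    counts: Counter[str] = Counter()
    for names in suggested:
        # Build a set so a response that repeats a name counts only once.
        counts.update({n.lower() for n in names} - registry)
    return counts.most_common()
```

The names at the top of the ranking are the ones most likely to be suggested again, which is what makes them worth blocking before anyone else registers them.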

The scale of the problem is sobering. Independent audits have found that approximately twenty percent of AI-generated code samples contain at least one hallucinated dependency name. With millions of developers now using AI assistants daily, that translates to hundreds of thousands of potential installation attempts for phantom packages each month. Even if only a small fraction of those attempts correspond to packages that an attacker has registered, the attack surface dwarfs anything the software supply chain has previously confronted.

The Automation Amplifier: Agentic AI and CI/CD Pipelines

Slopsquatting's danger escalates dramatically in environments where AI agents, not humans, make dependency decisions. The shift toward agentic AI in software development means that autonomous systems are increasingly responsible for managing project dependencies, updating packages, and resolving compatibility conflicts. When an AI coding agent encounters a missing dependency during an automated build, it may attempt to install one based on its own assessment of what package should exist, effectively hallucinating a dependency and installing it without any human in the loop.

In continuous integration and continuous deployment pipelines, this scenario is particularly devastating. A CI/CD system running on ephemeral cloud containers may spin up, install dependencies (potentially including hallucinated ones), execute tests, and deploy to production—all within minutes. The malicious post-install script executes in an environment with access to deployment credentials, database connection strings, and API keys. By the time a human reviews the build logs, the damage is done.
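On npm, install-time code runs through the `preinstall`, `install`, and `postinstall` entries in a package's `package.json`, and `npm install --ignore-scripts` skips them. One way to see what a pipeline would have executed is to install with scripts disabled and then list every package that declares such a hook. The sketch below assumes a conventional `node_modules` layout; the function names are illustrative.

```python
import json
from pathlib import Path

# npm lifecycle hooks that run automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(manifest: dict) -> dict[str, str]:
    """Return the install-time scripts declared in a parsed package.json."""
    scripts = manifest.get("scripts") or {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


def scan_node_modules(root: str) -> dict[str, dict[str, str]]:
    """Map each package directory under `root` to its install-time scripts."""
    findings = {}
    for path in Path(root).glob("**/package.json"):
        try:
            manifest = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            continue  # unreadable or malformed manifest: skip it
        if not isinstance(manifest, dict):
            continue
        hooks = install_hooks(manifest)
        if hooks:
            findings[str(path.parent)] = hooks
    return findings
```

Running the scan only after a normal install would be too late, since the hooks would already have executed with the pipeline's credentials in scope.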

Enterprise organizations running Kubernetes-based microservices architectures face an especially concentrated risk. A single compromised dependency in a shared library can propagate across dozens of services during the next deployment cycle. The blast radius of a single slopsquatted package in a monorepo with shared dependency resolution can affect every service in the organization.

| Attack Vector | Typosquatting | Slopsquatting |
|---|---|---|
| Victim error required | Yes (misspelling) | No (trusts AI) |
| Predictability | Low (random typos) | High (repeatable hallucinations) |
| Detection difficulty | Moderate (diff tools) | High (names look legitimate) |
| Automation potential | Limited | Fully automatable |
| Agentic AI risk | Low | Critical |
| Scale per campaign | Hundreds of victims | Thousands to millions |
| Attack surface growth | Linear | Exponential (more AI users) |

Real-World Incidents and the Growing Casualty List

The react-codeshift incident was not the first slopsquatting attack, merely the first to gain widespread public attention. Aikido Security's retrospective analysis identified at least fourteen confirmed slopsquatting packages that had been active on npm and PyPI during Q1 2026, collectively accumulating over 120,000 downloads before removal. Several of these packages had been live for weeks before detection, suggesting that existing registry scanning tools, which were designed to catch typosquats rather than slopsquats, were insufficient.

One particularly sophisticated campaign targeted the Python ecosystem. The attacker registered flask-security-utils, a package name that Claude, GPT-4o, and Gemini all hallucinated with high frequency when asked about Flask application security. The package included actual security utility functions—input sanitization helpers, CSRF token generators—that passed basic code review. Hidden inside a decorative logging module was a reverse shell that activated only when the package detected it was running inside a Kubernetes pod, specifically targeting high-value cloud infrastructure.

The financial impact of these attacks remains difficult to quantify because many compromised organizations lack the telemetry to detect them. Security firms estimate that AI-facilitated supply chain attacks cost enterprises between $2.3 billion and $4.1 billion annually, though these figures necessarily undercount incidents that go undetected. The average time to detect a slopsquatted package in the wild is currently estimated at eleven days—an eternity in cybersecurity terms.

Why Traditional Defenses Fail

Existing software supply chain security tools were designed for a world where humans chose their dependencies deliberately. Lockfiles prevent unauthorized package additions but don't help when the initial installation was performed intentionally (by a developer following AI advice). Software composition analysis tools can identify known vulnerabilities in existing packages but struggle with packages that have no vulnerability history because they were created by the attacker specifically for this campaign. Static analysis can detect obvious malware patterns—command execution, network connections, file system access—but sophisticated attackers use obfuscation, time-delayed execution, and environment-checking to evade these scans.

The fundamental challenge is epistemic. When a seasoned developer installs lodash, they do so based on years of community trust, millions of weekly downloads, and personal familiarity with the package's API. When an AI assistant recommends lodash-deep-merge-utils, the developer must decide whether the package is a legitimate, less-popular alternative or a hallucination. The AI's confident delivery—"I recommend lodash-deep-merge-utils for recursive object merging with configurable depth limits"—provides no signal to distinguish real from fabricated.

This is the core security failure of AI-assisted development: the confidence of a recommendation tells the developer nothing about whether it can be verified. A model delivers a hallucinated name as fluently as a real one, because the hallucinated name is the one that best satisfies the statistical patterns in its training data. The more a package name sounds like it should exist, the more readily a model will produce it, and the more likely a developer is to trust it.

Defensive Strategies for Engineering Teams

Defending against slopsquatting requires changes at the individual, organizational, and ecosystem levels. No single intervention is sufficient; the attack surface spans the entire dependency supply chain from AI suggestion to production deployment.

At the individual level, the most important behavioral change is treating AI package recommendations as unverified hypotheses rather than authoritative guidance. Before running any installation command generated by an AI assistant, developers should manually verify that the package exists on the official registry, check its download count and publication date, and review its source code. A package with zero downloads or a publication date within the last thirty days should trigger immediate suspicion.
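The existence, age, and download checks described above can be scripted so they run before every install. The sketch below is one possible shape, with assumptions stated plainly: `assess_package` and `fetch_npm_facts` are illustrative names, the lookup handles unscoped npm names only, and it relies on the public npm endpoints as I understand them (the package document at `registry.npmjs.org/<name>` with a `time.created` field, and the download counts at `api.npmjs.org/downloads/point/last-week/<name>`). Reviewing the source code remains a manual step.

```python
from __future__ import annotations

import json
import urllib.error
import urllib.request
from datetime import datetime, timedelta, timezone


def assess_package(
    created: datetime | None,
    weekly_downloads: int,
    now: datetime | None = None,
    min_age_days: int = 30,
) -> list[str]:
    """Return the warnings from the text that apply; an empty list means none."""
    now = now or datetime.now(timezone.utc)
    if created is None:
        return ["not found on the registry"]
    warnings = []
    age = now - created
    if age < timedelta(days=min_age_days):
        warnings.append(f"published {age.days} days ago (under {min_age_days})")
    if weekly_downloads == 0:
        warnings.append("zero downloads in the last week")
    return warnings


def fetch_npm_facts(name: str) -> tuple[datetime | None, int]:
    """Look up creation time and last-week downloads for an unscoped npm name."""
    try:
        url = f"https://registry.npmjs.org/{name}"
        with urllib.request.urlopen(url, timeout=10) as resp:
            created_raw = json.load(resp)["time"]["created"]
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return None, 0  # the registry has no such package
        raise
    created = datetime.fromisoformat(created_raw.replace("Z", "+00:00"))
    try:
        url = f"https://api.npmjs.org/downloads/point/last-week/{name}"
        with urllib.request.urlopen(url, timeout=10) as resp:
            downloads = int(json.load(resp).get("downloads", 0))
    except urllib.error.HTTPError:
        downloads = 0  # assumption: treat missing statistics as zero downloads
    return created, downloads
```

A call such as `assess_package(*fetch_npm_facts("some-package"))` returns the list of warnings. Separating the network lookup from the decision keeps the thresholds easy to test and to tune.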

At the organizational level, policy engines that integrate with vulnerability databases like OSV and the GitHub Advisory Database should be deployed at the build pipeline level. These engines should automatically quarantine any dependency that cannot be verified against a curated allowlist. Organizations should maintain an internal registry of approved packages and configure their CI/CD pipelines to block installations from public registries unless the package appears on the allowlist.
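As a rough illustration of the allowlist gate, the sketch below reads a Python `requirements.txt` and reports every dependency that is not on an approved list. It is an assumption-laden sketch rather than a full parser: it handles plain `name` plus version-specifier lines, skips option lines such as `-r other.txt`, and does not handle URL or VCS requirements. The function names are illustrative; the name normalization follows PEP 503.

```python
from __future__ import annotations

import re


def normalize(name: str) -> str:
    """Normalize a project name as PEP 503 describes."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(line: str) -> str | None:
    """Extract the project name from one requirements.txt line, if any."""
    line = line.split("#", 1)[0].strip()  # drop comments
    if not line or line.startswith("-"):
        return None  # blank line or pip option such as -r / --hash
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    return normalize(match.group(0)) if match else None


def blocked_requirements(requirements_text: str, allowlist: set[str]) -> list[str]:
    """Return dependencies that are absent from the allowlist, in file order."""
    allowed = {normalize(name) for name in allowlist}
    blocked: list[str] = []
    for line in requirements_text.splitlines():
        name = requirement_name(line)
        if name is not None and name not in allowed and name not in blocked:
            blocked.append(name)
    return blocked
```

A pipeline step would fail the build whenever `blocked_requirements` returns a non-empty list, forcing a human to add the package to the allowlist deliberately.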

At the ecosystem level, package registries need to evolve. npm, PyPI, and RubyGems should implement "reservation" systems that flag recently registered packages matching common AI hallucination patterns. Collaboration between AI model providers and registry operators—sharing data about frequently hallucinated package names—could enable proactive blocking of phantom name registrations before attackers claim them. Anthropic, OpenAI, and Google should invest in real-time registry validation that cross-references their model outputs against actual package existence before presenting recommendations to users.
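To make the provider-side idea concrete, here is a minimal sketch of a post-processing step that scans a model's answer for install commands and reports the packages a registry lookup could not confirm. Everything here is hypothetical: the regular expression covers only `npm install`, `npm i`, and `pip install` commands that sit on their own line, and `exists` stands in for whatever registry lookup a provider would actually use.

```python
import re
from typing import Callable

# Matches an install command on its own line, optionally after a "$ " prompt.
INSTALL_CMD = re.compile(
    r"^\s*(?:\$\s*)?(npm\s+(?:install|i)|pip\s+install)\s+(.+)$", re.MULTILINE
)


def unverified_packages(
    answer: str, exists: Callable[[str, str], bool]
) -> list[tuple[str, str]]:
    """Return (ecosystem, name) pairs from install commands in `answer`
    that `exists(ecosystem, name)` does not confirm."""
    missing: list[tuple[str, str]] = []
    for command, args in INSTALL_CMD.findall(answer):
        ecosystem = "npm" if command.startswith("npm") else "pypi"
        for arg in args.split():
            if arg.startswith("-"):
                continue  # command-line flag, not a package
            # Strip pip version specifiers and extras, e.g. pkg==1.2 or pkg[extra].
            arg = re.split(r"[=<>~!\[]", arg, maxsplit=1)[0]
            # Strip an npm version tag (pkg@1.2.3) but keep a leading @scope.
            name = arg[:1] + arg[1:].split("@", 1)[0]
            pair = (ecosystem, name)
            if name and pair not in missing and not exists(ecosystem, name):
                missing.append(pair)
    return missing
```

A provider could use the result to suppress the command, attach a warning, or trigger a regeneration. That policy choice is outside the scope of this sketch.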

The Frontier Model Forum Response

In a development that suggests the industry recognizes the severity of this threat, OpenAI, Anthropic, and Google have begun sharing threat intelligence through the Frontier Model Forum specifically aimed at combating AI-facilitated supply chain attacks. The intelligence-sharing arrangement, announced in March 2026, focuses initially on cataloging frequently hallucinated package names across all three companies' model families and providing this data to registry operators for preemptive blocking.

This collaboration represents an unusual alignment of competitive interests. All three companies face reputational risk if their models become known vectors for malware distribution. A developer who installs a slopsquatted package on the recommendation of Claude, GPT, or Gemini will blame the AI assistant—and by extension, the company that built it—for the resulting security breach. The commercial incentive to reduce hallucination-driven security incidents is strong enough to override the usual competitive dynamics.

Whether this collaboration extends to technical solutions—such as real-time package verification built directly into model inference pipelines—remains to be seen. The latency implications of checking every package name against a live registry during conversation are non-trivial, but the alternative—continuing to recommend packages that might not exist and might be loaded with malware—is commercially untenable.

The Uncomfortable Truth About AI-Assisted Development

Slopsquatting forces a confrontation with the fundamental trade-off of AI-assisted software development. The value of AI coding assistants derives from their ability to provide confident, specific recommendations at the speed of conversation. The danger of AI coding assistants derives from exactly the same capability. Confidence and specificity are indistinguishable from accuracy when the developer lacks the context to evaluate the recommendation independently.

The software industry has spent decades building trust mechanisms—code reviews, dependency audits, automated testing—that assume human agency at every critical decision point. AI coding assistants bypass these mechanisms not through any malicious intent but through the mundane reality of how developers interact with them. A developer who asks an AI for a package recommendation and receives a confident answer does not typically open a browser, navigate to npmjs.com, and verify the package's existence. They copy the installation command and run it. The tools are designed to reduce friction. Slopsquatting is what happens when friction was the last line of defense.

The implications extend beyond any single vulnerability class. As AI agents assume increasing autonomy over development workflows—managing dependencies, writing tests, deploying code—the attack surface for hallucination-driven exploits will expand correspondingly. Slopsquatting is the first major category of AI hallucination weaponization. It will not be the last.

The Registry Governance Crisis

The slopsquatting epidemic has exposed foundational weaknesses in how open-source package registries govern namespace allocation. npm, PyPI, and RubyGems all operate on a first-come, first-served registration model: anyone can register any unused package name, instantly, with minimal or no verification of intent. This model, designed for an era when package naming was a low-stakes coordination problem among well-intentioned developers, becomes a security liability when adversaries can predict—with statistical precision—which names developers will attempt to install.

The npm registry has responded with a pilot program called "phantom name quarantine," which automatically holds package registrations that match common AI hallucination patterns flagged by collaborating model providers. The system works by comparing new package name registrations against a continuously updated database of names that appear frequently in AI-generated code samples but do not correspond to any existing package. Names that trigger the quarantine are not blocked outright—they are held for a five-day review period during which the npm security team verifies the registrant's identity and the package's intended functionality.
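The quarantine rule as described reduces to a lookup plus a timer. The following sketch shows that logic only; it is not npm's implementation, and `review_registration`, `phantom_names`, and the return values are hypothetical stand-ins.

```python
from datetime import datetime, timedelta, timezone

REVIEW_PERIOD = timedelta(days=5)  # review window stated in the text


def review_registration(
    name: str, phantom_names: set[str], now: datetime
) -> tuple[str, datetime]:
    """Return ("hold", release_time) for names in the phantom-name database,
    otherwise ("publish", now)."""
    if name.lower() in phantom_names:
        return "hold", now + REVIEW_PERIOD
    return "publish", now
```

The hold does not reject the registration. It only delays publication until the review period ends, which matches the "held, not blocked" behavior described above.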

PyPI has taken a different approach, implementing a trust-tiering system that assigns reputation scores to package publishers based on their registration history, community contributions, and identity verification status. Packages published by low-trust accounts are subjected to automated static analysis before they become downloadable, and they display a prominent warning badge in the registry interface. This system does not prevent slopsquatting registrations, but it increases the friction and visibility associated with suspicious packages.

Neither solution is sufficient. The fundamental challenge is that open-source registries serve a dual purpose: they are both distribution platforms and trust signals. When a developer installs a package from npm, they implicitly trust that the registry has performed some level of quality assurance—even though npm explicitly disclaims any such assurance. The gap between perceived trust and actual trust is where slopsquatting thrives.

The Cyber Insurance Reckoning

The insurance industry has been surprisingly fast to recognize slopsquatting as a material risk category. Lloyd's of London syndicates that underwrite cyber insurance policies have begun adding explicit exclusion language for "AI-facilitated supply chain compromise" in new policy issuances. The exclusion language is broad: it covers any security incident in which an AI system's recommendation contributed to the installation of malicious software, regardless of whether the recommendation was the proximate or contributing cause of the breach.

This exclusion creates a coverage gap that enterprises are only now beginning to understand. A company that suffers a data breach because an AI assistant recommended a slopsquatted package may find that its cyber insurance policy does not cover the resulting losses. The logic from the insurer's perspective is straightforward: the policyholder introduced the risk by trusting an AI system to make security-relevant decisions without human verification. This is analogous to existing exclusions for negligent credential management—if you leave your database password in a public GitHub repository, your cyber insurance is unlikely to cover the resulting breach.

Enterprise risk managers are responding by mandating organizational policies that prohibit the direct installation of AI-recommended packages without human verification. These policies add friction to the development process—exactly the friction that AI coding assistants were designed to remove—but may become prerequisites for maintaining cyber insurance coverage at reasonable premiums.

The financial exposure is substantial. Average enterprise data breach costs now exceed $4.8 million, and supply chain compromises typically affect multiple organizations simultaneously, creating aggregate losses that can reach hundreds of millions. If even a fraction of these breaches trace back to AI-facilitated package recommendations, the liability implications for AI model providers could be significant.

The Model Provider Liability Question

Slopsquatting raises unresolved questions about the legal liability of AI model providers. When Claude, GPT, or Gemini recommends a package that turns out to be malware, who bears responsibility? The developer who installed it? The model provider whose system generated the recommendation? The registry that hosted the malicious package? The attacker who uploaded it?

Current terms of service for all major AI coding assistants include broad disclaimers of liability for the accuracy of their outputs. These disclaimers have not yet been tested in court against a supply chain security breach. Legal scholars at Stanford's Center for Internet and Society have argued that the disclaimers may not survive judicial scrutiny if a court determines that the AI provider had constructive knowledge of the hallucination risk—knowledge that is now extensively documented in published research—and failed to implement reasonable safeguards.

The potential for class action litigation is significant. If a single hallucinated package name causes breaches at hundreds of organizations that all received the same recommendation from the same model, the resulting class could be large enough to justify litigation costs. The plaintiffs' bar has already begun investigating cases, and at least two law firms specializing in data breach litigation have published white papers analyzing the liability framework for AI-facilitated supply chain attacks.

This legal uncertainty creates strong incentives for model providers to implement technical safeguards—specifically, real-time package verification that confirms whether recommended packages actually exist before presenting them to users. The cost of implementation is non-trivial (it adds latency and requires maintaining live connections to multiple package registries), but the cost of not implementing it—measured in litigation exposure and reputational damage—may prove far greater.

Every npm install now carries a question that never needed to be asked before: did a real person recommend this package, or did a statistical model generate a plausible-sounding name that an attacker was waiting for someone to type?

The answer increasingly determines whether your build pipeline ends with a deployment or a breach.