Beyond the Prompt: The Rise of Stateful Agency and Longitudinal AI Goals

In 2026, the 'Prompt' is obsolete. Leading enterprises have moved to 'Stateful Agency,' where agents manage multi-week goals autonomously.

If you are still talking about "Prompt Engineering" in April 2026, you are living in the past. In the last six months, the fundamental paradigm of how humans interact with artificial intelligence has shifted. We have moved from Stateless Interactions (one-off questions and answers) to Stateful Agency (autonomous mission execution).

The "Prompt" is being replaced by the "Mission Brief." In this new era, you don't ask an AI to write a piece of code; you brief an agent swarm on a business objective—such as "Build and deploy a localized marketing site for our Paris launch"—and then you step back. The agents maintain state, manage their own memory, and work for days or weeks until the mission is complete. This is the transition from "Chat" to "Commitment."

The Death of the "Chat" Interface

The "Chatbot" was a bridge, not a destination. For more than three years, we forced ourselves into a conversational mimicry that was fundamentally inefficient for complex work. Chat is linear; work is hierarchical, multi-threaded, and recursive. The friction of having to "hand-hold" an AI through every small sub-step of a project led to "Prompt Fatigue": the point where the effort of managing the AI exceeded the effort of doing the work yourself.

Stateful Agency solves this by giving the AI a "Persistent Context Store." Unlike a stateless chat session, which carries no working state forward once the conversation ends, a Frontier Agent in 2026 lives in a persistent environment.
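
To make the idea concrete, here is a minimal sketch of a persistent context store, assuming a local SQLite file as the backing store. The `ContextStore` class, its table layout, and the mission identifiers are hypothetical and chosen only to illustrate state that survives between sessions; a production system would look quite different.

```python
import json
import sqlite3


class ContextStore:
    """Hypothetical key-value store for mission state, backed by SQLite."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS state ("
            "mission_id TEXT, key TEXT, value TEXT, "
            "PRIMARY KEY (mission_id, key))"
        )

    def save(self, mission_id: str, key: str, value: object) -> None:
        # Upsert: saving the same key again overwrites the previous value.
        self.conn.execute(
            "INSERT OR REPLACE INTO state VALUES (?, ?, ?)",
            (mission_id, key, json.dumps(value)),
        )
        self.conn.commit()

    def load(self, mission_id: str, key: str, default: object = None) -> object:
        row = self.conn.execute(
            "SELECT value FROM state WHERE mission_id = ? AND key = ?",
            (mission_id, key),
        ).fetchone()
        return json.loads(row[0]) if row else default
```

Pointing `path` at a file rather than `:memory:` is what lets a later process reopen the store and resume a mission where the previous one stopped.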

The Architecture of Agentic Memory

To function over weeks, these agents utilize a tiered memory system that mimics human cognition:

- Episodic Memory (The Short-Term Log): A high-fidelity record of every decision, tool call, and error experienced during the current mission. This allows the agent to "backtrack" if it hits a dead end without repeating its mistakes.

- Semantic Memory (The Long-Term Knowledge): A vector-indexed repository of the project’s specific constraints, documentation, and history. If an agent is working on a codebase it hasn't seen in six months, it "re-reads" the semantic memory to regain context.

- Procedural Memory (The Skill Registry): A catalog of "Skills"—automated scripts, API integrations, and logic patterns—that the agent has mastered. When a new challenge arises, the agent first queries its procedural memory for an existing "Skill" before attempting to invent a new solution.
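
The three tiers above could be sketched as a single data structure. This is an illustration under stated assumptions, not a description of any particular product: `AgentMemory` and its method names are hypothetical, and the `recall` method uses simple word overlap as a stand-in for the vector search the text describes.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class AgentMemory:
    """Hypothetical three-tier memory; fields mirror the tiers in the text."""

    episodic: list[dict] = field(default_factory=list)       # log of this mission
    semantic: dict[str, str] = field(default_factory=dict)   # doc_id -> text
    procedural: dict[str, Callable[..., object]] = field(default_factory=dict)

    def record(self, action: str, ok: bool, detail: str = "") -> None:
        self.episodic.append({"action": action, "ok": ok, "detail": detail})

    def already_failed(self, action: str) -> bool:
        # Lets the agent backtrack without retrying a step that failed before.
        return any(e["action"] == action and not e["ok"] for e in self.episodic)

    def recall(self, query: str) -> Optional[str]:
        # Toy stand-in for vector search: pick the doc with most shared words.
        words = set(query.lower().split())
        best_id, best_score = None, 0
        for doc_id, text in self.semantic.items():
            score = len(words & set(text.lower().split()))
            if score > best_score:
                best_id, best_score = doc_id, score
        return best_id

    def find_skill(self, name: str) -> Optional[Callable[..., object]]:
        # Checked first, before the agent tries to invent a new solution.
        return self.procedural.get(name)
```

An agent built on this would call `find_skill` before planning from scratch, and `already_failed` before retrying an action.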

From ReAct to Orchestrated Swarms

In 2024, "agentic behavior" was mostly achieved through the ReAct (Reason + Act) pattern. The model would think, call a tool, and then process the result. While revolutionary, ReAct was brittle. If a tool call failed or returned unexpected data, the agent would often "spiral"—repeating the same faulty thought process until it hit a token limit.
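
A rough sketch of the loop described above, with the model replaced by a plain callable so the example runs on its own. The `Policy` and `Tool` types, the `"finish"` convention, and the `max_repeats` guard are all assumptions made for illustration; the guard shows one simple way to cut a spiral short rather than waiting for a token limit.

```python
from typing import Callable

# Hypothetical types: a policy maps the history so far to (tool_name, argument),
# or to ("finish", answer) once it considers the task done.
Policy = Callable[[list[str]], tuple[str, str]]
Tool = Callable[[str], str]


def react_loop(policy: Policy, tools: dict[str, Tool],
               max_steps: int = 10, max_repeats: int = 2) -> str:
    history: list[str] = []
    failures: dict[tuple[str, str], int] = {}
    for _ in range(max_steps):
        tool_name, arg = policy(history)           # "Reason": choose the next action
        if tool_name == "finish":
            return arg
        try:
            observation = tools[tool_name](arg)    # "Act": call the chosen tool
        except Exception as exc:
            key = (tool_name, arg)
            failures[key] = failures.get(key, 0) + 1
            if failures[key] >= max_repeats:
                # Spiral guard: the same failing call was repeated, so stop.
                return f"aborted: {tool_name}({arg!r}) failed {failures[key]} times"
            observation = f"error: {exc}"          # feed the failure back to the policy
        history.append(f"{tool_name}({arg!r}) -> {observation}")
    return "aborted: step limit reached"
```

Without the guard, a policy that keeps choosing the same failing call would run until `max_steps`, which is the brittle behavior the paragraph describes.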

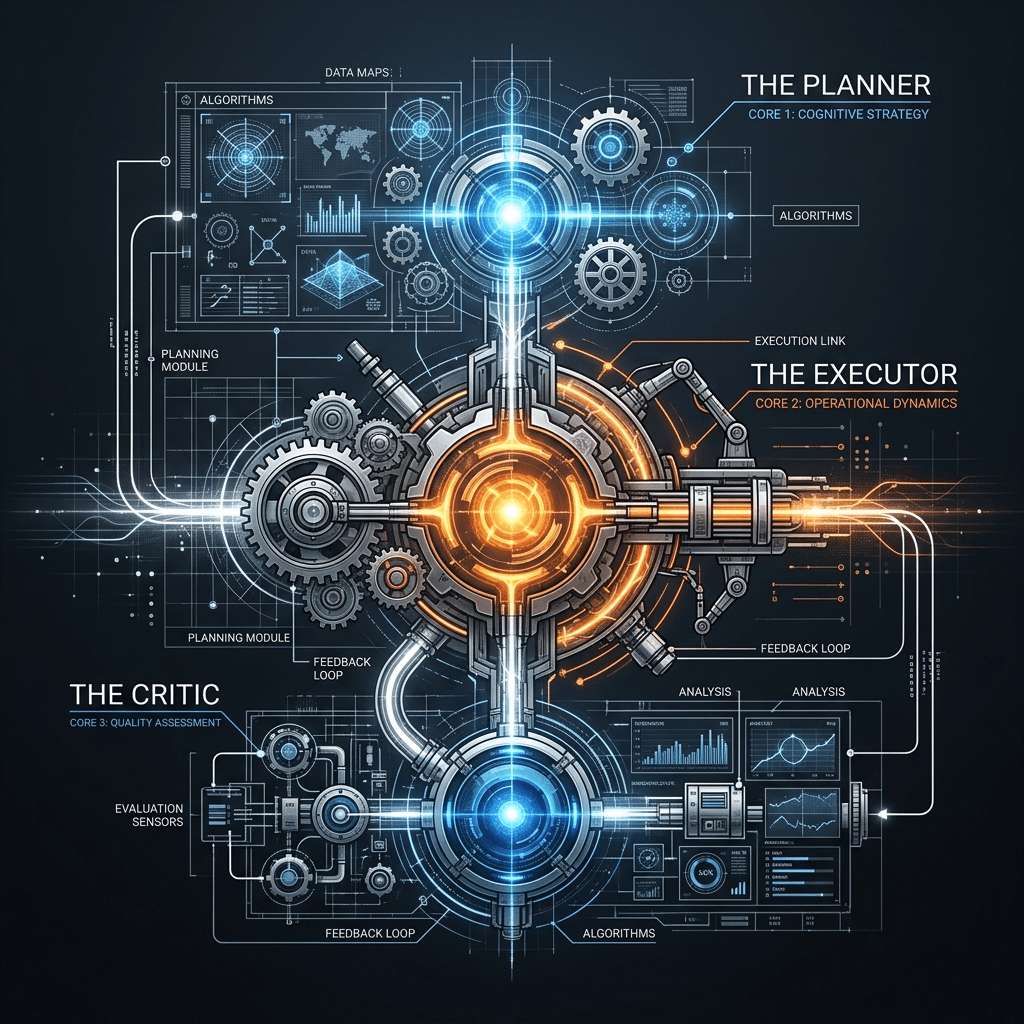

The 2026 standard is Orchestrated Swarm Dynamics. Instead of one "God Agent" trying to handle everything from database design to high-level strategy, tasks are handled by specialized sub-agents governed by a Capstone Strategy Agent.

The "Mission Brief" Lifecycle

When a human orchestrator submits a Mission Brief, the Capstone Agent performs a Recursive Decomposition:

- Step 1: Strategic Planning: The Capstone uses a high-reasoning model (like GPT-5.5 Pro) to break the mission into 5-10 distinct Work Streams (e.g., UI, Backend, Content, Security).

- Step 2: Resource Allocation: It allocates a "Token Budget" and a "Compute Budget" to each stream.

- Step 3: Orchestration: It manages the communication between sub-agents. If the UI Agent needs to know the API endpoint from the Backend Agent, the Capstone facilitates that "handshake" without human intervention.

- Step 4: Continuous Verification: A separate "Critic Agent" audits every completed sub-task against the original Mission Brief, ensuring the swarm doesn't "drift" from the objective over time.
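
The lifecycle above can be sketched in a few small pieces. Everything here is hypothetical: the `WorkStream` weights stand in for the output of the planning model in Step 1, `Blackboard` is one possible way to implement the Step 3 "handshake," and `critic_approves` is a toy keyword check where a real critic would presumably be another model call.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkStream:
    name: str
    weight: int            # relative effort estimate from the planner (assumed)
    token_budget: int = 0


def allocate_budgets(streams: list[WorkStream], total_tokens: int) -> None:
    """Step 2: split one token budget in proportion to stream weights."""
    total_weight = sum(s.weight for s in streams)
    for s in streams:
        s.token_budget = total_tokens * s.weight // total_weight


class Blackboard:
    """Step 3: shared facts the Capstone relays between sub-agents."""

    def __init__(self) -> None:
        self._facts: dict[str, str] = {}

    def publish(self, key: str, value: str) -> None:
        self._facts[key] = value

    def request(self, key: str) -> Optional[str]:
        return self._facts.get(key)


def critic_approves(brief_keywords: set[str], result: str) -> bool:
    """Step 4: toy drift check. The result must mention a brief keyword."""
    return bool(brief_keywords & set(result.lower().split()))


# Step 1 is assumed to have produced these streams already.
streams = [WorkStream("UI", 2), WorkStream("Backend", 3),
           WorkStream("Content", 1), WorkStream("Security", 2)]
allocate_budgets(streams, total_tokens=800_000)
```

In this sketch the Backend stream would call `publish("api_endpoint", ...)` and the UI stream would call `request("api_endpoint")`, so neither needs a human to pass the value along.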

Case Study: The Disruption of BPO (Business Process Outsourcing)

The most dramatic economic impact of Stateful Agency is the total restructuring of the BPO industry. In 2025, companies paid billions to outsourcing firms in India and the Philippines to handle Tier-2 customer support and back-office data entry.

In April 2026, these firms are being replaced by "Task-as-a-Service" (TaaS) providers. Instead of hiring 1,000 human agents, a bank can now deploy a "Support Swarm" of 10,000 stateful agents.

- The Difference: These agents don't just "talk" to customers. They have the stateful authorization to issue refunds, update records, and investigate fraudulent transactions across multiple days—handling the entire "ticket lifecycle" autonomously.

- The Result: Customer resolution times have dropped from 24 hours to 45 seconds, while the cost-per-resolution has fallen by 98%.

Technical Breakdown: Stateless vs. Stateful Architecture

| Feature | Stateless (2024-2025) | Stateful (2026) |
|---|---|---|
| Persistence | None (forgotten after chat) | Persistent Vector + Graph DB |
| Logic Loop | ReAct (Brittle) | Orchestrated Swarms (Resilient) |
| Context Management | Human-Led | Self-Managing (Tiered Memory) |
| Costing | Per Thousand Tokens | Per Mission Component |
| Reliability | 60-70% | 99%+ (via Recursive Criticism) |
| Primary Interaction | "Write a Python script for..." | "Maintain this service for 30 days..." |

The "State Dashboard": The New UI of Work

If we are no longer chatting with AI, how do we monitor it? The "Chat Box" has been replaced by the "State Dashboard." This is the visual language of the Agentic Orchestrator.

- The DAG (Directed Acyclic Graph) View: A live, three-dimensional chart showing every task the swarm is currently performing. You can click on any node to see the specific reasoning chain of the agent performing that task.

- The "Attention Radar": A heatmap showing which parts of the mission are consuming the most compute. If the "Security Audit" node is glowing red, the human knows the agents are spending disproportionate effort there, perhaps on a complex vulnerability.

- The "Human-in-the-Loop" Queue: When a sub-agent hits an ethical or authorization boundary, such as "Do I have permission to delete this old user database?", it pauses that specific workstream and places a request in this queue. The human doesn't do the work; they provide the Intent and Authorization.
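
The DAG view and the Human-in-the-Loop queue fit together naturally, and a small sketch may help. It uses Python's standard `graphlib.TopologicalSorter` to walk the task graph; the `run_mission` function, the task names, and the `needs_auth` and `approved` sets are hypothetical. A task that needs authorization is queued for a human, and only its own dependents stay blocked.

```python
from graphlib import TopologicalSorter


def run_mission(dag: dict[str, set[str]], needs_auth: set[str],
                approved: set[str]) -> tuple[list[str], list[str]]:
    """Return (completed tasks, tasks waiting in the human queue).

    `dag` maps each task to the set of tasks it depends on.
    """
    sorter = TopologicalSorter(dag)
    sorter.prepare()
    done: list[str] = []
    human_queue: list[str] = []
    while sorter.is_active():
        ready = sorter.get_ready()
        if not ready:
            break  # only paused tasks remain; wait for a human decision
        for task in sorted(ready):
            if task in needs_auth and task not in approved:
                human_queue.append(task)  # pause this workstream only
                continue                  # never marked done, so dependents wait
            done.append(task)             # stand-in for actually running the task
            sorter.done(task)
    return done, human_queue


dag = {
    "build": set(),
    "deploy": {"build"},
    "drop_old_db": {"build"},
    "announce": {"deploy"},
    "cleanup_report": {"drop_old_db"},
}
```

With nothing approved, `deploy` and `announce` still complete while `drop_old_db` waits in the queue and `cleanup_report` stays blocked behind it.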

The Societal Shift: The Death of Junior Roles?

The rise of the "Departmental Agent" has sparked a fierce debate about the future of entry-level work. If an agent swarm can handle the "grunt work" of a junior lawyer, developer, or analyst—and do it 100x faster with 10x better accuracy—what happens to the talent pipeline?

In 2026, the consensus is that "Junior" is no longer a job title; it is a learning phase for Orchestration. Young professionals are no longer hired to do the work; they are hired to manage the swarm. The skills required in 2026 are not "How to write a function," but "How to audit an agent's reasoning" and "How to define a mission brief that doesn't lead to unintended consequences."

Infrastructure: The "Always-On" Inference Era

The shift to Stateful Agency has required a massive change in cloud infrastructure. Traditional "Serverless" AI—where you pay for a single request—is being replaced by "Persistent Inference Instances."

Cloud providers now offer dedicated clusters of "Agentic Silicon" (like the Google TPU v7 or AWS Trainium 3) that keep the model’s weights "warm" and its context memory "live" for the duration of a mission. This eliminates the latency of reloading a 128k-token context on every turn and allows agents to respond to external events (like a server crash or a customer request) in real time.
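
A back-of-the-envelope sketch shows why keeping the context live matters. The function below is a deliberate simplification: it assumes a fixed context size, a fixed number of new tokens per turn, and that a stateless setup reprocesses the whole context on every turn. The function name and the example figures are illustrative, not measurements.

```python
def tokens_processed(context_tokens: int, new_tokens_per_turn: int,
                     turns: int, persistent: bool) -> int:
    """Count tokens the model must process over a mission (simplified model)."""
    if persistent:
        # Context is loaded once; each turn only processes its new tokens.
        return context_tokens + new_tokens_per_turn * turns
    # Stateless: the full context is reloaded and reprocessed on every turn.
    return (context_tokens + new_tokens_per_turn) * turns


stateless = tokens_processed(128_000, 500, turns=100, persistent=False)
stateful = tokens_processed(128_000, 500, turns=100, persistent=True)
```

Under these assumptions, 100 turns cost 12,850,000 processed tokens in the stateless case and 178,000 in the persistent case, which is the gap that persistent instances are meant to close.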

Mermaid: The Orchestrated Swarm Logic

```mermaid
graph TD
    A[Human Mission Brief] --> B[Capstone Strategy Agent]
    B --> C[Persistence Engine: State + Memory]
    C --> D{Worker Swarm}
    D --> E[Sub-Agent: Research]
    D --> F[Sub-Agent: Execution]
    D --> G[Sub-Agent: QA/Test]
    E --> H[Tool: Web/API]
    F --> I[Tool: Code/Write]
    G --> J[Tool: Sandbox/Run]
    H --> C
    I --> C
    J --> C
    C -->|Progress Update| K[State Dashboard]
    C -->|Goal Achieved| B
    B -->|Final Result| L[Human Orchestrator]
    L -->|Clarification/Auth| B
```

Conclusion: The Horizon of Goal-Seeking AI

Stateful Agency is the final piece of the puzzle. We have spent decades building "Computers that calculate" and "AI that generates." We are finally building "Machines that achieve."

A goal-seeking machine is a fundamentally different entity from a text-generating one. It requires trust, it requires ethical guardrails, and it requires a new type of human leadership. As we move into the second half of 2026, the companies that thrive will be those that stop "prompting" their AI and start "briefing" their agents.

The mission is live. The swarm is ready. The era of the prompt is over.