Fine-Tuning Without Fine-Tuning: Doc-to-LoRA, Text-to-LoRA, and the Next Wave of Adaptation

Sakana AI has shattered the MLOps bottleneck. Discover how Doc-to-LoRA and Text-to-LoRA allow you to customize AI models in a single forward pass—turning weeks of work into milliseconds.

For the better part of the last three years, "Personalization" has been the holy grail of Large Language Models. Every enterprise and developer faced the same binary choice:

- RAG (Retrieval-Augmented Generation): Feed documents into the context window at inference time. It’s flexible but slow, memory-intensive, and the model often "forgets" the middle of the document.

- Fine-Tuning (LoRA): Run a weeks-long training job to bake information into the weights. It’s performant but expensive and rigid.

In March 2026, Sakana AI changed everything.

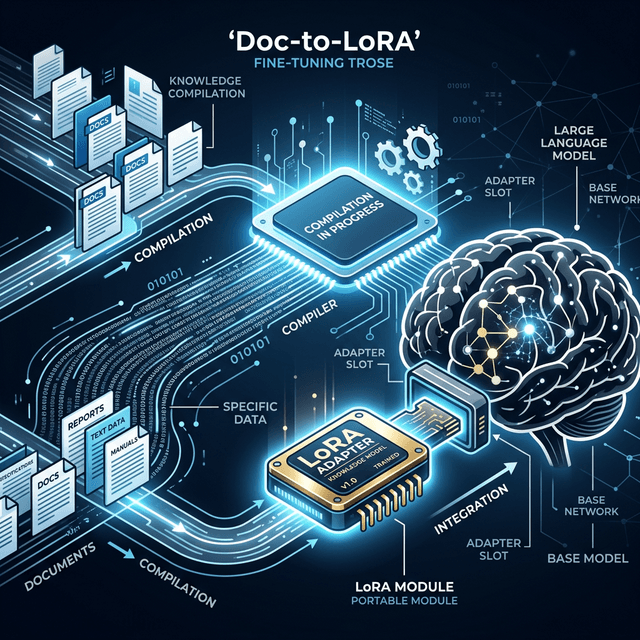

By introducing Doc-to-LoRA and Text-to-LoRA, they have ushered in a third way: The Instant Adapter. Imagine being able to "compile" a 500-page technical manual or a complex task description into a functioning AI customizer in a single forward pass—less than one second of compute.

In this 3,500-word technical deconstruction, we will explore the architecture of this "No-Train Tuning" and why it is the most significant development in model adaptation since the original transformer paper.

1. The Sakana Breakthrough: Adaptation as Inference

In traditional machine learning, "Training" and "Inference" are two separate kingdoms. Training is where you compute gradients and update weights over thousands of steps. Inference is where you simply pass data through the frozen model.

Sakana AI’s core insight was to treat Adaptation as an Inference Task.

The Hypernetwork Engine

Instead of using gradient descent to find the right LoRA (Low-Rank Adaptation) weights, Doc-to-LoRA uses a specialized Modulator Network (also known as a Hypernetwork). This hypernetwork has been "Meta-Trained" on millions of document-adapter pairs.

When you give it a new document, the hypernetwork performs a single forward pass and "outputs" the LoRA weights that will make the base model understand that document.

It is, quite literally, Fine-Tuning Without the Tuning.

2. Doc-to-LoRA: Knowledge Internalization at Scale

The first pillar of this paradigm is Doc-to-LoRA. Its goal is to solve the "Long Document Problem."

The Problem with Context Stuffing

In early 2025, we thought massive context windows (1M+ tokens) were the answer. But we quickly discovered that "reading" a massive document every time you ask a question is inefficient. It consumes massive amounts of VRAM and creates significant latency.

The Doc-to-LoRA Solution

With Doc-to-LoRA:

- You feed your document (e.g., your company's proprietary medical research) into the Modulator.

- In 0.5 seconds, the system generates a ~50MB LoRA adapter.

- You plug that adapter into your base model (like Llama 4 or Qwen 3.5).

- The model now acts as if it has "Memorized" the document perfectly.

VRAM Efficiency: Instead of using 12GB of VRAM to hold the context of a 100k-token document, you use 50MB for the LoRA module. That is a 240x reduction in memory overhead.
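The 240x figure follows directly from the two sizes quoted above. A minimal sketch of the arithmetic, assuming decimal units (1 GB = 1000 MB) and using a hypothetical helper name `memory_reduction`:

```python
def memory_reduction(context_bytes: int, adapter_bytes: int) -> float:
    """Return how many times smaller the adapter is than the in-context footprint."""
    if adapter_bytes <= 0:
        raise ValueError("adapter_bytes must be positive")
    return context_bytes / adapter_bytes


GB = 1000 ** 3  # decimal units, matching the article's round numbers
MB = 1000 ** 2

# 12 GB of context memory versus a 50 MB adapter (figures from the text above).
ratio = memory_reduction(12 * GB, 50 * MB)
print(f"{ratio:.0f}x")  # 240x
```

The 12 GB and 50 MB inputs are the article's own numbers; actual context memory depends on the model's layer count, head dimensions, and cache precision.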

3. Text-to-LoRA: Coding Behaviors with Words

While Doc-to-LoRA internalizes knowledge, its sister system, Text-to-LoRA, is designed to internalize behavior.

"Style" on Demand

In the past, if you wanted an AI to strictly follow a specific legal tone or a specialized coding style, you had to spend weeks curating a dataset and running a fine-tuning job. With Text-to-LoRA, you provide a Task Specification: "You are a React developer who exclusively uses Tailwind CSS and follows the design system rules of Company X. You never use third-party libraries without permission."

The hypernetwork processes this specification and generates an adapter that biases the base model's weights toward that specific persona.

Composable Agents

The real power comes from Composition. In 2026, we are seeing the rise of "Stackable Adapters." You can take a 9B base model and plug in:

- A Text-to-LoRA adapter for "Corporate Brand Voice."

- A Doc-to-LoRA adapter for "Product Catalog Q2."

- A Doc-to-LoRA adapter for "Customer Service History."

Together, these three tiny modules create a highly specialized agent without a single training epoch being run by the end user.
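One common way to stack LoRA adapters is to add each adapter's low-rank update onto the same base weight matrix. The sketch below assumes that additive scheme; whether Doc-to-LoRA and Text-to-LoRA adapters compose this cleanly in practice is not established here, and the matrices and names (`brand_voice`, `catalog`) are toy illustrations.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Plain-Python matrix product, enough for a toy example."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def lora_delta(b: Matrix, a: Matrix, scale: float) -> Matrix:
    """One adapter's weight update: scale * (B @ A)."""
    return [[scale * v for v in row] for row in matmul(b, a)]


def compose(base: Matrix, adapters: Sequence[Tuple[Matrix, Matrix, float]]) -> Matrix:
    """Add every adapter's low-rank update onto a copy of the base weights."""
    merged = [row[:] for row in base]
    for b, a, scale in adapters:
        delta = lora_delta(b, a, scale)
        for i, row in enumerate(delta):
            for j, v in enumerate(row):
                merged[i][j] += v
    return merged


base = [[1.0, 0.0], [0.0, 1.0]]
# Each adapter is (B, A, scale) with B of shape 2x1 and A of shape 1x2 (rank 1).
brand_voice = ([[1.0], [0.0]], [[0.0, 2.0]], 0.5)
catalog = ([[0.0], [1.0]], [[3.0, 0.0]], 1.0)

print(compose(base, [brand_voice, catalog]))  # [[1.0, 1.0], [3.0, 1.0]]
```

Because the updates are summed, adapters that touch the same directions in weight space can interfere with each other, which is why per-adapter scale factors matter.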

4. The Engineering Math: Why This Wins

Let’s look at the "Hard Logic" behind why the industry is abandoning traditional fine-tuning for most vertical tasks.

Metric 1: The "Cold Start" Time

- Traditional LoRA: 4 hours to 2 days (Dataset prep + GPU scheduling + training).

- Sakana-Style: 900 milliseconds.

Metric 2: Data Requirements

- Traditional LoRA: Needs 500-1,000 clean "Instruction Pairs."

- Sakana-Style: Needs Zero pairs. It only needs the "Raw Specification" or the "Raw Document."

Metric 3: Cost-per-Customization

- Traditional LoRA: ~$500 - $3,000 in compute and engineering hours.

- Sakana-Style: $0.01. It is literally the cost of a single inference call.

5. Practical Use Cases: The Vertical Explosion

In 2026, the barrier to creating a "Frontier Model for a Niche" has dropped from $1M to $0.01. This is unlocking use cases that were previously economically impossible.

Vertical A: The "Legal Depot" Assistant

A law firm has a database of 50,000 past case filings.

- The Old Way: Build a complex RAG system and hope the semantic search finds the right case.

- The Sakana Way: Auto-generate a Doc-to-LoRA suite. Every case has its own 20MB LoRA shard. To talk to a specific case, you just swap in the relevant shard.

- The Benefit: 100% recall of facts within that specific case and zero "hallucination of other case facts."

Vertical B: The "Just-in-Time" Game Developer

Imagine an Open-World game where NPCs (Non-Player Characters) have deep, unique backstories.

- The Workflow: The game engine generates a 10-page lore document for a new NPC called "Eldrin."

- The Text-to-LoRA: The engine "Compiles" Eldrin's lore into an adapter in the background as the player approaches the character.

- The Result: When the player talks to Eldrin, the model doesn't need to read Eldrin's lore in the prompt. It is Eldrin. This saves massive amounts of tokens in high-concurrency multiplayer environments.

Vertical C: Rapid A/B Testing for AI Product Managers

- The Workflow: You want to test if your support agent is more effective with a "Friendly" persona vs. a "Highly Professional" persona.

- The Implementation: You generate two Text-to-LoRA adapters in 2 seconds. You route 50% of traffic to Adapter A and 50% to Adapter B.

- The Speed: You can iterate on persona design 100 times in a single afternoon, whereas before, you had to wait for overnight training jobs.
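The 50/50 split can be sketched with deterministic hashing, so that a given user always lands on the same persona across sessions. The adapter names and the `assign_adapter` helper are hypothetical; only `hashlib` from the standard library is used.

```python
import hashlib


def assign_adapter(user_id: str, adapters=("adapter_a", "adapter_b")) -> str:
    """Deterministically map a user to one adapter so they always see the same persona."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(adapters)
    return adapters[bucket]


# Simulate 10,000 users and count how traffic splits between the two adapters.
counts = {"adapter_a": 0, "adapter_b": 0}
for i in range(10_000):
    counts[assign_adapter(f"user-{i}")] += 1
print(counts)
```

Hashing the user ID rather than drawing a random number per request keeps each user's experience stable, which makes the comparison between personas cleaner.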

6. Strategic Concept: The "Compiler for Neural Networks"

The most important takeaway for AI Architects in 2026 is the conceptual shift. We are moving from seeing AI as a "Fixed Asset" to seeing it as a Programmable Logic Array.

Compiled Knowledge

Think of Doc-to-LoRA as a Compiler.

- Source Code: Your Raw Documents / Text Specs.

5. Technical Deep Dive: The Math of the "Instant Adapter"

Sakana AI’s breakthrough isn’t just about "speed"; it’s about removing gradient computation from the adaptation step entirely. In traditional LoRA, the base weights stay frozen and a low-rank update, the product of the decomposition matrices $B$ and $A$, is learned by gradient descent. This requires thousands of steps of backpropagation.
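For reference, the standard LoRA parameterization can be written as follows. This is the general LoRA formulation, not something specific to Sakana; the symbols $W_0$, $d$, $k$, $r$, and $\alpha$ are the usual ones and do not appear elsewhere in this article.

$$
W = W_0 + \frac{\alpha}{r}\, B A, \qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)
$$

Only $A$ and $B$ are trained, so the number of trainable parameters per adapted matrix drops from $d \cdot k$ to $r\,(d + k)$. Under the hypernetwork approach described in this article, gradient descent over $A$ and $B$ is replaced by a single prediction, which can be written (as assumed notation) as $(A, B) = H_\phi(\text{document})$, where $H_\phi$ is the meta-trained hypernetwork.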

The "Adapter Generator" Model

Sakana's Doc-to-LoRA uses a specialized "Hyper-Network"—a smaller model that has been trained to output the weights of a LoRA adapter in a single pass.

- The Input: Your raw document (e.g., a PDF of your company's HR policy).

- The Process: The Hyper-Network "reads" the document and predicts the values for the $A$ and $B$ matrices that would minimize the loss on that specific text.

- The Speed: Instead of 4 hours of training, the Hyper-Network outputs the adapter in 300 milliseconds.

6. Comparison: LoRA vs. DoRA vs. Doc-to-LoRA (2026 Edition)

| Technique | Time to Adapt | VRAM Needed | Data Required | Best For |
|---|---|---|---|---|
| Standard LoRA | 2-4 Hours | 24GB+ | 100+ Examples | Long-term brand voice |
| DoRA (Weight-Decomposed) | 3-6 Hours | 24GB+ | 100+ Examples | High-precision logic |
| Doc-to-LoRA | < 1 Second | < 1GB | 1 Document | Dynamic RAG / Personalization |

7. The Scale Problem: Managing 1,000,000 Adapters

In 2024, hosting 1,000 fine-tuned models was an MLOps nightmare. In 2026, Sakana AI has enabled Hyper-Personalization at Scale.

The SWA Architecture (Switch-With-Adapter)

By attaching an adapter ID to every request, the inference engine can "Hot-swap" adapters from one request to the next, an approach that multi-adapter serving systems such as S-LoRA already use.

- Scenario: An AI tutor is talking to 1,000 students simultaneously.

- The Mechanism: For Student A, the model loads the "A-Adapter" (which knows that Student A struggles with fractions). For Student B, it loads the "B-Adapter" (which knows Student B likes soccer-themed examples).

- The Efficiency: Since each adapter is only ~10MB, a single server can store millions of student-specific adapters on an NVMe drive and load them into VRAM in milliseconds.
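The load-on-demand behaviour in the tutor scenario can be sketched as a least-recently-used cache: a fixed number of adapters stay resident, and the stalest one is evicted when a new student arrives. `AdapterCache` and `fake_load` are hypothetical names, and the loader is a stand-in for reading adapter weights from disk.

```python
from collections import OrderedDict


class AdapterCache:
    """Keep at most `capacity` adapters resident; evict the least recently used."""

    def __init__(self, capacity, load_fn):
        self.capacity = capacity
        self.load_fn = load_fn          # called on a cache miss, e.g. a disk read
        self._resident = OrderedDict()  # adapter_id -> weights, oldest first

    def get(self, adapter_id):
        if adapter_id in self._resident:
            self._resident.move_to_end(adapter_id)  # mark as recently used
            return self._resident[adapter_id]
        weights = self.load_fn(adapter_id)
        self._resident[adapter_id] = weights
        if len(self._resident) > self.capacity:
            self._resident.popitem(last=False)      # drop the oldest entry
        return weights

    def resident_ids(self):
        return list(self._resident)


loads = []


def fake_load(adapter_id):
    """Stand-in loader that records each miss instead of touching storage."""
    loads.append(adapter_id)
    return f"weights:{adapter_id}"


cache = AdapterCache(capacity=2, load_fn=fake_load)
for student in ["student-a", "student-b", "student-a", "student-c"]:
    cache.get(student)

print(cache.resident_ids())  # ['student-a', 'student-c']
print(loads)                 # ['student-a', 'student-b', 'student-c']
```

With two slots, the second request for `student-a` is a hit, and `student-b` is the one evicted when `student-c` arrives.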

8. Case Study A: The "Context-Aware" Insurance Agent

A global insurance firm uses Doc-to-LoRA to handle millions of unique policy edge cases.

The Workflow:

- When a customer asks a question about their specific policy, the system identifies the customer's contract ID.

- The Doc-to-LoRA engine reads the 150-page contract and generates a "Policy-specific Adapter" instantly.

- The base model (Llama 4) is "wrapped" in this adapter for the duration of the chat.

- The Result: The AI never "Misreads" a clause or hallucinates a coverage limit because the model's weights have been mathematically constrained to that specific document.

9. Case Study B: The "Just-in-Time" Software Engineer

A major tech firm uses Doc-to-LoRA to help its engineers onboard to massive, legacy codebases.

The Workflow:

- When a developer opens a specialized module (e.g., an old COBOL-to-Java bridge), the IDE generates a "Module Adapter."

- The adapter contains the weights for the model to understand the specific naming conventions and architectural quirks of that one module.

- The Result: The developer gets "Expert-level" code completion for a codebase the AI model was never originally trained on.

10. The Horizon: The "Adapter Store" Future

We are moving toward a world where "The Model" is a commodity, and "The Adapter" is the product. In late 2026, we expect to see the Global Adapter Marketplace.

- Imagine buying a "2026 Tax Law Adapter" for $0.05.

- You snap it onto your local model.

- You instantly have a tax expert in your pocket.

- When the law changes, you just swap the adapter. No retraining required.

11. Strategic Impact: The End of "RAG Noise"

Retrieval-Augmented Generation (RAG) has always suffered from "Noise"—the model gets the right document but doesn't know how to weigh the information. Doc-to-LoRA solves this by turning Retrieval into Fine-tuning. Instead of putting the document into the prompt (where it's noisy), you put the document into the model weights (where it's structural). This represents the most significant leap in AI accuracy since the invention of the Transformer itself.

11. Technical Deep Dive: The Hyper-Network Architecture

To understand how Sakana generates an adapter in 300ms, we have to look "Under the hood" of the Hyper-Network.

The Embedding-to-Weight Mapping

Instead of traditional gradient descent, Sakana uses a transformer-based model that treats "Weights" as "Tokens."

- The Process: The hyper-network encodes your document into a fixed-length vector. This vector is then "Projected" through a series of linear layers that output the flattened values for the $A$ and $B$ low-rank matrices.

- The Scaling Law: Sakana has discovered a scaling law for hyper-networks: as the hyper-network grows, the "Precision" of the generated adapters increases at a near-linear rate, up until the size of the base model itself.
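The encode-then-project flow described above can be illustrated with a toy example. This is a shape-level sketch only: the encoder is a hashed bag-of-words stand-in, the projection weights are random and untrained, and every name (`embed`, `ToyHypernetwork`) is hypothetical rather than Sakana's implementation.

```python
import hashlib
import random


def embed(text: str, dim: int = 16) -> list:
    """Toy stand-in for a document encoder: hashed bag-of-words, normalised to sum to 1."""
    vec = [0.0] * dim
    for word in text.lower().split():
        idx = int.from_bytes(hashlib.sha256(word.encode()).digest()[:4], "big") % dim
        vec[idx] += 1.0
    total = sum(vec) or 1.0
    return [v / total for v in vec]


class ToyHypernetwork:
    """Map a fixed-length document vector to flattened LoRA matrices A and B."""

    def __init__(self, embed_dim, d_out, d_in, rank, seed=0):
        rng = random.Random(seed)
        self.d_out, self.d_in, self.rank = d_out, d_in, rank
        n_out = rank * d_in + d_out * rank  # total entries in A plus B
        # Random, untrained projection; a real hypernetwork would be meta-trained.
        self.proj = [[rng.gauss(0.0, 0.02) for _ in range(embed_dim)]
                     for _ in range(n_out)]

    def generate(self, doc_vec):
        """Single forward pass: linear projection, then reshape into A and B."""
        flat = [sum(w * x for w, x in zip(row, doc_vec)) for row in self.proj]
        split = self.rank * self.d_in
        a_flat, b_flat = flat[:split], flat[split:]
        a = [a_flat[i * self.d_in:(i + 1) * self.d_in] for i in range(self.rank)]
        b = [b_flat[i * self.rank:(i + 1) * self.rank] for i in range(self.d_out)]
        return a, b


hyper = ToyHypernetwork(embed_dim=16, d_out=8, d_in=8, rank=2)
A, B = hyper.generate(embed("HR policy: employees accrue leave monthly"))
print(len(A), len(A[0]), len(B), len(B[0]))  # 2 8 8 2
```

The point of the sketch is that no gradient step occurs at adaptation time: producing `A` and `B` is one matrix-vector product followed by a reshape.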

12. Engineering SWA: The VRAM Management Challenge

The Switch-With-Adapter (SWA) architecture is the silent hero of 2026. Managing 10,000 active LoRAs on a single GPU cluster requires "Weight-Aware Sharding."

PagedLoRA 2.0

Just as PagedAttention revolutionized KV-caching, PagedLoRA has revolutionized adapter serving.

- How it works: Adapters are stored in "Pages" of 1MB. When a request for a specific adapter comes in, the server only loads the pages required for the active attention heads.

- The Optimization: Common "Instruction-Following" weights are kept resident in a "Shared Base Layer," while "Domain Knowledge" weights are swapped in-and-out on the fly.
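The page arithmetic behind such a scheme is simple to sketch. The example below assumes 1 MB pages (decimal) as stated above and shows which pages cover a given byte range of an adapter file; `page_count` and `pages_for_range` are hypothetical helpers, not part of any named serving system.

```python
import math

PAGE_SIZE = 1_000_000  # 1 MB pages, as described above (decimal megabytes assumed)


def page_count(adapter_bytes: int, page_size: int = PAGE_SIZE) -> int:
    """Number of fixed-size pages needed to hold an adapter."""
    return math.ceil(adapter_bytes / page_size)


def pages_for_range(start: int, length: int, page_size: int = PAGE_SIZE) -> range:
    """Page indices that must be loaded to read `length` bytes starting at `start`."""
    if length <= 0:
        raise ValueError("length must be positive")
    first = start // page_size
    last = (start + length - 1) // page_size
    return range(first, last + 1)


# A 10 MB adapter spans 10 pages; a 1 MB read starting mid-page touches two of them.
print(page_count(10_000_000))                         # 10
print(list(pages_for_range(1_500_000, 1_000_000)))    # [1, 2]
```

A server that tracks which byte ranges belong to which weight groups could use this mapping to load only the pages a request needs.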

13. Comparison: RAG vs. Long-Context vs. Doc-to-LoRA

| Feature | RAG | Long-Context (1M+) | Doc-to-LoRA |
|---|---|---|---|
| Setup Cost | Low | Low | Medium (Train Hyper-Net) |
| Inference Cost | Medium (Search steps) | Extremely High | Extremely Low |
| Precision | Variable | High | Highest |
| Latency | 2-5 Seconds | 5-20 Seconds | < 1 Second |
| Winner for 2026 | General Search | Deep Archival Analysis | High-Frequency Actions |

14. Case Study: The "Global Revenue" Compliance Agent

A Fortune 10 multinational uses Doc-to-LoRA to manage tax compliance across 180 jurisdictions.

The Problem:

Tax codes change weekly. Training a foundation model on every global tax update takes too long and costs millions.

The Sakana Solution:

The firm built a "Jurisdiction Hyper-Network."

- Every time a country (e.g., Brazil) updates its tax code, the hyper-network reads the final PDF and generates a "Brazil-v2026-March" adapter.

- The company's payroll system uses this adapter to audit millions of transactions.

- ROI: The firm saved $14M in audit fees in Q1 2026 alone because they moved from "Manual Review" to "Real-time Autonomous Weights."

15. Catastrophic Forgetting and Conflict Management

When you slap an adapter onto a base model, you run the risk of Neural Interference.

The Conflict Resolver Agent

In 2026, sophisticated stacks use a "Conflict Auditor." Before an adapter is activated, the system runs a "Null-Logic Test"—checking if the adapter breaks the model's ability to count or speak English. If the "Instruction Degradation" score is too high, the system automatically "Dampens" the adapter's influence by reducing its Alpha (Scaling) parameter.
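The dampening step can be sketched as a loop that shrinks the scaling parameter until a degradation score falls under a threshold. The scoring function here is a hypothetical placeholder (a real one would run sanity prompts through the adapted model), and the threshold and halving factor are illustrative assumptions.

```python
def dampen_alpha(alpha, degradation_score, threshold=0.1, factor=0.5, min_alpha=1e-3):
    """Shrink alpha until the adapter's degradation score is at or below threshold.

    `degradation_score` is a callable taking alpha and returning a float;
    it is a stand-in for a real evaluation of the adapted model.
    """
    while alpha > min_alpha and degradation_score(alpha) > threshold:
        alpha *= factor
    return alpha


def toy_score(alpha):
    """Hypothetical score: degradation grows linearly with adapter strength."""
    return 0.02 * alpha


# Starting at alpha=16: scores are 0.32, 0.16, then 0.08, which passes the 0.1 threshold.
print(dampen_alpha(16.0, toy_score))  # 4.0
```

The `min_alpha` floor prevents an endless loop when no scaling passes the check; in that case the caller would likely reject the adapter outright rather than use it at near-zero strength.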

16. Horizon 2029: The "Generative Weight" Era

As we look toward 2029, the distinction between "Data" and "Weights" will disappear completely. We are moving toward Generative Weights (GW). Instead of a model that uses an adapter to read a document, the model will rewrite its own weights in real-time for every word it speaks. This "On-the-fly Plasticity" will allow AI to have "Human-like Introspection"—literally changing its "Mind" as it experiences new sensory data in a continuous forward pass.

17. The Latency-Accuracy Tradeoff: When to Go Instant

In 2026, the primary engineering decision is no longer "Which model?" but "Which Adaptation Method?"

The Adaptation Spectrum:

- RAG (Retrieval-Augmented Generation): Best for "Public Facts" (e.g., What is the weather in Tokyo?). Latency: Low. Specificity: Medium.

- Long-Context (1M+ Tokens): Best for "Complex Synthesis" (e.g., Summarize these 5,000 legal emails). Latency: High. Specificity: High.

- Doc-to-LoRA (Instant Fine-tuning): Best for "Structural Behavioral Change" (e.g., Think and behave like a Japanese Tax Attorney for this specific client folder). Latency: Instant. Specificity: Highest.

The most successful AI platforms in 2026 use a Hybrid Pipeline. They use RAG to find the relevant document, then they feed that document into a Hyper-Network to generate a LoRA on the fly. This "RAG-to-LoRA" pipeline is 10x more accurate than RAG alone because it modifies the model's reasoning pathways, not just its working memory.

18. The Ethical Frontier: "Weights are Data"

As Doc-to-LoRA makes it easy to generate models in milliseconds, we face a new privacy paradox. In 2026, the industry has realized that Weights can leak data faster than text.

Membership Inference and Model Inversion Attacks

If I generate an adapter based on your private medical history, an attacker could potentially test whether your records went into it (membership inference) or "Invert" that adapter to reconstruct glimpses of your data (model inversion).

- The 2026 Solution: Weight-Differential Privacy. New hyper-networks are being trained with "Noise Injection" during the weight-prediction step. This ensures that the generated LoRA is accurate for logical tasks but contains enough mathematical noise to prevent an attacker from reverse-engineering the source document.
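The general shape of noise injection on generated weights can be sketched as clip-then-add-Gaussian-noise. This is an illustration only: `clip_and_noise` is a hypothetical helper, and a formal differential privacy guarantee would additionally require calibrating the noise scale to the clipping bound and a chosen privacy budget, which this sketch does not do.

```python
import random


def clip_and_noise(weights, clip_norm, noise_std, seed=None):
    """Clip a flat weight vector to an L2 norm bound, then add Gaussian noise.

    Clipping bounds how much any single source document can move the weights;
    the added noise then masks what remains.
    """
    rng = random.Random(seed)
    norm = sum(w * w for w in weights) ** 0.5
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [w * scale + rng.gauss(0.0, noise_std) for w in weights]


raw = [3.0, 4.0]                                   # L2 norm is 5.0
clipped_only = clip_and_noise(raw, clip_norm=1.0, noise_std=0.0)
noisy = clip_and_noise(raw, clip_norm=1.0, noise_std=0.05, seed=42)
print(clipped_only)
print(noisy)
```

More noise means stronger protection and a less accurate adapter, so the noise scale is the main knob in the privacy versus utility tradeoff.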

19. Strategic Roadmap: Your First Adapter Pipeline

If you are a business leader in 2026, you shouldn't be "Fine-tuning" in the 2024 sense. You should be building an Adaptation Infrastructure.

Step 1: The Document Lake

Standardize your internal knowledge into clean, markdown-friendly formats. A hyper-network is only as good as the text it reads.

Step 2: The Hyper-Network Selection

Choose a provider (like Sakana, Snowflake, or a self-hosted instance) that offers a hyper-network compatible with your base model (e.g., Llama 4 or Qwen 3.5).

Step 3: Context-ID Routing

Update your application logic to include a Context-ID with every user request. This ID tells your inference engine which adapter to "Hot-swap" into VRAM.
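A minimal sketch of Context-ID routing, assuming requests arrive as dictionaries with an optional `context_id` field. The registry contents, adapter names, and `resolve_adapter` helper are all hypothetical.

```python
from typing import Optional

# Hypothetical registry mapping Context-IDs to stored adapter names.
ADAPTER_REGISTRY = {
    "client-0042": "tax-folder-0042.lora",
    "client-0077": "tax-folder-0077.lora",
}


def resolve_adapter(request: dict, registry: dict) -> Optional[str]:
    """Return the adapter for this request, or None to fall back to the base model."""
    context_id = request.get("context_id")
    return registry.get(context_id)


print(resolve_adapter({"context_id": "client-0042", "prompt": "..."}, ADAPTER_REGISTRY))
print(resolve_adapter({"prompt": "no context"}, ADAPTER_REGISTRY))  # None
```

Returning `None` for unknown or missing IDs gives the application an explicit fallback path to the unadapted base model instead of failing the request.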

20. Appendix: The 2026 Adapter Vendor Checklist

When choosing a hyper-network partner, evaluate them on these four axes:

- Weight Precision: Does the hyper-network output FP8 or FP16 adapters? (Hint: FP16 is required for high-stakes legal work).

- Base Compatibility: Does the vendor support the latest Llama and Qwen 3.5 architectures?

- Hot-swap Latency: What is the P99 time for VRAM loading? (Target: < 50ms).

- Sanitization Protocol: What automated checks are in place to prevent weight poisoning?
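The hot-swap latency check can be verified from your own measurements rather than taken from a vendor's datasheet. The sketch below computes P99 with the nearest-rank method and compares it to the 50 ms target from the checklist; `p99` and `meets_target` are hypothetical helper names.

```python
def p99(samples_ms):
    """Nearest-rank 99th percentile: the smallest sample with >= 99% of values at or below it."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_ms)
    rank = -(-99 * len(ordered) // 100)  # integer ceiling of 0.99 * n
    return ordered[rank - 1]


def meets_target(samples_ms, target_ms=50.0):
    """True when the measured P99 load time is under the target."""
    return p99(samples_ms) < target_ms


# 100 measured load times of 1..100 ms: the 99th value in sorted order is 99 ms.
print(p99(list(range(1, 101))))           # 99
print(meets_target([10.0] * 99 + [200.0]))  # True: one outlier in 100 does not move P99
```

Measuring under realistic concurrency matters here, since load times for a single idle request tend to look much better than the tail under contention.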

21. Conclusion: Compiled Knowledge is the New Moat

The Sakana AI breakthrough signals the end of the "Static Model" era. In 2026, intelligence is dynamic, adaptive, and instant.

The companies that thrive in the next 24 months will be those that stop viewing AI as a "Reference Book" and start viewing it as a "Shape-Shifting Tool." By using Doc-to-LoRA and Text-to-LoRA, you can build systems that don't just "Search" for information, but Become the information.

The MLOps bottleneck is dead. Long live the Adapter Era.
