Mission Creep: Hacked Data Reveals DHS Funding for Advanced AI Surveillance and Predictive Policing

Leaked data from a DHS technology incubator exposes multi-million dollar investments in automated airport surveillance and AI systems that turn national 911 data into predictive policing heat maps.

A massive data breach at a key technology incubator for the U.S. Department of Homeland Security (DHS) has pulled back the curtain on the future of American domestic surveillance. The leaked files, published by an anonymous hacking collective this March, reveal a multi-million dollar shift toward AI-powered tracking that extends from border security into the fabric of daily life in American cities.

The revelation has sparked a fierce debate about "Mission Creep," where tools built for national defense are repurposed for civil law enforcement without the oversight of a comprehensive biometric privacy law.

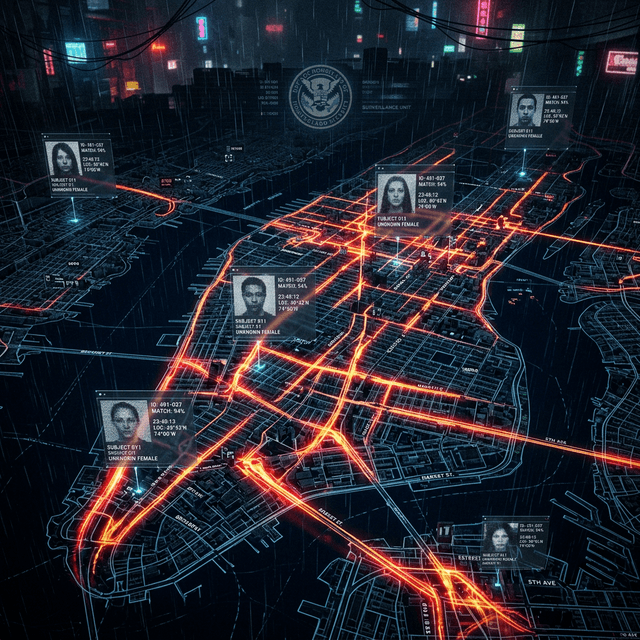

The "Geospatial Heat Map" of Human Intent

One of the most controversial projects revealed in the leak is a platform designed to ingest 911 call data from all 50 states in real time. The system does more than categorize calls: it uses Large Action Models (LAMs) to identify emerging patterns and overlay them onto high-definition "geospatial heat maps."

These maps don't just show where crime is happening; they use predictive policing algorithms to forecast where it might happen based on historical data, local social media sentiment, and even atmospheric conditions.
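As a rough illustration of the binning step behind a heat map of this kind, the Python sketch below counts call locations per grid cell and treats the busiest historical cells as a naive forecast. Nothing in the leak describes the platform's actual implementation; the function names, the `cell_deg` grid size, and the sample coordinates are all hypothetical, and the social media and weather inputs mentioned above are left out.

```python
import math
from collections import Counter


def bin_calls(calls, cell_deg=1.0):
    """Count calls per grid cell.

    calls: iterable of (latitude, longitude) pairs (hypothetical input format).
    cell_deg: grid cell size in degrees (assumed value, for illustration only).
    """
    counts = Counter()
    for lat, lon in calls:
        # Map each coordinate to the integer index of the cell that contains it.
        cell = (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
        counts[cell] += 1
    return counts


def hottest_cells(counts, top_n=3):
    """Naive 'forecast': assume the busiest past cells stay the busiest."""
    return counts.most_common(top_n)


# Made-up sample coordinates, not real call data.
sample_calls = [(40.2, -74.1), (40.7, -74.9), (41.3, -73.5)]
cell_counts = bin_calls(sample_calls)
top_cell = hottest_cells(cell_counts, top_n=1)
```

Even this toy version shows the core design issue: the forecast is only a reflection of where calls were recorded in the past, so any skew in the historical data carries straight through to the map.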

The Surveillance Pipeline

    graph TD
        A[Inputs: 911, SNS, Public CCTV] --> B{DHS AI Hub}
        B --> C[Biometric ID: Gait & Face Recognition]
        B --> D[Predictive Policing: Heat Maps]
        B --> E[Behavioral Analysis: Stress Logic]
        C --> F[Domestic Surveillance Portfolio]
        D --> F
        E --> F
        F --> G[Real-time Patrol Allocation]
        F --> H[Person-of-Interest Shadow Tracking]

Automated Airport Surveillance 2.0

The leaked documents also detail funding for the next generation of Automated Physical Surveillance (APS) at major U.S. airports. This system moves beyond simple facial recognition. New AI models analyze CCTV feeds to track individuals based on:

- Gait Analysis: Identifying individuals by their unique walking tempo and stride.

- Clothing Brand Tracking: Cataloging what brands of clothing and equipment a person carries to build a "retail-preference profile."

- Bio-Logic Stress Monitoring: Using high-definition thermal imaging to detect minute increases in heart rate and skin temperature, flagged as markers for "anomalous stress."

DHS internal memos suggest these systems are "essential for preempting threats," but civil liberties groups warn that they are being deployed without mandatory agency-wide AI audits.

The UK Alternative: A Targeted Regulatory Path

As the US pushes for pervasive surveillance, the United Kingdom is taking a more surgical approach. On March 12, 2026, the UK government published reforms to its National Security and Investment Act (NSIA).

The UK will now remove "off-the-shelf" AI systems (routine business tools) from mandatory security notification. Instead, it is sharpening its focus exclusively on entities researching "High-Capability Agentic AI." This targeted oversight aims to protect critical water and power utilities from state-sponsored cyberattacks while allowing the consumer AI market to scale naturally.

The Machine Bias Dilemma: A Self-Fulfilling Prophecy

The central technical and ethical concern highlighted by the DHS leak remains Machine Bias. Because predictive policing models are trained on historical crime data—which often reflects existing systemic prejudices—they tend to "predict" higher crime rates in minority-dense or economically disadvantaged neighborhoods.

This creates a feedback loop: the AI directs more police to a neighborhood, more arrests are made for minor infractions, and that data then "proves" to the AI that the neighborhood is high-risk. Breaking this loop is currently the primary focus of the 2026 AI Ethics Summit.
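The loop can be made concrete with a small deterministic simulation. The sketch below is a toy model under stated assumptions, not a description of any DHS system: two hypothetical neighborhoods have identical true offense rates, the patrol is always sent to the area with the most recorded incidents, and only the patrolled area generates new records. The names `simulate_feedback`, `north`, and `south` and all numbers are invented for illustration.

```python
def simulate_feedback(recorded, true_rate, days):
    """Toy model of a predictive policing feedback loop.

    recorded: dict of neighborhood -> historical recorded incidents.
    true_rate: dict of neighborhood -> incidents a patrol would record per day.
    days: number of daily patrol allocations to simulate.
    """
    recorded = dict(recorded)  # copy so the caller's data is not modified
    for _ in range(days):
        # The "prediction": patrol wherever the records are highest.
        target = max(recorded, key=recorded.get)
        # Only the patrolled neighborhood produces new records.
        recorded[target] += true_rate[target]
    return recorded


# Assumed starting point: a one-incident gap in the historical record.
history = {"north": 11, "south": 10}
# Assumed identical underlying rates in both neighborhoods.
rates = {"north": 5, "south": 5}

result = simulate_feedback(history, rates, days=30)
print(result)  # {'north': 161, 'south': 10}
```

Although both neighborhoods behave identically, a one-incident difference in the starting data grows into a sixteen-fold gap in the records after 30 days, because the model never collects evidence that could contradict its first guess.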

The Cybersecurity Irony

In perhaps the ultimate irony, the very DHS incubator that was funding these "impenetrable" surveillance systems was itself the victim of a massive data breach. Hackers reportedly used high-level jailbreaking techniques on standard LLM tools (like GPT-5 and Claude) to penetrate the incubator's servers and exfiltrate 150 GB of classified project data.

This underscores the "Force Multiplier" effect of AI: as it becomes the primary shield for national security, it also becomes the most powerful sword for those wishing to dismantle it.

Conclusion

The March 2026 DHS leak has effectively ended the "stealth era" of American AI surveillance. As these systems move from the laboratory to the street corner, the American public must decide: Is a higher level of "homeland security" worth the cost of constant, algorithmic observation?

Investigative report synthesized from the "DHS-Incubator Leak 2026," UK Cabinet Office NSIA briefings, and ACLU digital privacy whitepapers.