The Traffic Controller of LLMs: A Developer’s Guide to AI Agent Routing

Master the art of agent routing. Learn how to design the 'traffic control' layer that directs requests to the right tools, models, and sub-agents.

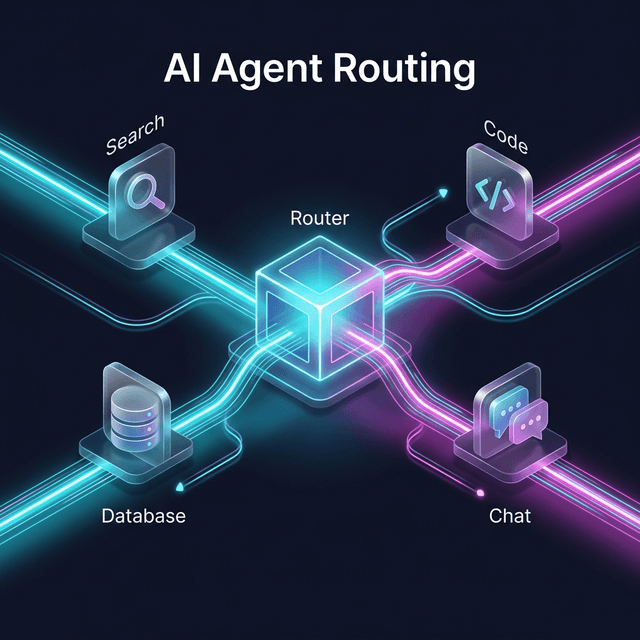

Artificial Intelligence has moved beyond simple input-output loops. We are now building complex multi-agent systems where several specialized entities must work together to solve a single problem. But how does a system know which agent or tool is the best fit for a user’s query?

The answer is Agent Routing.

Routing is the "traffic control" layer of your AI application. It classifies an incoming request and forwards it to the specific function, tool, or specialist agent best equipped to handle it. In this guide, we’ll explore four essential routing patterns with concrete code examples.

Why Do You Need Routing?

You typically implement a routing layer when:

- Multiple Tools: You have specific tools for search, code execution, RAG, or CRM access.

- Specialized Agents: You’ve built a "Researcher" agent, a "Coder" agent, and a "Customer Support" agent.

- Cost/Model Mixing: You want to use small, cheap models (like GPT-4o-mini) for easy tasks and heavy models (like Claude 3.5 Sonnet) for complex reasoning.
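To make the cost/model mixing case concrete, here is a minimal sketch of a tier selector. Everything in it is an assumption for illustration: the tier names, the `estimate_complexity` heuristic, the keyword list, and the 0.5 threshold are hypothetical, and a production system would more likely rely on a trained classifier or evaluation data.

```python
# Hypothetical tier names; substitute whatever models you actually deploy.
CHEAP_MODEL = "small-model"
STRONG_MODEL = "large-model"

# Words that (by assumption) hint at multi-step reasoning.
REASONING_HINTS = ("why", "compare", "explain", "design", "debug", "prove")


def estimate_complexity(text: str) -> float:
    """Crude 0.0-1.0 complexity score from prompt length and keyword hints."""
    words = [w.strip("?,.!") for w in text.lower().split()]
    length_score = min(len(words) / 50, 1.0)  # longer prompts score higher
    hint_score = 1.0 if any(w in REASONING_HINTS for w in words) else 0.0
    return 0.5 * length_score + 0.5 * hint_score


def pick_model(text: str, threshold: float = 0.5) -> str:
    """Send easy requests to the cheap tier and the rest to the strong tier."""
    if estimate_complexity(text) >= threshold:
        return STRONG_MODEL
    return CHEAP_MODEL
```

A heuristic like this is cheap to run on every request, which matters because the router itself should not cost more than the savings it produces.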

1. Rule‑Based Routing (Deterministic)

The simplest form of routing uses explicit, hard-coded rules. If the input matches pattern X, call tool Y. This is ideal for structured domains like order tracking or compliance-sensitive flows, where the same input must always produce the same route.

Example: Order vs. Refund Routing

    # handle_order_status, handle_refund, and handle_general are assumed
    # to be defined elsewhere in your application.

    def is_order_query(text: str) -> bool:
        # Matches inputs that mention "order" and contain at least one digit
        return "order" in text.lower() and any(char.isdigit() for char in text)

    def is_refund_request(text: str) -> bool:
        return "refund" in text.lower()

    def route(text: str) -> str:
        if is_order_query(text):
            return handle_order_status(text)  # Call Order API
        if is_refund_request(text):
            return handle_refund(text)  # Call Refund Workflow
        return handle_general(text)
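As rules accumulate, an ordered rule table can be easier to audit than a chain of `if` statements. The sketch below is one possible layout, not the only one: the stub handlers and the regex patterns are hypothetical, and placing the refund rule first is a design choice made here so that "refund for order 1234" reaches the refund workflow.

```python
import re
from typing import Callable, List, Tuple


# Stub handlers standing in for real integrations (hypothetical).
def handle_order_status(text: str) -> str:
    return "order_status"


def handle_refund(text: str) -> str:
    return "refund"


def handle_general(text: str) -> str:
    return "general"


# Rules are checked top to bottom; the first matching pattern wins.
RULES: List[Tuple[re.Pattern, Callable[[str], str]]] = [
    (re.compile(r"\brefund\b", re.IGNORECASE), handle_refund),
    (re.compile(r"\border\b.*\d", re.IGNORECASE), handle_order_status),
]


def route(text: str) -> str:
    for pattern, handler in RULES:
        if pattern.search(text):
            return handler(text)
    return handle_general(text)  # nothing matched
```

Because precedence is just list order, reviewing or reordering the rules does not require touching the routing function.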

2. Semantic / LLM‑Based Routing

Instead of regex or keyword matching, you use an LLM (or an embedding model) to decide where to send the query based on its meaning. This is far more flexible and can classify vague or conversational inputs that keyword rules would miss.

Example: LLM as Classifier

    # `llm` is assumed to be a chat client whose chat() method returns
    # an object with a string `.content` attribute.

    def classify_route(user_input: str) -> str:
        system_prompt = """
        You are a routing agent. Decide the best destination for the request.
        Return ONLY the label: faq, support, or sales.
        - faq: general info, simple questions
        - support: technical problems, account issues
        - sales: pricing, plans, new purchases
        """
        resp = llm.chat([
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_input},
        ])
        return resp.content.strip().lower()

    # Usage
    label = classify_route("How long is the warranty?")  # Expected: 'faq'
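An LLM classifier can return something other than a bare label, so it usually helps to validate the output before dispatching on it. The following is a minimal sketch under stated assumptions: the `normalize_label` and `safe_classify` helpers are hypothetical, and falling back to `support` is an arbitrary choice you would adapt to your own system.

```python
import re
from typing import Callable

VALID_LABELS = {"faq", "support", "sales"}
FALLBACK_LABEL = "support"  # assumption: unclear requests go to support


def normalize_label(raw: str, fallback: str = FALLBACK_LABEL) -> str:
    """Map raw model output onto a known label, or return the fallback."""
    cleaned = raw.strip().lower().strip(".\"'` ")
    if cleaned in VALID_LABELS:
        return cleaned
    # Tolerate verbose answers such as "Label: sales", but only when
    # exactly one known label appears in the text.
    found = {tok for tok in re.findall(r"[a-z]+", cleaned) if tok in VALID_LABELS}
    if len(found) == 1:
        return found.pop()
    return fallback


def safe_classify(user_input: str, classifier: Callable[[str], str]) -> str:
    """Run any classifier callable and guard against errors or bad labels."""
    try:
        return normalize_label(classifier(user_input))
    except Exception:
        return FALLBACK_LABEL
```

Here `classifier` can be any function that takes the user input and returns the model's raw text, which keeps the validation logic independent of a particular LLM client.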

3. Hierarchical Routing (Manager–Worker)

In this pattern, a "Manager" agent receives the request, breaks it into subtasks, and delegates each part to specialized sub-agents. This is common for complex workflows like Research -> Planning -> Coding -> Testing.

Example: LangChain Manager Pattern

    from langchain_core.tools import tool

    # research_executor and action_executor are assumed to be agent
    # executors built elsewhere, one per specialist sub-agent.

    @tool
    def delegate_to_research(query: str) -> str:
        """Use for information gathering or analysis."""
        return research_executor.invoke({"input": query})["output"]

    @tool
    def delegate_to_actions(query: str) -> str:
        """Use for actions like creating tickets or sending emails."""
        return action_executor.invoke({"input": query})["output"]

    # The router prompt guides the LLM on how to use these tools
    router_prompt = (
        "If the user needs analysis, call delegate_to_research. "
        "If they need an action performed, call delegate_to_actions."
    )
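The delegation loop itself is independent of any framework. Here is a framework-free sketch of the manager step, with a toy planner standing in for the LLM that would normally break the request into subtasks. The names `run_manager`, `toy_planner`, and the worker keys are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Worker = Callable[[str], str]
Plan = List[Tuple[str, str]]  # (worker name, subtask description)


def run_manager(request: str,
                planner: Callable[[str], Plan],
                workers: Dict[str, Worker]) -> List[str]:
    """Ask the planner for subtasks, then delegate each to the named worker."""
    results = []
    for worker_name, subtask in planner(request):
        worker = workers.get(worker_name)
        if worker is None:
            # Record the gap instead of crashing on an unknown worker name.
            results.append(f"[no worker named {worker_name!r} for: {subtask}]")
            continue
        results.append(worker(subtask))
    return results


# Toy planner and workers so the sketch runs without any LLM.
def toy_planner(request: str) -> Plan:
    return [
        ("research", f"gather background on: {request}"),
        ("actions", f"open a ticket for: {request}"),
    ]


workers: Dict[str, Worker] = {
    "research": lambda task: f"research done ({task})",
    "actions": lambda task: f"action done ({task})",
}

results = run_manager("login bug", toy_planner, workers)
```

Handling an unknown worker name explicitly matters in practice, because an LLM planner can invent a destination that does not exist.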

4. Auction / Bidding‑Based Routing

In advanced systems, all candidate agents see the task and "bid" with a score representing their confidence or cost. The router then picks the agent with the highest utility score.

Example: Confidence-Based Bidding

    from typing import Callable, List

    class CandidateAgent:
        def __init__(self, name: str, scorer: Callable[[str], float], runner: Callable[[str], str]):
            self.name = name
            self.scorer = scorer  # Returns 0.0 to 1.0 confidence
            self.runner = runner

    def route_with_auction(user_input: str, agents: List[CandidateAgent]) -> str:
        # Collect one bid per agent, keyed by agent name
        scores = {a.name: a.scorer(user_input) for a in agents}
        # Highest score wins; ties go to the agent listed first
        chosen_name = max(scores, key=scores.get)
        return next(a for a in agents if a.name == chosen_name).runner(user_input)
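The example above bids on confidence alone. If bids should also reflect cost, one option is to combine both into a single utility value and refuse to route when no bid is good enough. This is a sketch under assumptions: the `Bid` structure, the linear utility formula, the `cost_weight`, and the `min_utility` threshold are all hypothetical choices.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Bid:
    agent: str
    confidence: float  # 0.0 to 1.0
    cost: float        # estimated cost in arbitrary units


def utility(bid: Bid, cost_weight: float = 0.1) -> float:
    """Assumed linear trade-off: confidence minus a weighted cost penalty."""
    return bid.confidence - cost_weight * bid.cost


def pick_winner(bids: List[Bid],
                min_utility: float = 0.2,
                cost_weight: float = 0.1) -> Optional[str]:
    """Return the best agent name, or None if no bid clears the threshold."""
    if not bids:
        return None
    best = max(bids, key=lambda b: utility(b, cost_weight))
    if utility(best, cost_weight) < min_utility:
        return None  # caller should fall back to a default route
    return best.agent
```

Returning `None` instead of always picking a winner lets the caller send low-confidence requests to a default handler or a human.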

Best Practices & Pitfalls

Design Tips

- Layered Approach: Start with rule-based routing for critical paths, then layer in LLM routing for the messy "long tail" of user inputs.

- Observability: Log every routing decision (input, chosen agent, and scores) to evaluate if the "Traffic Controller" is doing its job.

- Task Separation: Avoid having the router also answer the question. Its only job should be to point the ship in the right direction.
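The layered approach and the observability tip combine naturally in one small function. The sketch below assumes a hypothetical `rule_layer` with two keyword rules and takes the LLM classifier as a plain callable. It only returns a label and never answers the question itself.

```python
import logging
from typing import Callable, Optional

logger = logging.getLogger("router")


def rule_layer(text: str) -> Optional[str]:
    """Deterministic rules for critical paths; None when no rule applies."""
    lowered = text.lower()
    if "refund" in lowered:
        return "refund"
    if "order" in lowered and any(ch.isdigit() for ch in lowered):
        return "order_status"
    return None


def layered_route(text: str, llm_classifier: Callable[[str], str]) -> str:
    """Try rules first, fall back to the classifier, and log the decision."""
    label = rule_layer(text)
    source = "rule"
    if label is None:
        label = llm_classifier(text)
        source = "llm"
    # Log input, chosen label, and which layer decided, for later evaluation.
    logger.info("route decision: input=%r label=%s source=%s", text, label, source)
    return label
```

Logging which layer made the decision makes it easy to measure how much traffic the rules absorb before the LLM is ever called.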

Common Mistakes

- Over‑routing: Don't send a simple "Hello" through a 5-step hierarchical research chain. It creates unnecessary latency and cost.

- Unclear Ownership: Ensure your agent descriptions are distinct. If two agents can both "handle refunds," the router will oscillate or behave inconsistently.
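One rough way to catch unclear ownership early is to compare agent descriptions for overlap before deploying them. The check below is a simple heuristic sketch, not a substitute for evaluation: the keyword extraction, the Jaccard similarity measure, and the 0.5 threshold are assumptions chosen for illustration.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple


def keyword_set(description: str) -> Set[str]:
    """Lowercased words longer than three characters, punctuation stripped."""
    return {w.strip(".,").lower() for w in description.split() if len(w) > 3}


def overlapping_agents(descriptions: Dict[str, str],
                       threshold: float = 0.5) -> List[Tuple[str, str, float]]:
    """Flag agent pairs whose descriptions share most of their keywords."""
    flagged = []
    for (name_a, desc_a), (name_b, desc_b) in combinations(descriptions.items(), 2):
        keys_a, keys_b = keyword_set(desc_a), keyword_set(desc_b)
        union = keys_a | keys_b
        score = len(keys_a & keys_b) / len(union) if union else 0.0  # Jaccard
        if score >= threshold:
            flagged.append((name_a, name_b, score))
    return flagged


descriptions = {
    "billing": "Handles refunds and invoices",
    "support": "Handles refunds and invoices quickly",
    "research": "Searches papers",
}
flagged = overlapping_agents(descriptions)
```

In this toy data, `billing` and `support` are flagged because their descriptions are nearly identical, which is exactly the situation where a router tends to behave inconsistently.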

Choosing Your Strategy

| Scenario | Recommended Strategy |
|---|---|
| Simple FAQ vs. Tickets | Rule-based or LLM Classification |
| Strict Compliance | Rule-based + Narrow LLM Routing |
| Complex R&D Workflows | Hierarchical Manager–Worker |
| Massive multi-model systems | Auction/Bidding |

By mastering these routing patterns, you can evolve a single monolithic chatbot into a robust, observable, and high-performance multi-agent platform.